About Me

Michael Zucchi

B.E. (Comp. Sys. Eng.)

also known as Zed

to his mates & enemies!

< notzed at gmail >

< fosstodon.org/@notzed >

socles demos

I finally got off my fat arse - or is that sat on it further enlargening[sic] it - and tied up some of the test driver code I have for socles into a set of demos.

I also implemented the colour mode for the DCT denoising algorithm. Overall it's still a little slow, i.e. not fast enough for real-time video. One of these days I'll get around to the complex wavelet version, which should be a lot faster and can also sharpen. I haven't been able to suss out DCT sharpening, and so far my attempts add too many artefacts to be useful (i.e. a pixel-level chessboard pattern).

The demos so far are:

- AdaptiveBlur

- An interactive window that shows an experimental algorithm I came up with some time ago for de-noising. It uses a Sobel filter to detect edges, then uses that to progressively blend between a blurred and a non-blurred image. Works ok sometimes.

- ConvolveNonSeparable

- Simple non-separable convolution that blurs an image.

- ConvolveSeparable

- Separable convolution to do the same thing (and demonstrates the code is broken atm - demo was broken, fixed).

- DCT8x8Mono, DCT8x8Colour

- Interactive DCT based denoise demo for mono/colour images.

- WebcamFX

- Another old interactive demo I wrote which uses Video4Linux to access a webcam and apply a bunch of effects including KLT motion detection and Viola-Jones face detection. It also shows the first half of a low-overhead video display path: the GPU does the colour conversion from raw frames. Well, as low as possible with v4l4j anyway.
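For anyone wondering why the separable version is worth the bother: a 2D kernel that is the outer product of a 1D kernel can be applied as a horizontal pass followed by a vertical pass, costing 2(2r+1) multiplies per pixel instead of (2r+1)². Below is a minimal single-threaded Java sketch of both on a mono float image with clamped edges. `ConvolveSketch` and its methods are hypothetical names for illustration, not the socles API; socles does this work in OpenCL.

```java
/**
 * Sketch: non-separable vs separable convolution of a mono float image
 * (row-major, w x h). Edge pixels are clamped. Hypothetical helper class.
 */
public final class ConvolveSketch {

    /** Clamp a coordinate into [0, n-1]. */
    static int clamp(int v, int n) {
        return v < 0 ? 0 : (v >= n ? n - 1 : v);
    }

    /** Non-separable: k is a (2r+1)x(2r+1) kernel, row-major. */
    public static float[] convolve2D(float[] src, int w, int h, float[] k, int r) {
        int kw = 2 * r + 1;
        float[] dst = new float[w * h];
        for (int y = 0; y < h; y++)
            for (int x = 0; x < w; x++) {
                float sum = 0;
                for (int j = -r; j <= r; j++)
                    for (int i = -r; i <= r; i++)
                        sum += k[(j + r) * kw + (i + r)]
                             * src[clamp(y + j, h) * w + clamp(x + i, w)];
                dst[y * w + x] = sum;
            }
        return dst;
    }

    /** Separable: horizontal pass with k1, then vertical pass with k1. */
    public static float[] convolveSeparable(float[] src, int w, int h, float[] k1, int r) {
        float[] tmp = new float[w * h];
        float[] dst = new float[w * h];
        for (int y = 0; y < h; y++)
            for (int x = 0; x < w; x++) {
                float sum = 0;
                for (int i = -r; i <= r; i++)
                    sum += k1[i + r] * src[y * w + clamp(x + i, w)];
                tmp[y * w + x] = sum;
            }
        for (int y = 0; y < h; y++)
            for (int x = 0; x < w; x++) {
                float sum = 0;
                for (int j = -r; j <= r; j++)
                    sum += k1[j + r] * tmp[clamp(y + j, h) * w + x];
                dst[y * w + x] = sum;
            }
        return dst;
    }

    /** Outer product k1 * k1^T: the equivalent non-separable kernel. */
    public static float[] outer(float[] k1) {
        int n = k1.length;
        float[] k = new float[n * n];
        for (int j = 0; j < n; j++)
            for (int i = 0; i < n; i++)
                k[j * n + i] = k1[j] * k1[i];
        return k;
    }
}
```

Both paths should agree to within float rounding, e.g. `convolve2D(img, w, h, outer(k1), r)` vs `convolveSeparable(img, w, h, k1, r)`.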

They're in the soclesdemo sub-module in socles' cvs.
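The AdaptiveBlur idea from the list above is simple enough to show on the CPU. This is a minimal Java sketch under my own assumptions, not the demo's code: a 3x3 box blur stands in for whatever blur the demo uses, and the Sobel gradient magnitude is divided by a hypothetical `edgeScale` parameter and clamped to [0,1]. That value then blends from the blurred pixel (flat areas) to the original pixel (strong edges).

```java
/**
 * Sketch of an edge-adaptive blur: Sobel magnitude decides, per pixel,
 * how much of the original vs the blurred image to keep.
 * The box blur and 'edgeScale' normalisation are assumptions.
 */
public final class AdaptiveBlurSketch {

    /** Read a pixel with clamped coordinates. */
    static float at(float[] img, int w, int h, int x, int y) {
        x = Math.max(0, Math.min(w - 1, x));
        y = Math.max(0, Math.min(h - 1, y));
        return img[y * w + x];
    }

    /** Simple 3x3 box blur. */
    public static float[] boxBlur3(float[] src, int w, int h) {
        float[] dst = new float[w * h];
        for (int y = 0; y < h; y++)
            for (int x = 0; x < w; x++) {
                float s = 0;
                for (int j = -1; j <= 1; j++)
                    for (int i = -1; i <= 1; i++)
                        s += at(src, w, h, x + i, y + j);
                dst[y * w + x] = s / 9f;
            }
        return dst;
    }

    /** Sobel gradient magnitude at (x, y). */
    public static float sobelMagnitude(float[] s, int w, int h, int x, int y) {
        float gx = -at(s, w, h, x - 1, y - 1) - 2 * at(s, w, h, x - 1, y) - at(s, w, h, x - 1, y + 1)
                 +  at(s, w, h, x + 1, y - 1) + 2 * at(s, w, h, x + 1, y) + at(s, w, h, x + 1, y + 1);
        float gy = -at(s, w, h, x - 1, y - 1) - 2 * at(s, w, h, x, y - 1) - at(s, w, h, x + 1, y - 1)
                 +  at(s, w, h, x - 1, y + 1) + 2 * at(s, w, h, x, y + 1) + at(s, w, h, x + 1, y + 1);
        return (float) Math.sqrt(gx * gx + gy * gy);
    }

    /** edgeScale: gradient magnitude at which the original pixel is kept as-is. */
    public static float[] adaptiveBlur(float[] src, int w, int h, float edgeScale) {
        float[] blurred = boxBlur3(src, w, h);
        float[] dst = new float[w * h];
        for (int y = 0; y < h; y++)
            for (int x = 0; x < w; x++) {
                float e = Math.min(1f, sobelMagnitude(src, w, h, x, y) / edgeScale);
                int k = y * w + x;
                dst[k] = e * src[k] + (1f - e) * blurred[k];
            }
        return dst;
    }
}
```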

Hmm, another week nearly down. I've been reading lots of papers and trying to suss out some fiddly crap for work, so this stuff has been a nice distraction. The work stuff is finally going somewhere, so it might keep me busy for a bit.

GC, finalisers

So I was doing some memory profiling the other day (using NetBeans' excellent profiler - boy, I could've used this 10 years ago) to try to track down some resource leaks, and I noticed that xuggle was really exercising the system heavily.

So it seems I might look at moving to use jjmpeg in my client's application fairly soon. There are some other reasons as well: i.e. not being able to run in a 64-bit JVM on Microsoft Windows is starting to become a problem, and the bundled ffmpeg is just a bit out of date.

Since I haven't implemented memory handling completely in jjmpeg I went about looking for how to do it 'properly'. I was just going to use finalisers, but then I came across this article on java finalisers which said it probably wasn't a good idea.

I was going to have a short look this morning but suddenly it was 4 hours later, and although I had something which works I'm not sure yet that I like it. It seems the cleanest way to implement the suggestion of using weak references while mixing the auto-generated and hand-crafted code the way I want, so I will probably end up running with it. The public API didn't need to change.

Previously, the binding worked with an object class hierarchy something like this

AVNative [

ByteBuffer p (points to allocated/mapped native memory)

]

+- AVFormatContextAbstract [

Generated field accessors and native methods

Most methods are object methods

]

+- AVFormatContext [

Public factory methods/constructors

Hand-coded specific methods

Hand-coded helper native methods

Hand-coded finalise/dispose methods

]

The new structure:

WeakReference<AVObject>

+- AVNative [

ByteBuffer p pointing to native memory

internal dispose() method

weak reference queue/cleanup as from article above

Weak reference is AVObject

]

+- AVFormatContextNativeAbstract [

Generated field accessors and native methods

All methods and field accessors are static

]

+- AVFormatContextNative [

Hand-coded helper native methods

Implements native resource dispose

]

Together with

AVObject [

AVNative n (the pointer to the native wrapper object)

public dispose method

]

+- AVFormatContextAbstract [

Generated public access methods which use AVFormatContextNative(Abstract) methods.

]

+- AVFormatContext [

Public factory methods/constructors

Hand-coded specific methods

]

So yeah - a bit more complicated, and it requires 2 Java objects for each instance (often 3 including the C-side instance it's wrapping), as well as the overhead of the WeakReference instance data and the list entry for tracking the references. The extra layer of indirection also adds another method invocation/stack frame to every method call.

On the other hand, it lets the client code call dispose() when it wants to, and if it forgets, dispose will automatically be called eventually. It also makes it obvious in the code where dispose needs to sit.
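Roughly, the pattern looks like this. It is a minimal Java sketch assuming the layout described above, not the jjmpeg source: `AVNative` extends `WeakReference<AVObject>` and is registered with a `ReferenceQueue`, a static set keeps the `AVNative` objects themselves reachable until they are disposed, and `freeNative()` is a hypothetical hook where the real binding would make its JNI call.

```java
import java.lang.ref.ReferenceQueue;
import java.lang.ref.WeakReference;
import java.nio.ByteBuffer;
import java.util.Collections;
import java.util.HashSet;
import java.util.Set;

/** Sketch of weak-reference based cleanup instead of finalize(). Illustrative only. */
public final class WeakDisposeSketch {

    /** Holds the native pointer; only weakly refers to its public AVObject wrapper. */
    public abstract static class AVNative extends WeakReference<AVObject> {
        private static final ReferenceQueue<AVObject> QUEUE = new ReferenceQueue<>();
        /** Strong refs so the AVNative objects are not collected before disposal. */
        private static final Set<AVNative> LIVE = Collections.synchronizedSet(new HashSet<>());

        private ByteBuffer p;

        protected AVNative(AVObject owner, ByteBuffer p) {
            super(owner, QUEUE);
            this.p = p;
            LIVE.add(this);
        }

        /** Hypothetical hook: subclass frees the native resource here. */
        protected abstract void freeNative(ByteBuffer p);

        /** Idempotent, so explicit dispose() and queue cleanup can both call it. */
        public synchronized void dispose() {
            if (p != null) {
                freeNative(p);
                p = null;
            }
            LIVE.remove(this);
        }

        public synchronized boolean isDisposed() {
            return p == null;
        }

        /** Dispose natives whose AVObject has been garbage collected. */
        public static int cleanup() {
            int count = 0;
            AVNative n;
            while ((n = (AVNative) QUEUE.poll()) != null) {
                n.dispose();
                count++;
            }
            return count;
        }
    }

    /** Public wrapper handed to client code. */
    public static class AVObject {
        protected AVNative n;

        public void dispose() {
            n.dispose();
        }
    }
}
```

Something still has to call `AVNative.cleanup()` now and then, e.g. whenever a new native object is allocated; a daemon thread blocking on `ReferenceQueue.remove()` is the other obvious choice. The important bit is that the `AVNative` never holds a strong reference to its `AVObject`, otherwise the wrapper could never be collected.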

As usual it's a question of trade-offs. If the article is correct then presumably these trade-offs are worth it.

In this case the whole point of using jjmpeg is to avoid numerous allocations every frame anyway: I can allocate working and output buffers once and just use them directly. So the actual number of objects is quite small and they aren't created very often, and I suspect that either mechanism would work about as well as the other.

Well this distraction has blown my morning away; I'd better leave it for now so I can clock up some work hours after lunch.

Update: I figured I'd gone too far down this route to do anything other than keep it. I've checked this in now, as well as a bunch of other stuff described on the project page. Update 2: Oracle keeps breaking links, but I've updated the pointer. I'm looking at this again (September 2012) because of some issues in jjmpeg.

OpenCL DCT Denoise

I've just checked in an OpenCL implementation of the DCT de-noising algorithm I mentioned previously. I've only done the mono version so far.

It's not terribly fast - 10ms wall-clock for a 512x512 mono image - and given that it requires 64 DCTs per 8x8 block and needs to accumulate the results, it probably never will be.
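To put that cost in numbers, assuming 8×8 patches at a 1-pixel stride over an N×N image (each patch needing a forward and an inverse DCT):

$$
P = (N - 8 + 1)^2, \qquad P\big|_{N=512} = 505^2 = 255\,025
$$

$$
\frac{P}{(N/8)^2} = \frac{255\,025}{4\,096} \approx 62.3 \approx 64 \text{ patches per } 8\times 8 \text{ block}
$$

Each interior pixel is covered by 8 × 8 = 64 windows, so its output is the average of 64 separate reconstructions.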

The kernel source. Update: Colour version implemented now.

It's beaten me. For now.

I should've stayed outside in the sun today gardening - but curiosity got the better of me. I hope the (absolutely stunning) weather continues tomorrow, otherwise I've blown it on nothing ...

I tried working on the AMD performance of the Viola & Jones detector in socles: I tried a whole bunch of stuff, from copying the image tiles pre-scaled (as summed area table) to local memory, to completely re-arranging the data structures so they are workgroup aligned, to even trying the cpu single-thread-per-location version.

I got some minor improvement, the most coming from copying the tile to local store and removing some of the calculations (since it doesn't need to scale the rects): but that only took a simple test case from about 25ms to 20ms. Barely noticeable in my webcam test harness.

I think the problem is with the fact it has to read so much data for each single test. It requires 3-4 uint4's just to describe the test, and 8-12 uint texture lookups for the summed area table lookups. The cascade I have has ~6 400 regions to test grouped in ~3 000 features, and although most aren't tested it's just a lot of data. It's too much for constant memory for example.
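For reference, this is the summed area table trick in plain Java. It is a sketch, not the socles kernel: after one pass to build the table, any rectangle sum costs 4 reads, so a 2- or 3-rectangle Haar-like feature costs the 8-12 lookups mentioned above. The `{x, y, width, height}` rectangle layout and the `feature()` helper are hypothetical, and the variance normalisation a real Viola-Jones detector applies is left out.

```java
/**
 * Sketch of a summed area table (integral image): any axis-aligned
 * rectangle sum costs 4 table reads.
 */
public final class SummedAreaTable {
    private final int stride;  // w + 1
    private final int[] sat;   // (w+1) x (h+1); first row and column are zero

    public SummedAreaTable(int[] img, int w, int h) {
        this.stride = w + 1;
        this.sat = new int[(w + 1) * (h + 1)];
        for (int y = 0; y < h; y++) {
            int rowSum = 0;
            for (int x = 0; x < w; x++) {
                rowSum += img[y * w + x];
                // sum above this row + running sum of this row
                sat[(y + 1) * stride + (x + 1)] = sat[y * stride + (x + 1)] + rowSum;
            }
        }
    }

    /** Sum of pixels in [x, x+rw) x [y, y+rh): 4 reads. */
    public int rectSum(int x, int y, int rw, int rh) {
        int s = stride;
        return sat[(y + rh) * s + (x + rw)] - sat[y * s + (x + rw)]
             - sat[(y + rh) * s + x] + sat[y * s + x];
    }

    /**
     * Weighted sum of 2-3 rectangles {x, y, width, height} offset by the
     * window origin (ox, oy): 8-12 reads. Hypothetical layout.
     */
    public float feature(int[][] rects, float[] weights, int ox, int oy) {
        float v = 0;
        for (int i = 0; i < rects.length; i++) {
            int[] r = rects[i];
            v += weights[i] * rectSum(ox + r[0], oy + r[1], r[2], r[3]);
        }
        return v;
    }
}
```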

With a fix to use the atomic counters AMD hardware provides, it's at least now in the same order of magnitude as the nvidia hardware, but still 2-4x slower.

Maybe ... if the stages were broken up into smaller parts it could work more efficiently, but it does seem a pretty long shot to me as the problem remains with the sheer amount of stuff that needs to be loaded for each test.

Time probably better spent on something else.

Ho hum.

Have a new AMD card - HD 6950 - for my workstation, need the catalyst driver for the OpenCL stuff. I use XFCE so the gnome3 incompatibilities are of no interest to me.

Couldn't get the driver built for FC13 (all sorts of bugs/problems with the rpm and I really just couldn't be fagged with it all late at night), so `upgraded' to FC15 ...

It kind of works, but is really slow in really weird ways - when changing virtual desktops one window refreshes at 'cpu speed'. glxgears runs @ 6000fps, which is really way too slow: I'm getting 10KFPS on the rather older 5770 card in my other older/slower machine. Although fgl_glxgears is twice as fast on this new card. Using the AMD CPU backend for OpenCL causes more interference with graphics updates than using the GPU backend(!) The other machine is using catalyst 10.12 on fedora 14, the new one is 11.9 on fedora 15 ...

I've blacklisted the kernel radeon module and whatnot. I'm using xinerama - i tried without it and it was even slower.

I think there's just something wrong with the whole system as everything feels rather sluggish - or is that just the price of 'progress'? I'm trying a yum update (all 1GB of it) and if that doesn't work I might have to try something more drastic. Obviously the upgrade was a risky choice, but one would hope having the right kernel and X driver would be enough for the video driver ...

Only 1000 packages to go now ...

Later ...

Well, it's still really slow. I tried an older driver release (on windows - hard to find them for fedora) but it wouldn't support the card. On windows, part of my application runs about 2x faster by wall-clock than on linux: which is pretty significant since much of the time is just waiting around for the video frame to arrive, so the real speed-up is presumably more than that. Needless to say the desktop is smoother too.

I also tried the Viola-Jones detector from socles. Ouch, this really really struggles - about 100x slower than running on nvidia hardware. I tried a few things that didn't make any noticeable difference, apart from removing the single rarely-used atomic_inc, which made it about 30x faster - but even with that huge increase it was still well behind the GTX 480.

I think probably I will have to try some other possible ideas to deal with this:

- Scale the images so that each sliding scan reads adjacent locations (i.e. coalesced reads), and go back to 1-thread-per-test/cascade.

- Pre-calculate the scaled weights/regions on the cpu so they can be stored in constant memory.

- Cache the region/weight information in LS.

- Unpack the region/weight info into a flat structure so it is read sequentially rather than walking a tree stored in an array.

- ? separate the sum calculations from the weight calculations. By doing less work there might be more locality of reference/chance for any cache to function. This is just another way to try the first point I guess.

- Use atomic counters if available since global atomics are obviously a huge no-no on cayman.
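The 'flat structure' idea might look something like the following Java sketch. The array layout (up to 3 rectangles per feature, per-feature left/right votes summed and compared against a stage threshold, rejection on the first failed stage) is my assumption of a typical Viola-Jones cascade, not the socles format, and variance normalisation is again omitted. The point is only that every array is walked strictly in order, so reads are sequential rather than hopping around a tree.

```java
/**
 * Sketch of a cascade stored as flat, sequentially-read arrays.
 * Hypothetical layout:
 *   stageCount[s], stageThresh[s]   features in / pass threshold of stage s
 *   rects[f*12 + r*4 + {0..3}]      rect r of feature f: x, y, w, h (w == 0 means unused)
 *   weights[f*3 + r]                weight of rect r of feature f
 *   featThresh[f], leftVal[f], rightVal[f]
 */
public final class FlatCascade {
    final int[] stageCount;
    final float[] stageThresh;
    final int[] rects;
    final float[] weights, featThresh, leftVal, rightVal;

    public FlatCascade(int[] stageCount, float[] stageThresh, int[] rects, float[] weights,
                       float[] featThresh, float[] leftVal, float[] rightVal) {
        this.stageCount = stageCount;
        this.stageThresh = stageThresh;
        this.rects = rects;
        this.weights = weights;
        this.featThresh = featThresh;
        this.leftVal = leftVal;
        this.rightVal = rightVal;
    }

    /** 4-read rectangle sum from a zero-padded (w+1)x(h+1) summed area table. */
    static int rectSum(int[] sat, int stride, int x, int y, int w, int h) {
        return sat[(y + h) * stride + (x + w)] - sat[y * stride + (x + w)]
             - sat[(y + h) * stride + x] + sat[y * stride + x];
    }

    /** True if the window at (ox, oy) passes every stage. */
    public boolean evaluate(int[] sat, int stride, int ox, int oy) {
        int f = 0; // feature index advances monotonically across all stages
        for (int s = 0; s < stageCount.length; s++) {
            float stageSum = 0;
            for (int end = f + stageCount[s]; f < end; f++) {
                float v = 0;
                for (int r = 0; r < 3; r++) {
                    int b = f * 12 + r * 4;
                    if (rects[b + 2] == 0) continue; // unused rect slot
                    v += weights[f * 3 + r] * rectSum(sat, stride,
                            ox + rects[b], oy + rects[b + 1], rects[b + 2], rects[b + 3]);
                }
                stageSum += v < featThresh[f] ? leftVal[f] : rightVal[f];
            }
            if (stageSum < stageThresh[s]) return false; // early rejection
        }
        return true;
    }
}
```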

I had also better check it on my HD 5770 which runs the fc14 desktop very snappy and runs OpenCL ok to verify it isn't just all down to a shoddy driver (Hmm, now I think about it, I haven't tried OpenCL on it since 'upgrading' to fc14 from a hacked up ancient gnewsense).

glxgears does start to slow down on the 5770 vs the 6950 as you make the window bigger - so the hardware itself is somewhat faster. The problems must be in the overhead of the os/drivers. No question that ATI aren't doing a great job here but on the other hand, the xorg, fdo, and linux guys seem to change their minds about driver/graphics architecture every 6 months too ...

I was looking forward to playing with some new hardware, but apart from the sluggish GUI and having to `upgrade' the system, most of the application I work on no longer functions as critical routines are returning broken results. Not fun. Some of these are going to turn out to be bugs, but I've already found problems with the compiler (e.g. commenting out all of the #pragma unroll directives fixed a bunch of stuff).

Well as the boss said, these things are so cheap it probably isn't worth my time (or his money!) for me trying to fix these issues ...

Later Still ...

Well, I seem to have most of the code working again. Apart from the #pragma unroll error, the problems seem to have been my own fault.

First, a bunch of queue synchronisation problems: data being over-written before it was fully processed, for example. NVIDIA's libraries are more aggressive about starting work without an explicit clFlush(). And apart from that I just made some mistakes along the way which weren't exposed until now.

And one odd one which took a while to track down: passing the same image as both a read_only image and a write_only one. I knew this was suss when I did it, but 'it worked' so I left it there: I had it in the back of my mind that this was the sort of thing I should check, but I couldn't remember where I'd done it.

I still have newly added stability issues - the dreaded and meaningless 'error 134': but in the past these have usually been bugs too. Although not always.

So perhaps the drivers aren't so bad after all; although they are still too slow on linux.

I guess I should've stuck to one of my rules of thumb of late: if you think you're getting the wrong result from the compiler, you just haven't checked your code closely enough yet.

DCT denoising

Ok, now the weekend's over, time to calm down and stop ranting ... ;-) Bummer about Australia losing though. Apart from some real shockers right from the kick-off, they did calm down and start playing fairly well. When they did have a good run - and they had a few - they were let down badly by not enough support at the breakdown. Still, NZ were deserved winners ... And channel 9's race-caller sucked the whole way through.

I just found this very well put together site about using the discrete cosine transform (DCT) to do threshold de-noising in a manner similar to the wavelet threshold denoising and sharpening I mentioned before.

DCT Denoising

Very slick, complete with well-formatted mathematics that puts most microsoft-word based papers to shame, GPL3 source and an on-line demo!

I downloaded the code and modified it not to add the noise and tried it myself on Lenna:

The results are effectively the same as with the complex DTCWT version for moderate settings - visually even the artefacts it introduces are the same.

In the form provided, however, it is somewhat more computationally intensive - its sliding window is offset by single pixels, and the way the C++ is written isn't the most efficient. I wonder how well it would work with a Hanning window and 4 pixel offsets. I also wonder if it can sharpen - from a quick search it looks like it can.

Very interesting, and it also works with colour images in smarter ways than just processing each channel separately.

When I get the time I'll look at coding this up for ImageZ and socles, although I just noticed blogger mucked up something else - looking at images - so the threshold of having to do something about that is ever approaching (I found the option to disable 'lightbox' mode).

Update: Just another advert for Java. It looked simple enough so I coded up a version in Java using an 8x8 DCT, and single-threaded it runs over 3x faster than the C++ version including the JVM startup, or over 4x once it's going. Rather than generate all 255 025(!) patches, transform, threshold, inverse, and merge, it fully processes a single patch at a time: requiring that much less DCT memory (i.e. rather a lot - over 62MB less). So that's 0.9s vs 3.9s for this 512x512 mono image. Although I can't fathom why my version needs 1/2 the threshold to give a similar result ...

Update: See follow-on post where I mention implementing it in OpenCL for socles.

Update: I've now added it to ImageZ. DCT8Denoise is the main entry point. I changed it to work with separate colour planes rather than planes stored in a single array, just to make it easier to invoke from ImageZ. It's only single-threaded atm.
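A minimal Java sketch of the patch-at-a-time approach described above, for a mono image stored row-major. The orthonormal 8x8 DCT-II is done as two small matrix products, coefficients below a caller-supplied `threshold` are zeroed, and each reconstructed patch is added straight into an accumulator with a per-pixel count, so no per-patch coefficient storage is needed. It is not the ImageZ `DCT8Denoise` code and makes no attempt at the speed-ups mentioned above. Two assumptions to flag: the DC term is always kept, and the threshold scale is left to the caller (hard thresholds of around 3σ for noise level σ are a common starting point).

```java
/**
 * Sketch: sliding-window (1-pixel stride) 8x8 DCT hard-threshold denoising
 * of a mono image. Each patch is transformed, thresholded, inverted and
 * accumulated immediately.
 */
public final class DCT8DenoiseSketch {
    private static final int N = 8;
    /** Orthonormal DCT-II basis: C[k][n]. */
    private static final double[][] C = new double[N][N];

    static {
        for (int k = 0; k < N; k++)
            for (int n = 0; n < N; n++)
                C[k][n] = (k == 0 ? Math.sqrt(1.0 / N) : Math.sqrt(2.0 / N))
                        * Math.cos(Math.PI * (2 * n + 1) * k / (2.0 * N));
    }

    /** Forward: out = C * in * C^T.  Inverse: out = C^T * in * C. */
    private static void transform2D(double[][] in, double[][] out, boolean inverse) {
        double[][] tmp = new double[N][N];
        for (int i = 0; i < N; i++)
            for (int j = 0; j < N; j++) {
                double s = 0;
                for (int k = 0; k < N; k++)
                    s += (inverse ? C[k][i] : C[i][k]) * in[k][j];
                tmp[i][j] = s;
            }
        for (int i = 0; i < N; i++)
            for (int j = 0; j < N; j++) {
                double s = 0;
                for (int k = 0; k < N; k++)
                    s += tmp[i][k] * (inverse ? C[k][j] : C[j][k]);
                out[i][j] = s;
            }
    }

    /** Denoise a w x h mono image; coefficients with |c| < threshold are zeroed. */
    public static float[] denoise(float[] src, int w, int h, double threshold) {
        double[] acc = new double[w * h];
        int[] count = new int[w * h];
        double[][] patch = new double[N][N];
        double[][] coef = new double[N][N];
        for (int py = 0; py + N <= h; py++)
            for (int px = 0; px + N <= w; px++) {
                for (int y = 0; y < N; y++)
                    for (int x = 0; x < N; x++)
                        patch[y][x] = src[(py + y) * w + px + x];
                transform2D(patch, coef, false);
                for (int y = 0; y < N; y++)
                    for (int x = 0; x < N; x++)
                        if ((x | y) != 0 && Math.abs(coef[y][x]) < threshold)
                            coef[y][x] = 0; // hard threshold; DC always kept
                transform2D(coef, patch, true);
                for (int y = 0; y < N; y++)
                    for (int x = 0; x < N; x++) {
                        int k = (py + y) * w + px + x;
                        acc[k] += patch[y][x];
                        count[k]++;
                    }
            }
        float[] dst = new float[w * h];
        for (int k = 0; k < dst.length; k++)
            dst[k] = count[k] > 0 ? (float) (acc[k] / count[k]) : src[k];
        return dst;
    }
}
```

With `threshold` at 0 nothing is zeroed and the image comes back unchanged (up to rounding), which is a handy sanity check before tuning.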

Well ...

Just when you thought it couldn't get any worse, channel 9 - who hardly showed any of the world cup to start with - have what sounds like a horse-race caller doing the commentary on the AU/NZ semi-final. He does know the players at least, but doesn't seem to know the rules, or that we can see the same pictures he can. So much for a bit of atmosphere; I had to turn the sound right down to be able to focus on the game and not this dickhead.

You don't realise how much the commentators make the game until you get a complete fuck-wit like this.

The one bright spot of the channel 9 coverage of the whole world cup - that they didn't provide their own wanker commentators - eclipsed in a moment.

Australia aren't looking like winners here after the first half, but there isn't much surprise there. Given a bit of bad luck and some very poor execution they're lucky they're still in it. NZ have made too many mistakes too.

Goodbye google news

Well, it's been a weekend for disappointment. Damn Wales were unlucky ... I'm actually not sure who I want to win out of New Zealand and Australia today - the kiwis just demand so much respect it's hard to barrack against them; I'll have a few drinks and go for whoever is playing the best I think. If they're both on their game it could be a real cracker of a match. But I digress ...

So, again google has decided to muck about with something which pretty much didn't need fixing. Last time they messed with news.google.com.au I wasn't particularly happy, but I continued to use it fairly regularly as the changes were just cosmetic usability issues. This time I think the changes are going to be too much on top of a few other reasons I'll detail later.

TBH I can't believe I'm devoting so much time to such a post - it really doesn't mean that much to me on its own - but in the over-all scheme of things these small (and not so small) issues do mount up. It turned into a bit of a mega-rant at the end and the language deteriorates as it goes ...

First, the existing google news as I see on this laptop ... starting with the top of the page:

And then the middle of the page:

First thing: Yes I (very much) like to use Bitstream Vera Sans as my font for everything: coding, and reading documents. And even then, only 1 specific size works the best (not being able to do this is the single specific reason I won't even bother to try Chrome). So all you designers painstakingly choosing your typefaces and font sizes: you're wasting your time, if one can't read the information it is worthless. Most sites actually work fine with this, although a few have some minor formatting issues (mostly text overrunning the bottom of iframes).

And secondly I do have a crappy 1024x768 IBM laptop screen. Although few laptops have resolutions to match anymore, plenty of phones, netbooks and iLandfill slabs don't even get this far.

Ok, now on to the layout. There is still a big wasted load of space on the left that they added in the last major layout update, but basically most of the page is used for information content. Each story has a few alternative links from common (and sometimes not so common) news sources, an email link, and at most a single picture. Mouse-overs (at least today) are restricted to highlighting the link, which is about the most I'd like any browser to do with them.

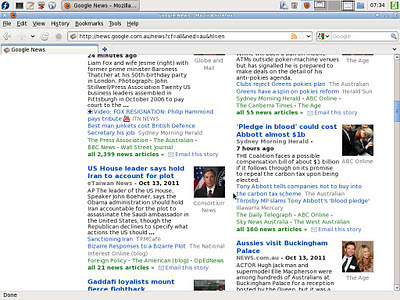

Now, to my surprise, I was greeted with the following page when I opened google news on my other laptop this morning:

Hmm, something doesn't look right. First, everything is in one column. A huge chunk of wasted space on both the left and the right now. And what's more, the real killer feature of google news - at a glance being able to see the 'feel' of the media reporting of the news story is conspicuously absent. There is only a single link to a single news source.

Actually I couldn't work out how to find anything more than that: I normally browse with Javascript disabled on that machine - because I don't like my lap burning, nor fæcebook to know where my mouse is whilst I'm reading a news article on an unrelated site - and all you end up with is a single link.

Enabling javascript and reloading, and I discovered a huge pile of annoying mouse-over shit (AMOS).

So, now you actually have to click an ugly button to bring this stuff up. Hoorah, now we have popup-pox infesting web pages too, just loverly[sic].

And on-top of that it's now somewhat more difficult to decipher - it is trying to add extra information to the other links beyond their titles. Do I really care that it is an opinion link? Or why the special notoriety of articles "From the United States [of America]"? Is their opinion somehow more important?

And apart from that, there's rubbish like a fæcebook, twatter, and plus-one button in addition to the email link, and 3 video links in addition to the picture. Clutter.

So ... I did a search and apparently the cog button is the settings icon these days. Who knew ... (actually I thought it was some logo, not a cog for that matter: it looks more to me like a high-contrast themed variation of the xfce main menu button) Of course, none of the buttons function if you have Javascript turned off ...

So to the rather bare settings. 1 or 2 columns, and auto-refresh. Færy-nuff, let's try ...

Oh hang on. That looks broken. Why would anyone possibly want to read the site that way? Not to mention more AMOS to 'enhance the experience', and the same big blank section on the right.

At least the killer-feature reporting-at-a-glance is back, but there's just no way anyone would labour through such a horrible interface for that.

Oddly enough ... if you disable javascript ...

You get the right-hand side-bar back, and thankfully the AMOS disappears as well.

Well almost ...

For some reason the top of the page has this non-functional news selection slider thing stuck to it.

Thoughts

I can only think that google has a particular idea in mind here: if you're not using a 24" widescreen monitor, then you must be using a phone or some iLandfill toy. Although that doesn't completely make sense since the new site would be even more useless on phones so they must have 2 separate stylesheets/designs for each one anyway. So why fuck it up so royally?

More and more of the web requires javascript - whilst usually using it for pointless crap like implementing buttons in a non-recognisable os-agnostic way (those damn designers again, thinking they can redefine 30 years of progress in human-computer interaction on every page). I find this whole idea of javascript everywhere very questionable security-wise - a web page can load a 3rd party application which can then send information (e.g. where your mouse is) to any other 4th party without your knowledge. And hence more and more web pages are being turned into 'crapplications'. They're slower, uglier (and certainly not 'theme aware'), and clumsier than local applications, but they're much heavier cpu and data wise compared to remote ones. It also closes off the avenues for using alternate browsers: having to have a very high performance rendering engine and javascript vm is a massive barrier to entry (e.g. even firefox 3.6 is ruled out of many sites now).

Welcome to the 3rd age of thick-client computing. All the local computing power required to run local applications, combined with the speed, grace, availability and security of remote ones. Oh boy! Hold me back!

No news is good news?

On a personal note I've been trying to avoid reading the news too much anyway, google news included. It's always the same old shit. It's mostly depressing, or at best it's just click-bait to rile you up. And google news's aggregation algorithms are pretty much like watching TV based on the ratings: not the sort of experience I'm really after. For example, apparently `funniest home videos' is the most popular show in Australia? Do I really want the bogans who watch channel 9 deciding what news makes the front page (depressingly the truth is of course that yes, they already do). With such an ignorant population, no wonder 'no more boats' is an (almost) winning election slogan around these parts, or that the global warming denialists get so much airtime. A timely reminder - and exactly what I thought the first time I saw the advert with the sound not muted (which is how I watch advertising if I'm watching 'live' tv, although my tv mute button wore out ...).

Still, I do like to check at least once every couple of days - lest I become one of the ignorant masses if nothing else. Or to fill a spot to give my brain a rest, or whilst waiting for a routine to run ... Unfortunately now that I use Java there's no more waiting around for compilation - the 50KLOC bit of code I work on compiles and launches the application from scratch in about 1/2 a second (ant doesn't include resources properly in the jar without a clean rebuild - and building jars is the single terribly weak fucking reason to justify its utterly shit and astronomically painful fucked up existence - so I have to do it every time when working on opencl code. Fucking adjective!).

I guess I can use fairfax for the little Australian news I'm after (democratic politics died for me when Howard went to war, and without that what is the point of listening to those arseholes - and without the politics there's fuck-all left), The Guardian for Europe, and summaries or links in a few blogs I visit will do me from now on. I gave up on The ABC months ago - which should really now just be called `The Opposition Says Sydney-Siders Gazette'. Even SBS TV news has been shit for ages: since they cut their budget it's little more than a patchwork of cheap stories from other services (many barely trying to hide themselves from the happy-story pro-war/pro-usa propaganda they are, like some of the BBC stuff from iraq/afghanistan).

Barely any of the services do any local news at all. Most of it is broadcast/published straight out of Sydney or Melbourne. Not that much of import happens around here, but sometimes you do need to know about local stuff.

One thing google news showed me (until now) is just how much of the news is the exact same story repeated ad nauseam, so at least I know I won't be 'missing out' on anything by not using it.

Copyright (C) 2019 Michael Zucchi, All Rights Reserved.

Powered by gcc & me!