About Me

Michael Zucchi

B.E. (Comp. Sys. Eng.)

also known as Zed

to his mates & enemies!

< notzed at gmail >

< fosstodon.org/@notzed >

Is VR really a good idea?

It looks like the technology is just about there to create affordable and usable 'virtual reality' hardware for the general public: but as with many other technological advances one has to ask whether the technology is ahead of society's ability to cope.

TV is already a pretty good conversation killer and mobile phones have become little cones of isolation even when "socialising" with friends or family, so how will it play out if your whole field of view (and hearing?) is encased in a helmet?

I can see a lot of aggro from brothers and sisters fighting over the one head-mounted display that, for the time being, these things will only be able to support. And some angry mums when little Johnny or dear little Alice won't come out of their bedroom for dinner because they can't even hear the calls (and god knows what they're up to in there). And some pretty boring get-togethers with mates around the TV getting sea-sick watching the view from one player's eyes.

Another more disturbing factor that will play into it is the continual fine-tuning of the Skinner box trade - games which are pretty much just poker machines / gambling devices for extracting money from the vulnerable. Since those are making such a fuck-ton of money at the moment they are only going to get worse. If people can already get lost in a tiny screen on a phone, how will they cope when they're shut off completely from the outside world? The scope for manipulation of vulnerable or susceptible people is enormous. It's easy to blame people as being weak-minded but it's not entirely their fault: they are being manipulated without even knowing it, and intentionally so, by manipulators who know what they're doing.

Or propaganda and religious / ideological indoctrination, both scourges of right-thinking citizens everywhere - all while cut off from immediate self-correcting factors like someone telling you what a dickhead you are.

As an aside, I wonder when Hollywood became such an obvious propaganda front for the neocon/zionist agenda? One of the recent Transformers movies was on TV the other night and I couldn't get over just how blatantly propagandist it was at every level: an [unemployed] 'nerd' who saves the day, with a super-model girlfriend, with happy middle-class parents, with nothing but leisure to keep them occupied, government secret organisations [being a good thing, by] protecting the [whole] world from bad stuff [that usually happens in the Middle East], to an advanced alien race only wanting to deal with the USA [who are obviously the good guys], even to some [crazy] conspiracy nut not only being believed by everyone but also being incredibly wealthy. I guess we had some of that shit in the 80s and 90s but at least we had some stuff to counter it too (and a bit of fucking humour) and now it's just so overt it's bordering on sick. Although it's been an undercurrent for some time, I suppose it was around the turn of the century that it really took off so brazenly, taking advantage of public malleability at the time. And the really sick part is they get upset when people don't want to pay to be advertised at and brainwashed (or maybe the sick part is that people want to go to the effort to get it in the first place). But I digress ...

Back to the VR stuff: potential health issues. Spending many hours staring at a screen with a fixed focal distance can't be good for your eyes. Modern lifestyles are already sedentary enough and the lack of external vision enforces this even further, as you don't have a choice but to sit while doing it unless you live in a rubber room. And if socially retarded people (like me) can already get caught up in reading books or hacking code well into the next morning, what's going to happen when you can't tell if it is night or even know where you are? I wonder how long until someone dies using one? Or loses their job/fails at school because they'd rather spend time outside IRL, because let's face it, IRL pretty much sucks for most people at least some of the time. Although it's not like both of these don't already happen with existing technology.

And imagine not being able to skip adverts or mute them - or even look away from them? That's nightmare material.

There are some potentially interesting non-game uses that spring to mind which seem to contradict some of these points, such as remote communication or stuff for the mobility-impaired (whether through age or disability), and others such as training. But most of these will be short-term or irregular.

But overall I'm just not sure about the whole idea for entertainment itself. It could be totally bloody awesome or it could be the beginning of the end for Western civilisation (civilisations never last forever ...). Ok, probably not the latter, but there are big issues beyond the technical capability itself, and beyond those explored by some laughably fantastical stories by Neal Stephenson.

It'll certainly be interesting for a while anyway - a new area of technology to explore. Don't get me wrong, there are some really exciting possibilities for games and other uses that I'm looking forward to trying out one day. But in the end there may need to be mandatory breaks, minimum age restrictions, ambient input (e.g. external cameras or see-through screens/windows) or other tweaks just to protect people from themselves.

I guess we'll see in about a decade, assuming the experiments in the next few years become commercially successful.

The weirdness of mass-market economics, m$l vs psn.

So this is about the PlayStation Network on the PS3 vs Microsoft's Xbox Live on the Xbox/Xbox 360, for on-line games and other on-line services.

On paper it seems like a no-brainer: zero-cost on PSN for multi-player games and all of the internet based services the XMB provides vs a yearly subscription for microsoft multi-player games and all of the internet based services they provide (even those which already require a subscription?). Oh and the one you pay for is the one full of

fucking

adverti$ing???

M$ are totally nuts right - surely everyone would just go with PSN?

Funny that.

Except, once people start paying for stuff they just assume it's "better" (and I'm sure a bit of viral/illegal marketing helped that idea take hold as well) and will argue as such to their death. Apart from that, once you pay for something the whole "you may as well use it" factor comes in: people will naturally want to use the paid-for service rather than the free one, network effects start to kick in, and suddenly everyone is using the paid service for all multi-player games, with their own best interests taking second place.

So it's kind of sad ... that the only way Sony could possibly counter this is by just charging for their service too - as they have with the PS4. Very few people will want to pay for both (and to be honest, you'd have to be a bit thick in the head if you did) and so it forces everyone to make a choice. This breaks up the advantage of the network effects, and although some will stick to what they know and are already paying for, the magic factor for one vendor instantly evaporates. By being that insignificant bit cheaper and leaving some services free anyway (not to mention the games) Sony are instead creating a positive network-effect in their favour and thus under-cutting years of effort by M$. (Apart from, in the case of the PS4 vs the xbone, actually creating a games machine and not a gimped water-cooler/us-only-tv/football thing.)

"master-stroke"?

Still, it's kinda dumb that the only way to fight a paid service is to charge as well and free just can't compete. Somewhat fucked, more than just dumb.

On a side note, I did start with PS+ a few months ago myself but it was just for the games - I have zero interest in online multi-player and don't have a PS4 anyway (SotC+Ico-HD pushed me over, although TBH I couldn't get into the former and forgot how to play the latter - even though I've previously finished it on a borrowed copy). I stopped buying games about 18-24 months ago, so there's been a constant stream of good games that I never played but thought I might one day. Many are ones I won't ever like or bother with, but there's enough to keep me going for a few years already at the rate I play (and some I never even saw in the shops here at the time and certainly can't be found there now).

Paying for this type of subscription service pretty much goes against every free software (and otherwise) bone in my body ... but for me it's not even an hour's labour and less than 2 cartons of piss, and in Australia it's substantially less than a single full-priced game, so from a personal perspective it's pretty much insignificant. When my utility bills and rates are hitting around $8K/PA these days it somewhat changes how you think of anything under a grand (fortunately I have no mortgage, ooo-yeah!). Although with a small garden, a good freezer, and a bit of smart shopping, decent food is still cheap as shit here in Australia, so those high costs aren't as bad as they sound - although it was a bit of a one-off, I'm currently going through a second vac-pac whole rump that only cost about $4/kg (~$2/lb). It's no $30/kg steak but it's great for curries and stir-fry (and the cat) and I don't mind having to use my teeth occasionally anyway.

The main (rather big) downside is that I can't share the games with friends.

I guess I'll see if they end up adding adverts. That is my new bug-bear at the moment: pretty much all investment today is based on something as stupid and non-productive as advertising dollars. I can deal with a certain amount but it's so overbearing now that it makes browsing the web or watching TV, well, unbearable. Google, I'm looking at you.

Voxel heightmap, dla

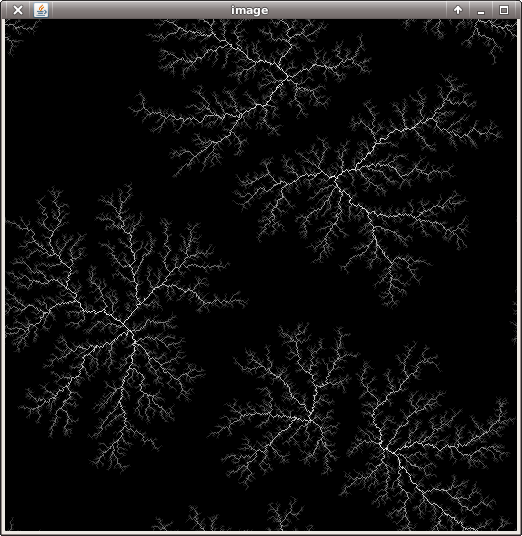

I've been mucking about a bit with the voxel stuff some more, trying some terrain generation. I first cooked up something from memory but it wasn't correct, tried Perlin noise but wasn't really happy with it, and then I came across this post about terrain generation using Diffusion Limited Aggregation (DLA) to generate mountain ranges.

Partial solution.

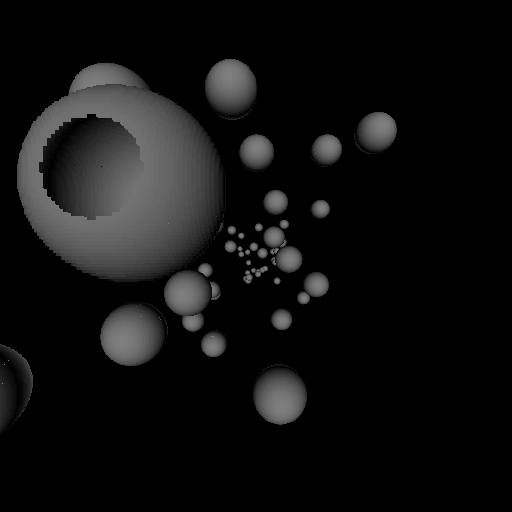

And the final result of the basic DLA. This version is cyclic.

The basic algorithm is very simple:

```
Node[] nodes; // grid of nodes
int[] map;    // grid of points

randomly seed Ns locations in nodes
total = Ns;

// until all locations are visited
while total < width*height
    x = random(width);
    y = random(height);
    if (nodes[x,y] != null)
        continue;
    fi

    // randomly walk until something is hit
    hit = false;
    while !hit
        // save location we came from
        lx = x;
        ly = y;

        // walk to next location
        (x,y) = randomly move by 1 cell in a compass direction
        if (cyclic)
            // wrap around: the masks assume width and height are powers of two
            x = x & (width-1);
            y = y & (height-1);
        else if (out of range)
            break;   // walker fell off the edge: discard it
        fi

        // check for attachment point
        n = nodes[x,y]
        if (n != null)
            hit = true;
            total += 1;
            n = new node(n, lx, ly);   // attach at the cell we came from
            nodes[lx,ly] = n;
            n.visit(map);
        fi
    wend
wend
```

Where:

```
Node {
    Node parent;
    int x, y;

    // add 1 to this cell and every ancestor up to the seed
    void visit(int[] map) {
        map[x,y] += 1;
        if (parent != null)
            parent.visit(map);
    }
}
```

I'm then showing log(map).
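For anyone wanting to play with it, here is a minimal runnable C sketch of the cyclic variant of the pseudocode above. It is not the original code: the parent-index array standing in for Node objects, the xorshift random generator, and the names `dla()`, `visit()` and `rng_next()` are all assumptions made for illustration. Width and height must be powers of two for the wrap-around mask.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>

#define EMPTY (-2) /* cell not yet part of any tree */
#define SEED  (-1) /* root of a tree: has no parent */

/* Tiny xorshift32 PRNG so runs are reproducible (any RNG would do). */
static uint32_t rng_next(uint32_t *s) {
    uint32_t x = *s;
    x ^= x << 13;
    x ^= x >> 17;
    x ^= x << 5;
    return *s = x;
}

/* Node.visit() as a loop: add 1 to cell i and every ancestor up to its seed. */
static void visit(const int *parent, int *map, int i) {
    for (; i >= 0; i = parent[i])
        map[i] += 1;
}

/* Cyclic DLA over a width*height grid (both powers of two).
 * parent[i] holds EMPTY, SEED, or the index of the cell that i attached to. */
static void dla(int width, int height, int nseeds, int *map, uint32_t seed) {
    assert(width > 0 && (width & (width - 1)) == 0);
    assert(height > 0 && (height & (height - 1)) == 0);
    int n = width * height;
    assert(nseeds >= 1 && nseeds <= n);

    int *parent = malloc((size_t)n * sizeof *parent);
    assert(parent != NULL);
    for (int i = 0; i < n; i++) {
        parent[i] = EMPTY;
        map[i] = 0;
    }

    uint32_t s = seed ? seed : 1; /* xorshift state must be non-zero */
    int total = 0;
    while (total < nseeds) { /* place the seeds on distinct cells */
        int i = (int)(rng_next(&s) % (uint32_t)n);
        if (parent[i] == EMPTY) {
            parent[i] = SEED;
            total++;
        }
    }

    while (total < n) { /* until every cell has joined a tree */
        int x = (int)(rng_next(&s) % (uint32_t)width);
        int y = (int)(rng_next(&s) % (uint32_t)height);
        if (parent[y * width + x] != EMPTY)
            continue;
        for (;;) { /* random walk until an occupied cell is hit */
            int lx = x, ly = y;
            switch (rng_next(&s) & 3) {
            case 0: x++; break;
            case 1: x--; break;
            case 2: y++; break;
            default: y--; break;
            }
            x = (x + width) & (width - 1); /* wrap around (cyclic) */
            y = (y + height) & (height - 1);
            int hit = y * width + x;
            if (parent[hit] != EMPTY) {
                int i = ly * width + lx; /* attach at the cell we came from */
                parent[i] = hit;
                visit(parent, map, i);
                total++;
                break;
            }
        }
    }
    free(parent);
}
```

For display, something like log(1 + map[i]) matches the log(map) view; the +1 avoids log(0) for seeds that never grew any children.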

It certainly has some nice 'erosion'-like shapes, and can also make some nice lightning. I also experimented with something based on physical erosion but that didn't really pan out.

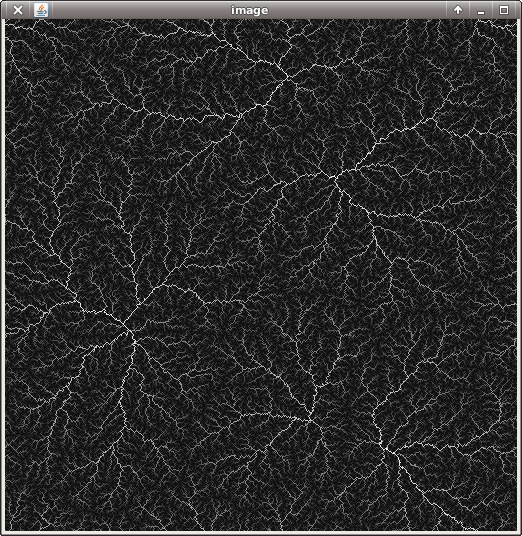

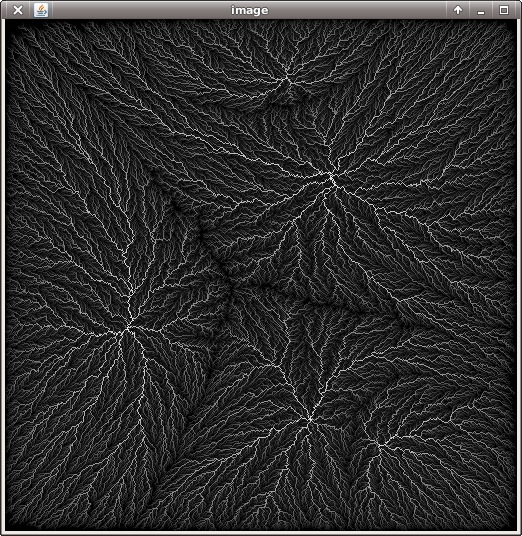

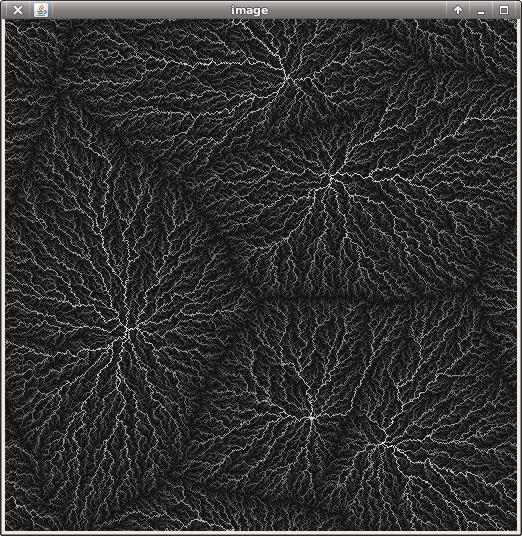

This algorithm grows the seed points like a crystal and, because the search space is quite big, it is rather slow to get going on larger images, although it really races to the end (the first image above was about 15 seconds in, the second was 2 seconds later). I tried a few variations to speed this up:

- Using random line segments

  This is much much faster but the result has fewer "fiddly bits" and is more strung out.

- Randomly choosing an existing node from which to grow a new point

  This is faster at the start but slows down as it starts to randomly choose nodes which have nowhere to go. It also produces a more regular shape more akin to mould growth, which is not really very mountainous.

It might be possible to play with how it chooses the growth points with this one, both to speed it up and tune the shapes it generates. I've experimented a little bit but didn't have much luck so far.

Unfortunately the pixel-scale of everything is a bit too detailed, so I need a way to scale it up without losing the intricacies. I haven't tried the accumulation of multiple blur radii from the link above yet. That should be able to upscale at the same time too.

But yeah, right now it just isn't grabbing me enough to really get into it - but then again nothing is atm. Blah.

Voxels

Just playing around with a 3d implementation of the ray casting in the previous post.

Fly-through video here. All spheres have a radius of 30 voxels.

I'm just using abs(normal.z) as the pixel value and a very simple projection. This version uses doubles and there are some rounding/stepping errors present. I changed the maths slightly so that the stepping is parametric rather than on x/y/z, which simplifies the code somewhat.
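The post doesn't include the code, but parametric stepping of this kind is in the spirit of the well-known Amanatides and Woo voxel traversal. Below is a sketch of that standard technique in C, over a flat n*n*n occupancy grid rather than a tree; the grid layout, the `raycast()` name and its signature are assumptions for illustration. Each axis keeps the ray parameter t at which it next crosses a cell wall, and the ray always advances along the axis with the smallest t.

```c
#include <assert.h>
#include <float.h>

/* floor() for doubles without needing libm. */
static int ifloor(double v) {
    int i = (int)v;
    return (i > v) ? i - 1 : i;
}

/* March the ray o + t*dir through an n*n*n grid, vox[(z*n + y)*n + x] != 0 = solid.
 * Returns 1 and the solid cell in hit[] on a hit, 0 if the ray leaves the grid.
 * *last_axis (if non-NULL) gets the axis of the last wall crossed, i.e. the axis
 * the hit face's normal points along, or -1 if the ray started inside a solid cell. */
static int raycast(const double o[3], const double dir[3], int n,
                   const unsigned char *vox, int hit[3], int *last_axis) {
    assert(dir[0] != 0.0 || dir[1] != 0.0 || dir[2] != 0.0);
    int cell[3], step[3], axis = -1;
    double tmax[3], tdelta[3];
    for (int a = 0; a < 3; a++) {
        cell[a] = ifloor(o[a]);
        if (dir[a] > 0) {        /* t at which the ray reaches the next wall above */
            step[a] = 1;
            tdelta[a] = 1.0 / dir[a];
            tmax[a] = (cell[a] + 1 - o[a]) / dir[a];
        } else if (dir[a] < 0) { /* ... or the next wall below */
            step[a] = -1;
            tdelta[a] = -1.0 / dir[a];
            tmax[a] = (cell[a] - o[a]) / dir[a];
        } else {                 /* never crosses a wall on this axis */
            step[a] = 0;
            tdelta[a] = tmax[a] = DBL_MAX;
        }
    }
    while (cell[0] >= 0 && cell[0] < n && cell[1] >= 0 && cell[1] < n &&
           cell[2] >= 0 && cell[2] < n) {
        if (vox[(cell[2] * n + cell[1]) * n + cell[0]]) {
            for (int a = 0; a < 3; a++)
                hit[a] = cell[a];
            if (last_axis)
                *last_axis = axis;
            return 1;
        }
        /* step along whichever axis reaches its next wall first */
        axis = 0;
        if (tmax[1] < tmax[axis]) axis = 1;
        if (tmax[2] < tmax[axis]) axis = 2;
        cell[axis] += step[axis];
        tmax[axis] += tdelta[axis];
    }
    return 0;
}
```

Because t is shared by all three axes, the same loop handles any ray direction without special-casing which of x/y/z is the major axis.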

It's pretty slow on my laptop, about 1 frame/s, although it doesn't really matter how deep the volume is - this one is 4096x512x512. Not that it's optimised in any way mind you.

At some point I do intend to see how it would run on the Epiphany ... but right now I'm too lazy! The main bottleneck will be the tree traversal for each ray, although I think in practice quite a lot of that can be cached, which would be a big speed-up. Other than that it could be a good fit for the Epiphany given how small the code is and that only a single data structure needs to be traversed.

TBH I'm not sure if it's ever going to be practical for anything because of the memory requirements, even if it can be made to run fast enough. *shrug*

Bresenham(ish) line, skipping, raycasting.

Haven't been up to much lately - just trying to have some holidays. I have been tentatively poking at some ideas for raycasting. Not that I have any particular idea in mind, but it might be something to try on the Parallella.

Part of that is to step through the cells looking for ray hits, which is where Bresenham's line drawing algorithm comes in. I'm working toward a hierarchical data structure with wider branching than a typical quad/oct tree, so I need to be able to step in arbitrary amounts and not just per-cell - and fairly cheaply.

I don't really like the description on Wikipedia, and although there are other explanations I ended up deriving the maths myself, which allows me to handle arbitrary steps. Unfortunately this does need a division, but I can't see how that can be avoided in the general case.

If one takes a simple expression for a line:

$$y = mx + c$$

$$m = \frac{dy}{dx} = \frac{y_1 - y_0}{x_1 - x_0}$$

And implements it directly using real arithmetic assuming x increments by a fixed amount:

```
dx = x1-x0;
dy = y1-y0;
for (x=0; x<dx; x+=step) {
    y = round((real)x * dy / dx) + y0;
    plot(x0 + x, y);
}
```

Since it steps incrementally the multiply/division can be replaced by addition:

```
incy = (real)step * dy / dx;
ry = 0;
for (x=0; x<dx; x+=step) {
    y = round(ry) + y0;
    plot(x0 + x, y);
    ry += incy;
}
```

But ... floating-point numbers aren't 'real' numbers, they are just approximations, so the repeated addition accumulates rounding errors, not to mention the rounding and type conversions needed.

Converting the equation to using rational numbers solves this problem.

```
a = dy * step / dx;       // whole part of step * dy / dx
b = dy * step - a * dx;   // remainder, 0 <= b < dx (assumes dx > 0, dy >= 0)
ry = 0;                   // whole part of y
fy = 0;                   // fractional part of y, in units of 1/dx
for (x=0; x<dx; x+=step) {
    y = ry + y0;
    plot(x0 + x, y);
    ry += a;              // increment by whole part
    fy += b;              // tally remainder
    if (fy >= dx) {       // is it a whole unit yet?
        fy -= dx;         // carry it over to the whole part
        ry += 1;
    }
}
```

Where the exact y offset is ry + fy / dx (whole part plus fractional part).

For rendering into the centre of pixels one shifts everything by half a pixel, i.e. dx/2 in the units of fy, but this cannot be accurately represented using integers when dx is odd. So instead the carry test on the fractional part is moved to the half-way point (fy > dx/2) and both sides are multiplied by 2, which leads to:

```
if (fy * 2 > dx) {
    fy -= dx;
    ry += 1;
}
```
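The centred carry test can be checked in one line. Assuming dx > 0 and dy >= 0 as before (so 0 <= b < dx), and writing the exact y offset as ry + fy/dx:

$$
r_y + 1 \text{ is nearer than } r_y
\iff \frac{f_y}{dx} > \frac{1}{2}
\iff 2 f_y > dx
$$

After each step, carry or not, fy stays within (-dx/2, dx/2], so ry on its own is always the exact y offset rounded to the nearest pixel, with exact halves rounding down.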

This suits my requirements because step can be changed at any point during the algorithm (together with a newly calculated a and b) to step over any arbitrary number of locations. It only needs one division per step size.
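A small runnable C sketch of the pixel-centred version with a changeable step, assuming dx > 0 and dy >= 0 (other octants would need the usual sign/axis swaps). The `LineStep` struct and the `line_*` function names are hypothetical; after each advance, ry is the rounded y offset from y0.

```c
#include <assert.h>

/* Exact y offset from y0 is ry + fy/dx; fy is kept in (-dx/2, dx/2]
 * so that ry alone is y rounded to the nearest pixel centre. */
typedef struct {
    int dx, dy; /* line extent, dx > 0, dy >= 0 */
    int ry, fy; /* whole part and remainder (in units of 1/dx) */
    int a, b;   /* per-step increment: step*dy == a*dx + b, 0 <= b < dx */
} LineStep;

static void line_init(LineStep *s, int dx, int dy) {
    assert(dx > 0 && dy >= 0);
    s->dx = dx;
    s->dy = dy;
    s->ry = s->fy = 0;
    s->a = s->b = 0;
}

/* Change the step size at any point: one division per step size. */
static void line_set_step(LineStep *s, int step) {
    assert(step > 0);
    s->a = s->dy * step / s->dx;
    s->b = s->dy * step - s->a * s->dx;
}

/* Move x on by the current step and update the rounded y offset. */
static void line_advance(LineStep *s) {
    s->ry += s->a;           /* whole part */
    s->fy += s->b;           /* tally remainder */
    if (s->fy * 2 > s->dx) { /* past the half-way point: carry */
        s->fy -= s->dx;
        s->ry += 1;
    }
}
```

Mixing step sizes (say one step of 8, then single steps) lands on exactly the same pixels as single-stepping all the way, because every advance adds exactly step*dy/dx to ry + fy/dx.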

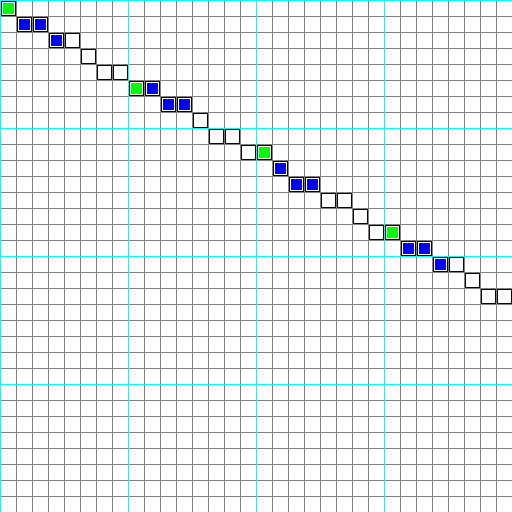

The black outline is calculated using floating point/rounded, and the filled-colour dots are calculated using the final algorithm. The green are calculated by stepping 8 pixels at a time and the blue are calculated by starting at each green location with the same (y = ry + fy/dx) and running the equation with single stepping values for 3 pixels.

(I did this kinda shit coding on the Amiga 20 years ago; it's just been so long, and it's so far outside what I normally hack on, that I always need a refresher when I revisit things like this.)

Unfortunately for raycasting things are a bit more involved so I will have to see whether I can be bothered getting any further than this.

Update: So yeah, after all that I realised it isn't much use for raycasting anyway, since you start with floats from the view transform - converting these to integer coordinates will already be an approximation. And since a hardware float multiply is just as fast as a float add, I may as well just use the first equation with floats and pre-calculate dy/dx, so any rounding errors will be consistent and won't accumulate.

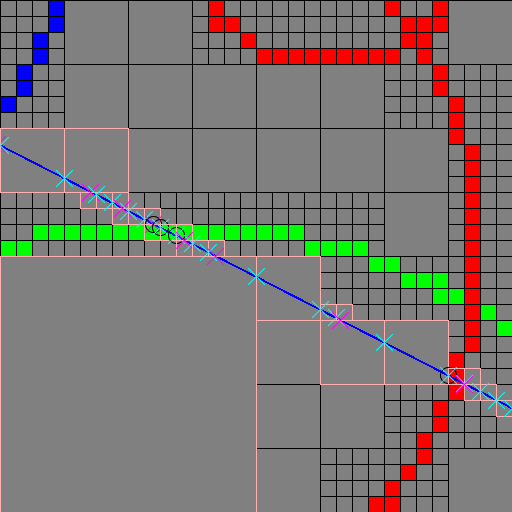

So here's a plot of a 2d ray through a 16-way (4x4) tree. Cyan X is when it hits a vertical cell wall, purple X is when it hits a horizontal cell wall, and black circle is when it hits a non-transparent leaf cell. The black boxes are the nodes and leaf elements in the tree, and the pink boxes are those which are touched by the ray. I just filled the tree with some random circles.

The code turned out to be pretty simple and consists of just two steps:

First the location is converted to an integer (using truncation) and that is used to look up into the tree. Because each level of the tree is 4x4, 2 bits each of x and y are used at each level, so the lookup at each level is a simple bit shift and mask. If the given cell is in a non-transparent leaf node it is a hit.

Then it calculates the amount it has to step in X to get beyond the current cell bounds: for a ray heading in +x and +y this is simply MIN(cell.x + cell.width - loc.x, (cell.y + cell.height - loc.y) * slopex), where slopex = dx/dy. The cell size is calculated implicitly from the node depth.
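The two steps can be sketched in C as follows. This is an illustration, not the original code: the `Node` layout (a leaf flag, a solid flag and 16 child pointers indexed as (cy << 2) | cx), the function names, and the +x/+y-only direction in `step_out()` are all assumptions.

```c
#include <assert.h>

/* Hypothetical 16-way (4x4) tree node. */
typedef struct Node {
    int leaf;               /* 1 = leaf, 0 = interior node with 16 children */
    int solid;              /* leaves only: non-zero if the ray should stop here */
    struct Node *child[16]; /* interior only: child[(cy << 2) | cx] */
} Node;

/* Step 1: find the leaf containing integer cell (x, y). The root covers
 * 4^depth cells per side; each level consumes 2 bits of x and 2 of y.
 * The leaf's cell width (implied by its depth) is returned in *size. */
static const Node *tree_lookup(const Node *n, int depth, int x, int y, int *size) {
    int shift = 2 * depth; /* log2 of the current node's width */
    while (!n->leaf) {
        shift -= 2;
        int cx = (x >> shift) & 3, cy = (y >> shift) & 3;
        n = n->child[(cy << 2) | cx];
    }
    *size = 1 << shift;
    return n;
}

/* Step 2: how far x must advance to leave the cell at (cellx, celly) of the
 * given size, for a ray moving in +x and +y with slopex = dx/dy. */
static double step_out(double cellx, double celly, double size,
                       double locx, double locy, double slopex) {
    double to_right = cellx + size - locx;          /* reach the right wall */
    double to_top = (celly + size - locy) * slopex; /* reach the top wall */
    return to_right < to_top ? to_right : to_top;
}
```

With power-of-two cell sizes the cell origin needed by the second step is just the integer location masked down, e.g. cellx = x & ~(size - 1).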

The 2d case isn't all that interesting to me but it's easier to visualise/test.

Hmm, on the other hand this still has some pesky edge cases and rounding issues to deal with so perhaps an integer approach is still useful.

GT6 is the best yet (so far)

I bought a few games recently but have been too lazy to even start them up; one of them was Gran Turismo 6, which I just had a go with tonight.

So far for me it seems like the best Gran Turismo yet - and I haven't really liked any since 3 (my first one). GT4 was just confusing, and GT5 just wasn't very fun - that's not even counting the weird menus and loading times. I think with that one Polyphony might've forgotten they were making a game for the general public and not just themselves.

The graphics aren't really any different to 5 (not a bad thing) but I really don't understand why Polyphony keep insisting on upping the resolution - the hardware just can't do 1080 pees at that frame rate and the tearing they resort to is simply unforgivable (haven't they heard of triple buffering??). I force my display to 720p, which makes it go away for the most part, and the lack of tearing more than makes up for any loss of resolution, which is barely noticeable from the couch anyway. The blocky/shimmering dynamic shadows are also a bit more than the hardware can deal with, but the dynamic time and weather they enable make it an acceptable trade-off. I only drive using the bonnet-cam so I couldn't care less about the internal car modelling, and TBH GT3's cars looked good enough from the arse-end, which is the only thing you can see when racing. I don't really mind GT's sound engine or even the music for the most part either.

The menus are mostly better, apart from the tuning screen with its expanding tabs. They're much quicker too, and global short-cuts let you change or re-tune your car anywhere rather than having that shithouse partitioned garage from GT5 (where standard cars are segregated). A weird niggle is that it doesn't remember some of the driving options when you change cars. Collisions are still modelled on a plastic canoe, but I suppose that's better than just ending the race in a shower of broken glass and burning rubber as a true simulation would demand, or god forbid adding some "sands of time" to rewind.

The introduction to the game (first few races, not the movie) is more gentle and the annoying license tests are a lot easier and fewer in number. Having the racing line from your friends pop up is also a nice touch - "asynchronous online" could work very well for this game and I think could well be the killer-app for Drive Club if it can reach a critical mass. The economy seems to be more newbie-friendly too - GT5 had me re-running shit-box races over and over just to be able to afford a couple of upgrades to beat the next shit-box race. So far I've only re-run races to get a better time; for the first few races it only takes one or two tries, and by then I had enough credits to upgrade a bonus car to finish all of the novice class races easily. A couple of the current seasonal events give out nice cars for little effort too, which is more than enough to get established, and even the shit-boxes have a bit more get up and go, which makes the early races less of a chore. Apparently it has micro-transactions although I haven't seen where.

I guess the most important part - the driving - just feels way more "fun". I think the tyres just feel a bit grippier and don't overheat as much or for as long. It seems more possible to recover from a loss of traction rather than just turning into an uncontrollable slide/spin. And you can finally roll cars - no more weird elastic which keeps the car from flipping over. I think they toned down the slipperiness of the non-road surfaces for the beginner cars and maybe they tweaked the learning curve a bit to make these more enjoyable to drive. I know it's supposed to be a simulator but it definitely is a game first.

Despite the number it feels like a more rounded game than any of the recent ones and would be the best entry point to the series I've tried. It doesn't take the 'simulator' sub-title so seriously that it ruins the game part of the game.

And yeah ... Bathurst ... at night.

Update: Been putting in a few hours every few nights lately. Yeah the economy is way better than GT5, I somehow ended up with 1Mcr from some 90 second time trial in the seasonal thing. So much less (actually none) grinding needed compared to GT5 - thank fuck for that.

Some of the tracks suck a bit, but that's just the track, e.g. Silverstone or the Indy track: too flat, and the actual track doesn't always follow the marked lines on the road, so you have to follow the map or you run off (a pain on a time trial if you're not familiar with the track, since a run-off == disqualify). And the tracks being so sucky puts you off wanting to learn them. OTOH some of the tracks are pretty good. I did a lot of grinding in GT5 on Rome with the 4x4 seasonal truck race so that's become a bit of a favourite, I always liked Trial Mountain (that straight up the tunnel), and there's really nothing at all like Bathurst at dawn or dusk. During the race there's some weird dithering on trees, and on replays you notice how they are low-depth sprites, but given they fit the whole forest on there it's pretty forgivable. At least the gum trees actually look like gum trees too - which is pretty rare in racing games given how distinctly different they appear to other trees - and the Milky Way at night is pretty impressive from the southern hemisphere. It's a pity they don't have the original layout before they added the dog-leg at the bottom of Conrod Straight, but I guess 'licensing' wouldn't allow that. The Matterhorn is also quite a bit of fun in a light nimble car, although it's pretty easy to over-cook it on the down-hill bits.

One annoying thing is that it only runs in NTSC timing - no 50Hz output on HDMI. This is pretty sucky because the extra ~3.3ms per frame (20ms vs 16.7ms) would help with the screen tearing a great deal, and I just don't see why we need to be subjected to some legacy timing from an ancient American video standard. I know I'm probably going against the grain of 'gamers' who think otherwise, but they are just ignorant and ill-informed.

Hopefully the seasonal events start including races - time trials are pretty boring and it starts to become 'not a game' when one simple mistake requires a whole lap to fix. Although at least for the car prizes you only need to do a single clean lap - regardless of the time - to win the cars. If it was the first GT ever I could understand all the time trials to some extent - it's a bit of a training exercise to be able to drive properly - but it's no.6 (+ all the prethings) in the series.

I was pretty surprised to see how Forza on the xbone uses PS2-era rendering techniques - fixed shadows (actually even worse, all shadows project directly down from the videos I've seen), fixed time of day, no weather, really basic textures and 2D crowds (oh dear). Because the views in car games are quite predictable they are always able to push better-than-average graphics at high frame-rates, and the car models are getting so detailed they seem to be at a point of diminishing returns. But I think there's an awful lot left for future games; GT already has weather and dynamic shadows, and driveclub improves on the shadows and adds 3D trees and volumetric clouds. Future games could add procedurally created seasons or even dynamically created landscapes that grow and age, better water modelling (puddles, flowing water, and not just 'wet'), not to mention VR stuff. I think the car models are good enough (more than good enough TBH), but there are still enough possibilities that no amount of FLOPS will ever exhaust them.

Original version PS3, dust, cost.

A few weeks ago I was bored one afternoon and thought my PS3 was making a bit too much fan noise, so I opened it up to clean it.

Boy, what an involved process. So many screws. So many ribbon connectors. Ended up being a whole afternoon operation.

I followed a video of a guy taking one apart on YouTube (I can't find which one now) but made the same mistake he did before I got to that part and cracked the ribbon cable connector for the blu-ray drive (the connector flips up). It still works so I guess it makes enough of a connection, but it may be on borrowed time. I also temporarily lost the memory-card cover spring, although I found it the next day (but didn't want to open it all up again).

I got it down to the point of taking the fan out to clean it - it had some very stuck-on dust which I wiped off, but apart from that most of the dust was outside the main cooling thoroughfare and so was pretty irrelevant. After getting it all back together it didn't really make any difference. I had mine sitting on a frame I made up because, until I blew up my amp, it was sitting above that and both needed the ventilation - that probably reduced the dust getting into the insides.

In hindsight I should've just left it alone!

I only got about 2/3 the way through taking it apart too.

However I guess it was interesting to see the thing for myself. That fan motor is a total monster and the heatsink is gigantic - most of the whole base area. It must've cost a fortune to make and to assemble. With all those separate parts and shielding pieces to put together the initial production must have also been hard to test and very failure prone increasing costs.

Looking at the video tear-down and pictures of the PS4 motherboard, the thing that immediately struck me is how simple it is: I'm not a hardware engineer but I can imagine that simple means cheaper to make. I wouldn't be surprised if the PS4 is already cheaper to make than even the current PS3 on a per-unit basis - and if not, it is something that will become much cheaper much faster. It's probably a lot cheaper to make than the xbone too, and again something that will become cheaper at a faster rate. Dunno where all those passive components went.

resampling, again

Been having a little re-visit of resampling ... again. By tweaking the parameters of the data extraction code for the eye and face detectors I've come up with a ratio which lets me utilise multiple classifiers at different scales to increase performance and accuracy. I can run a face detection at one scale then check for eyes at 2x the scale, or simply check / improve accuracy with a 2x face classifier. This is a little trickier than it sounds because you can't just take 1/4 of the face and treat it as an eye - the choice of image normalisation for training has a big impact on performance (i.e. how big it is and where it sits relative to the bounding box); I have some numbers which look like they should work but I haven't tried them yet.

With all these powers of two I think I've come up with a simple way to create all the scales necessary for multi-scale detection: scale the input image a small number of times between [0.5, 1.0] so that the scale adjustment is linear, then create all other scales using simple 2x2 averaging.

This produces good results quickly, and gives me all the octave-pairs I want to any scale; so I don't need to create any special scales for different classifiers.
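The 2x2 averaging step is simple enough to show directly. A minimal C sketch for a single-channel float image, assuming even dimensions (odd edges would need clamping or cropping); `halve()` is a hypothetical name.

```c
#include <assert.h>

/* Halve a w*h single-channel image by averaging each 2x2 block;
 * dst must hold (w/2)*(h/2) values. */
static void halve(const float *src, int w, int h, float *dst) {
    assert(w % 2 == 0 && h % 2 == 0);
    int dw = w / 2;
    for (int y = 0; y < h / 2; y++)
        for (int x = 0; x < dw; x++) {
            const float *p = src + (2 * y) * w + 2 * x; /* top-left of the 2x2 block */
            dst[y * dw + x] = 0.25f * (p[0] + p[1] + p[w] + p[w + 1]);
        }
}
```

Applying it repeatedly to the original and to each intermediate scale gives every octave below them, so each classifier can pick whichever level it needs.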

The tricky bit is coming up with the initial scalers. Cubic resampling would probably work ok because of the limited range of the scale, but I wanted to try to do a bit better. I came up with 3 intermediate scales above 0.5 and below 1.0, spaced evenly on a logarithmic scale, and then approximated them with single-digit ratios which can be implemented directly using upsample/filter/downsample filters. Even with very simple ratios they are quite close to the targets - within about 1%. I then used Octave to create a 5-tap filter for each phase of the upscaling and worked out (again) how to write a polyphase filter using it all.

(too lazy for images today)

This gives 4 scales including the original, and from there all the smaller scales are created by scaling each corresponding image by 1/2 in each dimension.

| scale | approx. ratio | approx. value |
|---|---|---|
| 1.0 | - | - |
| 0.840896 | 5/6 | 0.83333 |
| 0.707107 | 5/7 | 0.71429 |
| 0.594604 | 3/5 | 0.60000 |

Actually, because of the way the algorithm works, having single-digit ratios isn't critical - it just reduces the size of the filter banks needed. But even as a lower limit to size and upper limit to error, these ratios should be good enough for a practical implementation.
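The targets in the table are 2^(-k/4) for k = 1, 2, 3, and a brute-force search over fractions with single-digit terms happens to pick the same ratios. This is only a reproduction, not necessarily how they were chosen originally; `best_ratio()` is a hypothetical helper, and the targets are written out as constants to avoid needing libm.

```c
#include <assert.h>

/* Find num/den with 1 <= num < den <= maxden closest to target,
 * measured by relative error, which is returned. */
static double best_ratio(double target, int maxden, int *num, int *den) {
    double best = 1e9;
    for (int d = 2; d <= maxden; d++)
        for (int n = 1; n < d; n++) {
            double err = (double)n / d / target - 1.0;
            if (err < 0)
                err = -err;
            if (err < best) {
                best = err;
                *num = n;
                *den = d;
            }
        }
    return best;
}

/* 2^(-1/4), 2^(-2/4), 2^(-3/4): three scales evenly spaced in log terms. */
static const double scale_targets[3] = {0.840896415, 0.707106781, 0.594603558};
```

The relative errors come out at roughly 0.9%, 1.0% and 0.9% for 5/6, 5/7 and 3/5 respectively.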

A full upfirdn implementation uses division but that can be changed to a single branch/conditional code because of the limited range of scales i.e. simple to put on epiphany. In a more general case it could just use fixed-point arithmetic and one multiplication (for the modulo), which would have enough accuracy for video image scaling.
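For the general fixed-point case, here is a sketch assuming a 16.16 format with the step d/u rounded up. Rounding up matters: for u = 5, d = 6 a truncated step sits just below the exact phase boundaries and already gives the wrong phase on the second output. The integer part of the position is the source index and one multiply recovers the phase; `FxPos`, `fx_init()` and `fx_next()` are hypothetical names.

```c
#include <assert.h>
#include <stdint.h>

/* Fixed-point (16.16) source position for resampling by u/d. */
typedef struct {
    uint32_t pos;  /* source position * 65536 */
    uint32_t step; /* d/u * 65536, rounded up */
    int u;
} FxPos;

static void fx_init(FxPos *f, int u, int d) {
    assert(u > 0 && d > 0);
    f->pos = 0;
    f->step = (((uint32_t)d << 16) + (uint32_t)u - 1) / (uint32_t)u;
    f->u = u;
}

/* Current source index and filter phase (one multiply, no division), then
 * advance. Exact while n * (u*step - (d << 16)) < 65536, n = outputs so far. */
static void fx_next(FxPos *f, int *sx, int *p) {
    *sx = (int)(f->pos >> 16);
    *p = (int)(((f->pos & 0xffff) * (uint32_t)f->u) >> 16);
    f->pos += f->step;
}
```

The drift is below 1/65536 of a source pixel per output, which for u = 5, d = 6 keeps it exact for the first 16384 outputs, plenty for a video line.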

This is a simple upfirdn filter implementation for this problem. Basically just for my own reference again.

```
// scale ratio is u / d (up / down)
u = 5;
d = 6;

// filter parameters: taps per phase
kn = 5;

// filter coefficients: arranged per-phase, pre-reversed, pre-normalised
float kern[u * kn];

// source x location
sx = 0;

// filter phase
p = 0;

// resample one row (swidth = source width, dwidth = destination width)
for (dx=0; dx<dwidth; dx++) {
    // convolve with filter for this phase
    v = 0;
    for (i=0; i<kn; i++)
        v += src[clamp(i + sx - kn/2, 0, swidth-1)] * kern[i + p * kn];
    dst[dx] = v;

    // increment src location by scale ratio using incremental star-slash/mod
    // (this branchy form assumes u <= d < 2*u, i.e. scales in (0.5, 1.0])
    p += d;
    sx += (p >= u * 2) ? 2 : 1;
    p -= (p >= u * 2) ? u * 2 : u;

    // or general case using integer division:
    // sx += p / u;
    // p %= u;
}
```

See also: https://code.google.com/p/upfirdn/.

Filters can be created using Octave via fir1(), e.g. I used fir1(u * kn - 1, 1/d). This creates a FIR filter which can be broken into 'u' phases, each 'kn' taps long.
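A self-contained C version of the row resampler above, with both the single-branch step and the general div/mod step behind a hypothetical `use_branch` flag. To keep it free of Octave it uses a 2-tap linear-interpolation kernel per phase (a stand-in, not a fir1() design, so it does not low-pass when shrinking); any per-phase kernel laid out as kern[p * kn + i] can be dropped in. The tap offset (kn - 1) / 2 equals kn / 2 for odd kn such as 5, but also lines the 2-tap kernel up on src[sx] and src[sx + 1].

```c
#include <assert.h>

static int clampi(int v, int lo, int hi) { return v < lo ? lo : (v > hi ? hi : v); }

/* u phases of a 2-tap linear-interpolation kernel: phase p sits p/u of the
 * way from src[sx] to src[sx + 1]. kern must hold 2*u floats. */
static void linear_kernel(float *kern, int u) {
    for (int p = 0; p < u; p++) {
        kern[p * 2 + 0] = 1.0f - (float)p / u;
        kern[p * 2 + 1] = (float)p / u;
    }
}

/* Resample one row by u/d with a polyphase FIR of kn taps per phase. */
static void resample_row(const float *src, int swidth, float *dst, int dwidth,
                         int u, int d, const float *kern, int kn, int use_branch) {
    int sx = 0, p = 0; /* invariant: sx + p/u == dx * d / u */
    for (int dx = 0; dx < dwidth; dx++) {
        float v = 0.0f;
        for (int i = 0; i < kn; i++)
            v += src[clampi(sx + i - (kn - 1) / 2, 0, swidth - 1)] * kern[p * kn + i];
        dst[dx] = v;
        p += d;
        if (use_branch) { /* only valid for u <= d < 2u, i.e. scales in (0.5, 1] */
            assert(u <= d && d < 2 * u);
            int two = p >= 2 * u;
            sx += two ? 2 : 1;
            p -= two ? 2 * u : u;
        } else {          /* general case */
            sx += p / u;
            p %= u;
        }
    }
}
```

On a ramp input the linear kernel reproduces the ramp exactly, which makes it an easy check that the phase bookkeeping is right before swapping in a proper filter bank.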

This stuff would fit with the scaling thing I was working on for the Epiphany and allow for high quality one-pass scaling, although I haven't tried putting it in yet. I've been a bit distracted with other stuff lately. It would also work with the NEON code I described in an earlier post for horizontal SIMD resampling, and of course for vertical it just happens.

AFAIK this is pretty much the type of algorithm that is in all hardware video scalers e.g. xbone/ps4/mobile phones/tablets etc. They might have some limitations on the ratios or the number of taps but the filter coefficients will be fully programmable. So basically all that talk of the xbone having some magic 'advanced' scaler was simply utter bullsnot. It also makes m$'s choice of some scaling parameters that cause severe over-sharpening all the more baffling. The above filter can also be broken in the same way: but it's something you always try to minimise, not enhance.

The algorithm above can create scalers of arbitrarily good quality - the scaler can never add any more signal than is originally present, so a large zoom will become blurry. But a good quality scaler shouldn't add signal that wasn't there to start with or lose any signal that was. The xbone seems to be doing both, but that's simply a poor choice of numbers and not due to the hardware.

Having said that, there are other more advanced techniques for resampling to higher resolutions that can achieve super-resolution such as those based on statistical inference, but they are not practical to fit on the small bit of silicon available on a current gpu even if they ran fast enough.

Looks like it wont reach 45 today after some clouds rolled in, but it's still a bit hot to be doing much of anything ... like hacking code or writing blogs.

Copyright (C) 2019 Michael Zucchi, All Rights Reserved.

Powered by gcc & me!