About Me

Michael Zucchi

B.E. (Comp. Sys. Eng.)

also known as Zed

to his mates & enemies!

< notzed at gmail >

< fosstodon.org/@notzed >

then again ...

After the post yesterday I had a bit of a play around with the ideas. There are a couple of details I missed.

Firstly the current rasteriser implicitly maintains a per-primitive index of live pixels for the fragment processor. If I group them all together indexed (implicitly) by the column location then I have to somehow re-group the fragments afterwards so they can all be processed by the same inner loop to amortise the setup costs. After a couple of ideas I think this needs to be implemented by sorting the fragments by shader, then primitive, then X location. Because I want to leave as much processing time as possible for complex fragment shaders I was thinking of putting this onto the REZ cores; as they currently don't have a lot of work to do. This may be tunable depending on the shader vs geometry complexity.
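The regrouping step can be sketched as a plain sort with a three-level key. This is only an illustration: the `struct fragment` layout and its field names are made up here, and on a real core a bucket or radix sort would probably be a better fit than `qsort`.

```c
#include <stdlib.h>

/* Hypothetical fragment record; field names and sizes are assumptions. */
struct fragment {
    unsigned short shader;  /* which fragment shader to run */
    unsigned short prim;    /* primitive index */
    unsigned short x;       /* column location */
    float w;                /* interpolated w for this fragment */
};

/* Order by shader, then primitive, then X location. */
static int fragment_cmp(const void *pa, const void *pb) {
    const struct fragment *a = pa, *b = pb;

    if (a->shader != b->shader) return a->shader < b->shader ? -1 : 1;
    if (a->prim != b->prim)     return a->prim < b->prim ? -1 : 1;
    if (a->x != b->x)           return a->x < b->x ? -1 : 1;
    return 0;
}

/* After this, each (shader, primitive) run is contiguous and can be fed
   to one inner loop so the setup cost is paid once per run. */
void fragment_sort(struct fragment *frags, size_t count) {
    qsort(frags, count, sizeof(frags[0]), fragment_cmp);
}
```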

Secondly if blending is not enabled/required then the primitives can be sorted by Z before they are sent to the epiphany; and this implicitly reduces most of the fragment processing to single pixels as well (depending on geometry) due to culling via the zbuffer test. i.e. all the work to split the fragment shaders from the rasterisers might not be much of a pay-off, particularly if it means losing 'free' alpha blending.

I did some testing using more stars (24x24x24) and found that proper z-order (front to back) makes a difference, but it's only something like 50%; but this is with a trivial fragment shader which isn't terribly representative.

Since time is not money here I'll give it a go anyway and see how it ends up. Now I write it down, restoring the primitive index by sorting would mean the same fragment processor could also support blending by just changing how and when the rasteriser outputs fragments; so I might be able to get the best of both.

I might also try changing the way the primitives are loaded in the mk i design: using (and/or dedicating) core 0,0 to load and distribute each band of primitives to the rendering engines to achieve (up to) a 16x bandwidth reduction of external reads should more than outweigh any wasted flops. I will also experiment with splitting the output into tiles instead of whole rows - the pathological case of a primitive taking up the whole row should be rare and if core 0,0 is handling the primitive index anyway i can add extra fidelity to the index without needing more memory to store it. I originally did rows because of the better/easier dma output and to reduce redundant setup costs and address calculations for the rasteriser, but it's pretty much a wash on that front between the two approaches and 2D tiles might be a better fit.

Update: Had more of a poke today working on the setup and communications. I decided to go with tiles for the rendering off the bat because it allows more flexibility with memory: if i have a whole row in each core it forces a potentially excessively large fixed minimum size for various buffers throughout the pipeline - or an unreasonably narrow rendering resolution. But if I split it into tiles then the height can be adjusted if I need more memory. My first attempt is with tiles of 64x8 pixels which allows for a rendering width of 768 pixels if 12 cores are used for fragment shaders and only requires the same modest 8K for a 4-channel floating point colour buffer as the 512-pixel-width whole-row implementation.
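The buffer arithmetic behind those numbers is easy to check. A minimal sketch, assuming 4-byte floats; the function names are just for illustration:

```c
#include <stddef.h>

/* Bytes needed for a floating point colour buffer covering one tile. */
size_t tile_colour_bytes(int width, int height, int channels) {
    return (size_t)width * (size_t)height * (size_t)channels * sizeof(float);
}

/* Total rendering width with one tile per fragment-shader core, side by side. */
int render_width(int tile_width, int cores) {
    return tile_width * cores;
}
```

So a 64x8 tile with 4 channels is 64 * 8 * 4 * 4 = 8192 bytes, the same as a single 512-pixel row, and 12 cores of 64-pixel-wide tiles cover 768 pixels.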

I also decided to drop the fully deferred rendering idea for now - the cost of the sorting required in the rasteriser is putting me off. But it's something I can add later, with most of the work required isolated in the rasteriser code.

I'm still using the same topology as in the previous post with 3x rasterisers each feeding 4x fragment processors; the main driving factor for that split is the memory requirements of each stage and trying to have as many fragment processors in a round-number of cores as possible. The fact that it should route well through the mesh was mostly just a nice bonus. I'm just hoping at this point that this is also a reasonable work-balance fit as well. Because the rasteriser is going to be a fixed-function unit i'm trying to use as much of its resources as possible; i'm sitting on around 27K of the RAM used total but I might be able to get that "a lot" higher with a bit of effort+luck.

So as of now I have a simple streaming protocol for the fragment shaders using an ezeport to arbitrate each individual fragment; this has a high(ish) overhead but it could be batched up by rows per processor. The primitive is fully rasterised across the 4 target tiles into a list of active fragments (x, y, w) - 8 bytes each. The w value of all are inverted together and then the fragments are streamed to the fragment processors with a bit of protocol compaction to reduce the transfer size and buffers required ('update y & prim id' message, 'render @ x' message). The work is streamed by row so interleaves across the 4x fragment processors - with enough buffer space (i.e. at least 64 fragments) should allow for some pipelining to hide latency across each 5-core rasteriser+fragment processor sub-system so long as the fragment shaders have enough work to perform.
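To give a feel for the kind of compaction meant here, the following is a rough sketch of a row-oriented encoder. The word layout (a tag bit, 15 bits of y, 16 bits of primitive id) and all the names are invented for illustration and are not the actual wire format; the point is only that y and the primitive id are sent once per row instead of once per fragment.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* A rasterised fragment: 8 bytes, as in the text. */
struct frag { uint16_t x, y; float w; };

#define MSG_UPDATE 0x80000000u  /* hypothetical tag bit for 'update y & prim id' */

/* Encode the fragments of one primitive into 32-bit words.
   Fragments are assumed to arrive grouped by row.
   out must have room for 3 * count words in the worst case.
   Returns the number of words written. */
size_t stream_encode(const struct frag *f, size_t count, uint16_t prim, uint32_t *out) {
    size_t n = 0;
    int have_y = 0;
    uint16_t y = 0;

    for (size_t i = 0; i < count; i++) {
        if (!have_y || f[i].y != y) {
            /* 'update y & prim id' message, once per row */
            y = f[i].y;
            have_y = 1;
            out[n++] = MSG_UPDATE | ((uint32_t)(y & 0x7fff) << 16) | prim;
        }
        /* 'render @ x' message: x followed by the raw bits of w */
        out[n++] = f[i].x;
        memcpy(&out[n++], &f[i].w, sizeof(float));
    }
    return n;
}
```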

Well that's where i'm at for the day. I haven't implemented the fragment shaders in the fragment processor or some of the global state broadcast from the controller. But having single messages to core 0,0 being exploded into a whole cascade of work across the mesh is a pretty big step.

(It didn't quite go as smoothly as that suggests as I hit a bug in libezehost when dealing with heterogeneous workgroups which was a little frustrating till I worked out what was going on).

Update: Another day another bit of progress. Today I hooked up a fragment shader to the rasteriser and got it to render the single triangle test. At this point it's probably a bit slower than the previous code but there is more optimisation to be done.

I had to engineer a bit more of a streaming protocol between the rasteriser and the fragment shader; so I took the opportunity to batch up rows so they can be more efficiently written and read. I added some control codes in there as well for communicating other state and parameterising some of the processing.

I'm still not that happy with the way the rasteriser is forming the fragments: the actual rasterisation process is clean/simple but it has to output the fragments to a combined staging buffer across all tiles which must then be post-processed and broken into chunks for the 4x fragment processors. Having 4x tiles across makes the queue addressing calculation overly complex (in a loop of about 15 instructions almost anything is overly complex). As I am no longer doing deferred rendering it is possible, without changing the current stream protocol, to remove all the staging buffers from the rasteriser and just write directly to the stream buffers on the target cores; but I don't have a good solution yet (close though). Although i'm not sure what i'm going to do with the massive 16K x 3 this would free up!

Update: Oh damn. I tried rendering more than one triangle ... yeah it's not good. Very slow proportional to the number of rendered (non-z-binned) fragments and at least for this workload the load balancing is also very bad - some cores render a ton of pixels and others render none due to the static scheduling. It looks like i miscalculated the rasteriser to fragment processor balance too; that 4x factor adds up very fast.

I went to my timing tester and did some off-core write tests: It seems i misunderstood the overhead of direct off-core writes from the EPUs - they seem to take a fixed (and unaccounted?) 9 cycles even if they "don't block". Yeah that's not going to cut it for this task. DMA seems to be able to get this down to about 1.7 cycles per float but the real benefit is that the epu runs independently and that easily outstrips the data generation rate. But it's going to need some bulky and hairy code to manage across multiple cores which is going to eat into any benefits. This definitely rules out a couple of ideas I had.

Hmm, maybe mk iii is closer than i thought. Perhaps just start with tiles so the output size is flexible and add some dynamic load balancing and a 2D primitive index. Perhaps group 2-4 cores together in terms of the front-end to try to deal with the primitive bandwidth issue; unless that upsets the balancing too much.

ezegpu mk i

Yesterday afternoon I started to clean up the current rasterisation code in order to dump to another point release of ezesdk. After hitting some hardware issues I found a good-enough workaround (for now) and this morning came up with a slightly more taxing/useful example for some more realistic profiling.

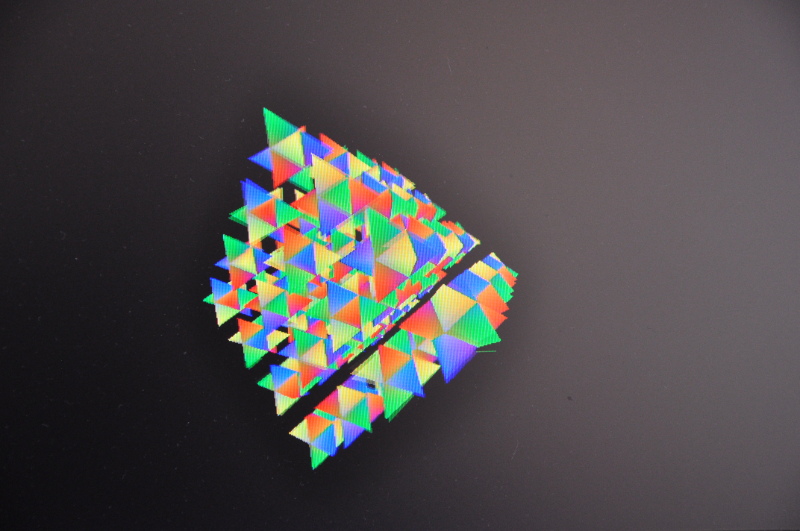

(imagine each is rotating on its centroid independently and all 64 are rotating around together, playfield is 512x512x32-bit)

Here it's running on a single ARM core at about 30fps (but don't read too much into this since it isn't arm optimised). The main visible rendering artefact is a screen tear. The epiphany can only manage 43fps on this one - so as i'm adding more geometry to the scene its performance over the arm is dropping (it's about 3x with a single star).

The loading of the primitives is becoming the bottleneck I always knew it was: I know this because if i zoom in closer the epiphany drops to 33fps but the arm chugs right down to about 15. So at least that is something I guess. OTOH I'm only using one arm core. I can have two running with little impact on each other. Actually I had 3 outputs running at once with little impact on each other (one epiphany and two arms) which was starting to get a little bit impressive to me - combining them all together with a bit of NEON would provide a meaningful boost if they had nothing better to do.

But the problem is that currently each core runs the same code. Each row is rendered completely which involves scanning all the primitives in that band and rendering them. The sequence is essentially:

    clear colour and zwbuffer
    for each primitive
        for bounding box
            interpolate edge functions, z/w, 1/w
            if inside triangle and zbuffer test passes
                save new zbuffer value
                save 1/w and x location
            fi
        rof
        for saved 1/w values
            calculate reciprocal
        rof
        for saved fragments
            render fragment to colour buffer
        rof
    rof
    for each pixel
        scale/clamp
        output
    rof

The primitives include the 3 float values for each of the 3 edge functions, the 1/w interpolator, the z/w interpolator, and the 3 colour channels: and all this data is being loaded each time through each row through each core - i.e. at least N cores per primitive (i'm using 12 to work around some stability issues and it's enough to saturate the bus handily anyway) and another multiplying factor for the number of bands their bounding box crosses. With a bounding box and control word this is 136 bytes per primitive and it adds up very fast - to multiple megabytes.
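As a rough illustration of the first inner loop in the sequence above (the bounding box scan with the edge and zbuffer tests), here is a minimal C sketch for one primitive on one row. The struct layout and names are assumptions made for the example, it treats a smaller z/w as nearer, and it evaluates each function from scratch at every pixel; real code would step the values incrementally across the row.

```c
#include <stddef.h>

/* A linear function of screen position: value(x, y) = a*x + b*y + c. */
struct plane { float a, b, c; };

/* Hypothetical primitive layout, loosely following the description above. */
struct prim {
    int x0, x1;              /* bounding box columns, x1 exclusive */
    struct plane edge[3];    /* the 3 edge functions */
    struct plane zw;         /* z/w interpolator */
    struct plane rw;         /* 1/w interpolator */
};

/* One surviving fragment: the "save 1/w and x location" step. */
struct saved { int x; float rw; };

static float plane_at(const struct plane *p, int x, int y) {
    return p->a * (float)x + p->b * (float)y + p->c;
}

/* Scan one primitive across row y. zbuf holds the row's current z/w values.
   Returns the number of fragments written to out. */
size_t raster_row(const struct prim *p, int y, float *zbuf, struct saved *out) {
    size_t n = 0;

    for (int x = p->x0; x < p->x1; x++) {
        /* inside the triangle only if all three edge functions are non-negative */
        if (plane_at(&p->edge[0], x, y) < 0.0f) continue;
        if (plane_at(&p->edge[1], x, y) < 0.0f) continue;
        if (plane_at(&p->edge[2], x, y) < 0.0f) continue;

        /* zbuffer test: assumes smaller z/w is nearer */
        float z = plane_at(&p->zw, x, y);
        if (z >= zbuf[x]) continue;

        zbuf[x] = z;
        out[n].x = x;
        out[n].rw = plane_at(&p->rw, x, y);
        n++;
    }
    return n;
}
```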

I knew this was a bottleneck but I didn't (and still don't) have a feel yet for how much work a real fragment shader is going to be. But i'm pretty sure you'll be doing interesting stuff and still not hiding this.

Despite everything being on the core there is still plenty of space left, although 512 pixels is a little on the narrow side.

ezegpu mk ii

While waking up this morning I had a few ideas that might be able to address this and hope to implement in the coming days and weeks depending on motivation (i'll have some time due to another fortunate break in work). This is still just the first shot and I haven't tested any of them with real code; so as I discover problems I may need to alter the plans - although i do seem to be approaching the original ideas I had. This whole thing is a journey for me as the last time I did any "serious" 3D was using assembly language on an Amiga and it was pretty shit really. I don't have any expectations or baggage from the last 20 years of gpu progress and have no end-goal in mind (so if you're reading this and shaking your head with all the mistakes i'm making; well yes, i just don't know what i'm doing).

So these are a grab bag of ideas just off the top of my head right now and not all of them are compatible with each other.

- Use core 0,0 as a management/controller. It is the only one which reads primitives from main memory providing a 'bandwidth multiplier' of 12x.

- Break the primitives into two parts. The first part is the data required for the rasteriser: bounding box, edge equations and z/w equation. The 1/w equation can be created from the edge equations. This can fit into 64 bytes. The second part is for the fragment shaders and is only needed if the fragment is shaded.

- Deferred rendering. A (primid,x,w) tuple per pixel is enough to be able to render it later. This drastically reduces how often the fragment shaders are executed - only once per pixel.

- Deferred rendering allows the floating point "framebuffer" to be moved to registers(!).

- Deferred rendering also reduces fragment code loading to once-per row, and varying equation loading to once per primitive per row.

- Split the zwbuffer test and fragment generation from the rendering. This allows multiple rows to be rendered for each primitive which saves some data transfer and setup costs. The zbuffer becomes multi-row but lives in fewer cores freeing up resources elsewhere. Due to the mesh network design output of the current fragment candidate can be written to the fragment shader cores without the need for any arbitration - at the same speed as a local write (if my understanding of the hardware is correct).
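The deferred rendering idea in the list above can be sketched roughly as follows: each pixel keeps only the nearest (primid, x, w) tuple seen so far, and shading happens once per pixel after all primitives have been submitted. The names, the `NO_PRIM` marker and the "smaller z/w is nearer" convention are assumptions for the example.

```c
#include <float.h>
#include <stddef.h>
#include <stdint.h>

#define ROW_WIDTH 512        /* row width used by the mk i code */
#define NO_PRIM   0xffffu    /* hypothetical marker for "nothing drawn here" */

/* The (primid, x, w) tuple: 8 bytes per pixel. */
struct deferred {
    uint16_t primid;
    uint16_t x;
    float w;
};

struct row_state {
    float zw[ROW_WIDTH];
    struct deferred frag[ROW_WIDTH];
};

void row_clear(struct row_state *r) {
    for (int x = 0; x < ROW_WIDTH; x++) {
        r->zw[x] = FLT_MAX;
        r->frag[x].primid = NO_PRIM;
        r->frag[x].x = (uint16_t)x;
        r->frag[x].w = 0.0f;
    }
}

/* Rasteriser side: keep the candidate only if it passes the zbuffer test.
   Returns 1 if the pixel was replaced, 0 if the candidate was culled. */
int row_submit(struct row_state *r, uint16_t primid, uint16_t x, float zw, float w) {
    if (zw >= r->zw[x])
        return 0;
    r->zw[x] = zw;
    r->frag[x].primid = primid;
    r->frag[x].w = w;
    return 1;
}

/* Fragment side: the number of pixels that still need shading, at most
   one shader invocation per pixel regardless of overdraw. */
size_t row_pending(const struct row_state *r) {
    size_t n = 0;

    for (int x = 0; x < ROW_WIDTH; x++)
        if (r->frag[x].primid != NO_PRIM)
            n++;
    return n;
}
```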

So putting most of that together this is the current image forming in my head:

    +------+   +------+   +------+   +------+
    | CTRL |   | FR00 |   | FR10 |   | FR20 |
    +------+   +------+   +------+   +------+
      |||         |          |          |
    +------+   +------+   +------+   +------+
    | REZ0 |--o| FR01 |   | FR11 |   | FR21 |
    +------+   +------+   +------+   +------+
       ||         |          |          |
    +------+   +------+   +------+   +------+
    | REZ1 |-  | FR02 |--o| FR12 |   | FR22 |
    +------+   +------+   +------+   +------+
        |         |          |          |
    +------+   +------+   +------+   +------+
    | REZ2 |-  | FR03 |-- | FR13 |--o| FR23 |
    +------+   +------+   +------+   +------+

This is arranged assuming the mesh goes across rows first (i think it does) so all writes between cores should never block. REZ0 only writes to FR0x, REZ1 only writes to FR1x, etc.

- CTRL

- Main controller/primitive reader. This isn't actually much work and it leaves room/time for other functional blocks such as caches. It reads the primitives for each band and then copies them to the rasterisers. The bands will be indexed (or populated) in rows of 12. It could also be in charge of writing rendered pixels from the memory of the fragment shader cores to the framebuffer as an easy way to serialise (optimise) the writes.

- REZ0-2

- Rasteriser - edges and zwbuffer. These rasterise and perform zbuffering on 12 rows at once (4 rows each). It can send the (primid, x,1/w) tuple to the fragment processors using a single 8-byte, non-blocking, non-arbitrated(!) write. This is just splitting the first inner loop into a separate processor.

- FRXY

- Fragment processors. Whilst the rasteriser is populating the next row of input the fragment processor is rendering the deferred pixels. This doesn't need a floating point framebuffer since each pixel is only rendered once. Also means it doesn't need to clear it (ok; alpha blending would affect both of these but it affects the whole pipeline). The reciprocal pass will probably go here and the fact that it only needs to run once per visible pixel is another bonus from deferred rendering (although some reverse painters algorithm would also help the number of times a pixel makes it to the fragment processor in the mk i design).

The controller and fragment processors can be further pipelined internally to employ scatter-gather DMA to reduce latency effects.

This all looks pretty complicated but it should be a fairly modest amount of code - it has to be otherwise it won't fit! Actually by using deferred rendering and splitting the stuff up I will have big chunks of memory to spare; I could probably up the maximum rasterisation width to 1024 pixels although now i think about it that's too big for the memory speed. Something in the middle is more likely to be useful.

Because there are now different parts doing different things the differences in runtime of each component will start to dictate the total system performance (and hopefully not the read memory bandwidth). I don't know yet what that will be and it will depend on the rendering task and fragment shaders. If for example the fragment shaders are complicated and dominating execution time then scaling/clamping of the output, and/or reciprocal of the input could be moved elsewhere memory permitting.

It lives!

Oops, wrong stride.

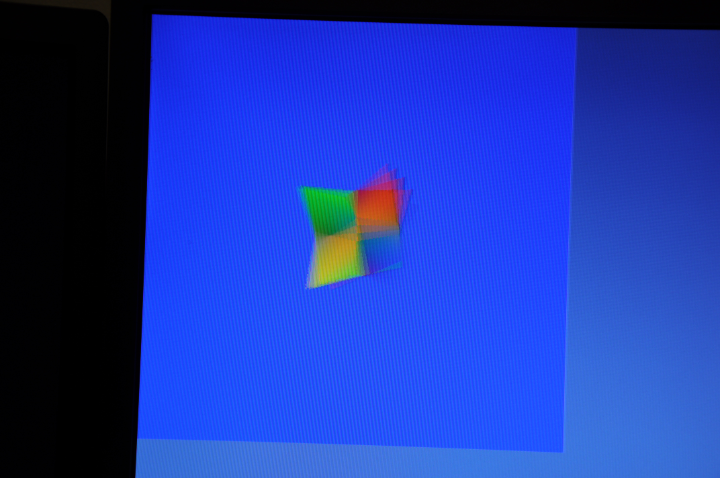

It lives!

I found the 1/2 hour required to hook up the epiphany rasteriser tonight.

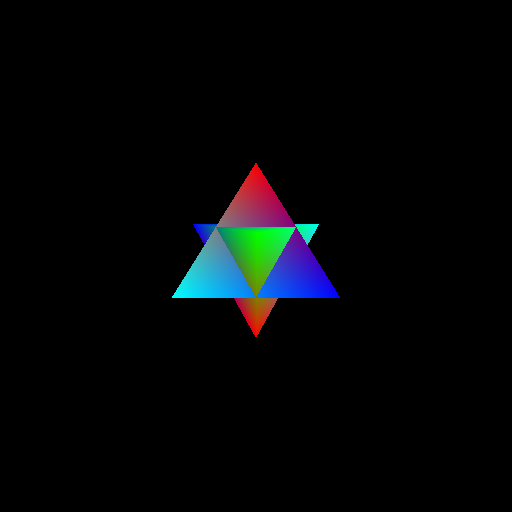

Fun facts for that rotating double-triangular pyramid:

- On Kaveri single-core 1000 frames takes 1.8s;

- On Zynq ARM single-core 1000 frames takes 14.0s;

- On epiphany-16 1000 frames takes 6.0s.

The epiphany should scale much better than the ARM, but I don't feel like poking more tonight.

Gawd i just realised that screenshot looks way too much like the damn windoze logo. Just an unfortunate coincidence as the colours were just the primaries and the background colours are supposed to be Commodore-64 like (the camera isn't picking them up very well).

The lack of any vblank interrupt in the video hardware ... well that's very uninspiring too (not that it should really come in to play, but it's the principle of the thing).

Update: Ok I had a tiny play. If I scale the model transform by 2x the times go to 2.6s, 23.5s, and 7.2s. i.e. much better scalability on the epiphany as expected.

Another rotation and scale invariant feature descriptor?

Just had an idea whilst waking up this morning so I punched out a quick couple hundred lines of code before lunch.

I guess it works?

This is just the first output, without any tuning or much mucking about.

It uses LOG to determine the scale-invariant feature locations and Gaussian edge detectors to determine the rotation invariance. A local binary pattern is used for the feature descriptor.

Not sure if someone else has taken the same approach (the scale/rotation invariance is a standard technique), possibly worth writing this one up if not.

Worth a beer at any rate. Cheers big ears.

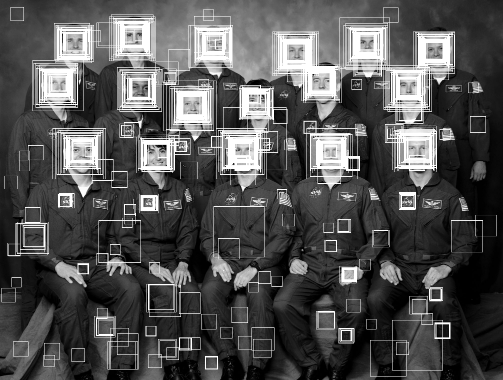

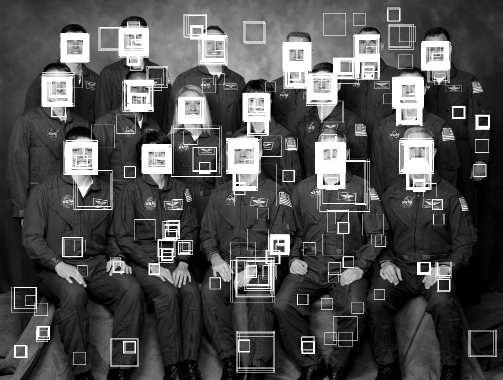

A weak face detector?

First, here is the raw output from one of the haar cascades included in OpenCV (frontalface-alt) using the Viola & Jones object detection algorithm. This is a 20x20 face detector which requires 2135 feature tests via 4630 individual rectangles and approximately 200KB of constant data storage at runtime assuming optimal data packing.

It is being executed over a good number of steps over a range of scales and tested at every pixel location of the given scale.

To be usable as a robust face detector further post-processing on the raw hit rectangles must be performed, together with a wise choice of search scale, and then further work is needed to weed out false positives such as the NASA logo or hands which would still pass this process.

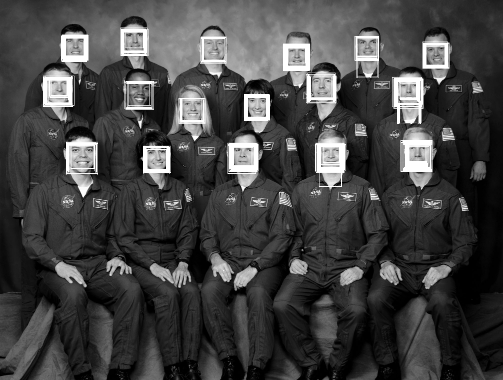

The following is the raw output from a short training run of the fodolc algorithm over the same scale range. This is a 20x20 face detector which requires exactly 400 tests of a 4 bit local binary pattern (LBP) encoded image and 800 bytes of data storage at runtime. This is using a loose threshold to give comparable results to the haar cascade and to see what sort of features give false positives.

Unlike the haar cascade the output is simply a distance. This can simplify the post-processing to a threshold and basic non-minimal suppression trough detection.

As an example the following is the raw output from the same fodolc detector but with a tighter threshold.

There are still some false positives but obviously it is something of an improvement - trivial trough detection would clean it up to a point exceeding the result of the haar cascade.
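For what it's worth, the threshold plus trough detection step could look something like the sketch below. It is an assumption about how such a post-process might be written, not the code used for these images: a location counts as a hit if its distance is under the threshold and no 8-connected neighbour has a smaller distance. Equal-valued plateaus will report more than one hit.

```c
#include <stddef.h>

/* dist: row-major map of classifier distances, width * height entries.
   hits: receives x,y pairs (2 ints per hit), up to max_hits of them.
   Returns the number of hits stored. */
size_t find_troughs(const int *dist, int width, int height, int threshold,
                    int *hits, size_t max_hits) {
    size_t n = 0;

    for (int y = 0; y < height; y++) {
        for (int x = 0; x < width; x++) {
            int d = dist[y * width + x];
            int is_min = d < threshold;

            /* suppress anything that has a strictly better neighbour */
            for (int dy = -1; dy <= 1 && is_min; dy++) {
                for (int dx = -1; dx <= 1; dx++) {
                    int nx = x + dx, ny = y + dy;

                    if (nx < 0 || ny < 0 || nx >= width || ny >= height)
                        continue;
                    if (dist[ny * width + nx] < d)
                        is_min = 0;
                }
            }

            if (is_min && n < max_hits) {
                hits[2 * n] = x;
                hits[2 * n + 1] = y;
                n++;
            }
        }
    }
    return n;
}
```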

This is only a weak classifier taken from just the first 15 minutes or so of classifier training on 10 000 training images extracted or synthesised solely from 880 portrait photographs. Longer training always generates a better detector. A higher quality training set should generate a better detector. Training is by a very basic genetic algorithm.

In Java on a beefy workstation the execution time is roughly the same between the two algorithms but the fodolc algorithm can be implemented in efficient SIMD or OpenCL/GPU with very little effort for significant (order of magnitude) gains.

The following is the entire code of the classifier outside of the LBP conversion and of course the classifier table itself.

    // Can you copyright something so simple??
    public class Classify {

        private final static short[] face = { ... };

        public int score(byte[] lbp, int stride, int xi, int yi) {
            int score = 0;

            for (int y=0,i=0;y<20;y++)
                for (int x=0;x<20;x++,i++)
                    score += (face[i] >>> lbp[x+xi+(y+yi)*stride]) & 1;

            return score;
        }
    }

Fast Face Detection in One Line of Code has a link to an unpublished paper with a brief overview of the algorithm and local binary pattern used.

Please comment on this post if you think this is interesting. Or even if you're just as dumbfounded as I am that something so simple could possibly work.

The face detector was trained using images from the FERET database of facial images collected under the FERET program, sponsored by the DOD Counterdrug Technology Development Program Office (USA).

http://www.zedzone.au/blog/2014/08/a-weak-face-detector.html

epiphany gpu and bits

Work was a bit too interesting this week to fit much else into my head so I didn't get much time to play with the softgpu until today.

This morning i spent a few hours just filling out a basic GLES2 style frontend (it is not going to ever be real GLES2 because of the shader compiler thing).

I had most of it for the Java SoftGPU code but I wanted to make some improvements and the translation to C always involves a bit of piss farting about fixing compile errors and runtime bugs. Each little bit isn't terribly big but it adds up to quite a collection of code and faffing about - i've got roughly 4KLOC of C and headers just to get to this point and double that in Java that I used to prototype a few times.

But as of an hour or two ago I have just enough to be able to take this code:

    int main(int argc, char **argv) {
        int res;
        struct matrix4 m1, m2;

        res = fb_open("/dev/fb0");
        if (res == -1) {
            perror("Unable to open fb");
            return 1;
        }

        pglInit();
        pglSetTarget(fb_getFrameBuffer(), fb_getWidth(), fb_getHeight());

        glViewport(0, 0, 512, 512);

        glEnableVertexAttribArray(0);
        glEnableVertexAttribArray(1);
        glVertexAttribPointer(0, 4, GL_FLOAT, GL_TRUE, 0, star_vertices);
        glVertexAttribPointer(1, 3, GL_FLOAT, GL_TRUE, 0, star_colours);

        matrix4_setFrustum(&m1, -1, 1, -1, 1, 1, 20);
        matrix4_setIdentity(&m2);
        matrix4_rotate(&m2, 45, 0, 0, 1);
        matrix4_rotate(&m2, 45, 1, 0, 0);
        matrix4_translate(&m2, 0, 0, -5);
        matrix4_multBy(&m1, &m2);

        glUniformMatrix4fv(0, 1, 0, m1.M);

        glDrawElements(GL_TRIANGLES, 3*8, GL_UNSIGNED_BYTE, star_indices);
        glFinish();

        fb_close();

        return 0;
    }

And turn it into this:

The vertex shader will run on-host and the code for the one above is:

    static void vertexShaderRGB(float *attrib[], float *var, int varStride, int count, const float *uniforms) {
        float *pos = attrib[0];
        float *col = attrib[1];

        for (int i=0;i<count;i++) {
            matrix4_transform4(&uniforms[0], pos, var);
            var[4] = col[0];
            var[5] = col[1];
            var[6] = col[2];

            var += varStride;
            pos += 4;
            col += 3;
        }
    }

I'm passing the vertex arrays as individual elements in the attrib[] array: i.e. attrib[0] is vertex array 0 and the size matches that set by the client code. For output, var[0] to var[3] is the equivalent of "gl_Position" and the rest are "user set" varyings. The vertex arrays are converted to float1/float2/float3/float4 before it is called (actually only GL_FLOAT is implemented anyway) so they are not just the raw arrays.

I'm doing it this way at present as it allows the draw commands to iterate through the arrays in the presumably long dimension of the number of elements rather than packing across the active arrays. It can also allow for NEON-efficient shaders if the data is set-up in a typical way (i.e. float4 data) and because all vertices are processed in a batch.

For glDrawElements() I implemented the obvious optimisation in that it only processes the vertices indexed by the indices array and only once per unique vertex. The processed vertices are then expanded out using the indices before being passed to the primitive assembler. So for the triangular pyramid i'm using 8 input vertices to generate 24 triangle vertices via the indices which are then passed to the primitive assembler. Even a very simple new-happy prototype of this code in my Java SoftGPU led to a 10% performance boost of the blocks-snake demo.
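The shape of that optimisation is roughly as below. This is a sketch rather than the actual softgpu code: `shade_vertex` is a stand-in for a real vertex shader, all names are made up, and it assumes byte indices as in the GL_UNSIGNED_BYTE example with the same number of floats in and out per vertex.

```c
#include <stddef.h>
#include <string.h>

/* Stand-in for a real vertex shader: just doubles each component. */
void shade_vertex(const float *in, float *out, size_t n) {
    for (size_t i = 0; i < n; i++)
        out[i] = in[i] * 2.0f;
}

/* indices: count byte indices into the vertex array.
   in:      source vertices, n floats each.
   cache:   shaded vertices, room for (largest index + 1) * n floats.
   out:     expanded vertices for the primitive assembler, count * n floats.
   Returns the number of vertices actually shaded. */
size_t draw_elements(const unsigned char *indices, size_t count,
                     const float *in, size_t n, float *cache, float *out) {
    unsigned char used[256] = {0};
    size_t shaded = 0;

    /* pass 1: find which vertices are referenced at all */
    for (size_t i = 0; i < count; i++)
        used[indices[i]] = 1;

    /* pass 2: shade each referenced vertex exactly once */
    for (size_t v = 0; v < 256; v++) {
        if (used[v]) {
            shade_vertex(in + v * n, cache + v * n, n);
            shaded++;
        }
    }

    /* pass 3: expand through the indices for the primitive assembler */
    for (size_t i = 0; i < count; i++)
        memcpy(out + i * n, cache + indices[i] * n, n * sizeof(float));

    return shaded;
}
```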

But I got to the point of outputting a triangle with perspective and thought i'd blog about it. Even though it isn't very much work I haven't hooked up the epiphany backend yet, i'm just a bit too bloody tired and hungry right now. For some reason spring means i'm waking up way too early, not sure why i'm so hungry after a big breakfast, and the next bit has been keeping my head busy all week ...

fodolc

I've been playing quite a bit with my object detector algorithm and I came up with a better genetic algorithm for training it - and it's really working quite well. Mostly because the previous algorithm just wasn't very good and tended to get stuck in a monoculture due to the way it pooled the total population rather than separating the generations. In some cases I'm getting better accuracy and similar robustness as the viola & jones ('haarcascade') detectors, although i haven't tested it widely.

In particular I have a 24x16 object detector which requires only 768 bytes of classifier data (total) and a couple of lines of code to evaluate (sans the local binary pattern setup which isn't much more). It can be trained in a few hours (or much faster with OpenCL/GPU) and, whilst not as robust, as few as 100 positive images are enough to get a usable result. The equivalent detectors in OpenCV need 300K-400K of tables - at the very least - and that's after a lot of work on packing them down. I'm not employing boosting or validation set feedback yet - mostly because I don't understand it/can't get it to work - so maybe it can be improved.

Unlike other algorithms i'm aware of every stage is parallel-efficient to the instruction level and I have a NEON implementation that classifies more than one pixel per clock cycle. I may port it to parallella, I think across the 16 cores I can beat the per-clock performance of NEON but due to its simplicity bandwidth will be the limiting factor there (again). At least the classifier data and code can fit entirely on-core and leave a relative-ton of space for image cache. It could probably fit into the FPGA for that matter. I might not either, because I have enough to keep me busy and other unspecified reasons.

asm vs c II

I dunno, i'm almost lost for words on this one.

    typedef float float4 __attribute__((vector_size(16))) __attribute__((aligned(16)));

    void mult4(float *mat, float4 * src, float4 * dst) {
        dst[0] = src[0] + mat[0];
    }

    notzed@minized:src$ make simd.o
    arm-linux-gnueabihf-gcc -c -o simd.o simd.c -O3 -mcpu=cortex-a9 -marm -mfpu=neon
    notzed@minized:$ arm-linux-gnueabihf-objdump -dr simd.o

    simd.o:     file format elf32-littlearm

    Disassembly of section .text:

    00000000 <mult4>:
       0:   f4610aef    vld1.64   {d16-d17}, [r1 :128]
       4:   ee103b90    vmov.32   r3, d16[0]
       8:   edd07a00    vldr      s15, [r0]
       c:   e24dd010    sub       sp, sp, #16
      10:   ee063a10    vmov      s12, r3
      14:   ee303b90    vmov.32   r3, d16[1]
      18:   ee063a90    vmov      s13, r3
      1c:   ee113b90    vmov.32   r3, d17[0]
      20:   ee366a27    vadd.f32  s12, s12, s15
      24:   ee073a10    vmov      s14, r3
      28:   ee313b90    vmov.32   r3, d17[1]
      2c:   ee766aa7    vadd.f32  s13, s13, s15
      30:   ee053a90    vmov      s11, r3
      34:   ee377a27    vadd.f32  s14, s14, s15
      38:   ee757aa7    vadd.f32  s15, s11, s15
      3c:   ed8d6a00    vstr      s12, [sp]
      40:   edcd6a01    vstr      s13, [sp, #4]
      44:   ed8d7a02    vstr      s14, [sp, #8]
      48:   edcd7a03    vstr      s15, [sp, #12]
      4c:   f46d0adf    vld1.64   {d16-d17}, [sp :64]
      50:   f4420aef    vst1.64   {d16-d17}, [r2 :128]
      54:   e28dd010    add       sp, sp, #16
      58:   e12fff1e    bx        lr
    notzed@minized:/export/notzed/src/raster/gl/src$

I thought that the store/load/store via the stack was a particularly cute bit of work, especially given the results were already in the right order and in adequately aligned registers. r3 also seems a little too popular.

I guess the vector extensions to gcc just aren't finished - or just don't work. Maybe I used the wrong flags or my build is broken. It produces similar junk code for the epiphany mind you. I've never really tried using them but after a bunch of OpenCL in the past I thought it might be worth a shot to access SIMD without machine code.

My NEON is very rusty but I think it could be something like this:

    notzed@minized:src$ arm-linux-gnueabihf-objdump -dr neon-mat4.o

    neon-mat4.o:     file format elf32-littlearm

    Disassembly of section .text:

    00000000 :
       0:   f4a02caf    vld1.32   {d2[]-d3[]}, [r0]
       4:   f4210a8f    vld1.32   {d0-d1}, [r1]
       8:   f2000d42    vadd.f32  q0, q0, q1
       c:   f4020a8f    vst1.32   {d0-d1}, [r2]
      10:   e12fff1e    bx        lr

As can be seen from the names I started with a "simple" matrix multiply but whittled it down to something I thought the compiler could manage after seeing what it did to it - this is just a meaningless snippet.
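For reference, the same four instructions can also be spelled with the NEON intrinsics from `arm_neon.h`, which sits somewhere between the gcc vector extensions and hand-written assembly. This is only a sketch: the function name is made up, I make no claim about what code any given compiler version generates from it, and the scalar fallback is there purely so the snippet also builds on a non-ARM host.

```c
#if defined(__ARM_NEON) || defined(__ARM_NEON__)
#include <arm_neon.h>

/* dst[0..3] = src[0..3] + mat[0], as in the hand-written version above */
void mult4_neon(const float *mat, const float *src, float *dst) {
    float32x4_t m = vld1q_dup_f32(mat);   /* broadcast mat[0] to all 4 lanes */
    float32x4_t s = vld1q_f32(src);       /* load 4 floats */

    vst1q_f32(dst, vaddq_f32(s, m));      /* add and store */
}
#else
/* Scalar fallback with the same result, for hosts without NEON. */
void mult4_neon(const float *mat, const float *src, float *dst) {
    for (int i = 0; i < 4; i++)
        dst[i] = src[i] + mat[0];
}
#endif
```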

After a pretty long day at work I was just half-heartedly poking at filling out the frontend to the epiphany gpu but just got distracted by whining at the compiler again. I should've just started with NEON, after a little poking I remembered how nice it was.

And I thought I hated tooltips ...

Found on some m$ site whilst looking for how to turn off tooltips:

I've spent over 10 hours the last two days trying to solve the same problem. Apparently it is impossible to get rid of tooltips in Win7, which makes it absolutely unusable for me. I've wasted my time. I've wasted my money. Just installed Ubuntu which doesn't cost a penny and with one checkmark I get to disable all tooltips. With Microsoft I get to pay $100 for something that seems intentionally designed for maximum annoyance. Goodbye Microsoft. Last dime you ever got from me.

Had a laugh then thought fucking around in regedit wasn't worth it and so left it at that.

But yeah tooltips suck shit. If your GUI needs tooltips to be usable, it just needs bloody fixing. Icons just don't work when you've got more than a dozen or so choices (not much does for a human).

Copyright (C) 2019 Michael Zucchi, All Rights Reserved.

Powered by gcc & me!