About Me

Michael Zucchi

B.E. (Comp. Sys. Eng.)

also known as Zed

to his mates & enemies!

< notzed at gmail >

< fosstodon.org/@notzed >

More video player work

Mucked around with a few things in JJPlayer last night and this morning. Nothing on TV last night and I just wanted a couple of hours today.

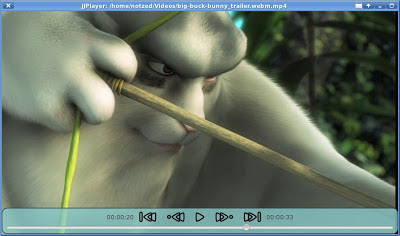

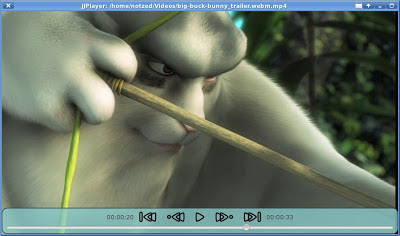

The new buttons and styling.

There were a few annoying things along the way. The keyboard input was a bit of a pain to get working: I had to override Slider.requestFocus() so it wouldn't grab keyboard focus when used via the mouse (despite not being in the focus traversal), and I added a 'glass-pane' over the top of the window to grab all keyboard events. I had to remove all buttons from the focus traversal group and only put the 'glass-pane' in it. I made the glass-pane mouse-transparent so the buttons still work.

Another strange thing was full-screen mode. Although JavaFX captures ESC to turn it off, the ESC key event still ends up coming through to my keyboard handler. i.e. I have to track the fullscreen state separately and quit only if it's pressed twice.
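The double-ESC handling can be sketched in plain Java, away from the toolkit. This is only an illustration of the idea: the class and method names are made up and nothing here is taken from the JJPlayer source. It assumes the key handler sees every ESC press, including the one JavaFX has already used to leave full-screen.

```java
// Hypothetical helper, not taken from JJPlayer: decides what an ESC press should do.
final class EscapeTracker {
    // Our own copy of the full-screen state, tracked separately from the toolkit.
    private boolean fullScreen;

    /** Call when the player enters full-screen mode. */
    void enterFullScreen() {
        fullScreen = true;
    }

    /**
     * Call from the key handler for every ESC event.
     * Returns true if the player should quit, false if this press only left full-screen.
     */
    boolean onEscape() {
        if (fullScreen) {
            // The toolkit has already left full-screen for this same press,
            // so just record that and swallow the event.
            fullScreen = false;
            return false;
        }
        return true;
    }
}
```

The first ESC after entering full-screen is swallowed, and only the next one asks to quit.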

Next to fix is those a/v sync issues ...

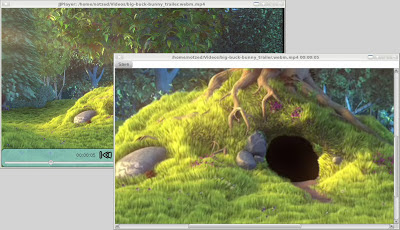

Update: Well, instead I added a frame capture function. Hit print-screen and it captures the currently displayed frame (raw RGB) and opens a pannable/zoomable image viewer. From here the image can be saved to a file.

Although I haven't implemented it, one could imagine adding options to automatically annotate it in various ways - timestamp, filename, and so on.

Update 2: I subsequently discovered "accelerators", so i've changed the code to use those instead of the 'glass pane' approach - a ton of pointless anonymous inner Runnables, but it feels less like a hack.

scene.getAccelerators().put(new KeyCodeCombination(...), ...);

Update 3: I tried it on my laptop which is a bit dated and runs 32-bit fedora with a shitty Intel onboard GPU (i.e. worthless). It runs, but it's pretty inefficient - 2-4x higher load than mplayer on the same source. Partly due to the Java2D pipeline I guess but it's probably all the excess frame copying and memory usage. I tried to do some profiling on the workstation but didn't have much luck. It just seems to be spending most of its time in Gtk_MainLoop, although the profiler doesn't seem to know how to sort properly either.

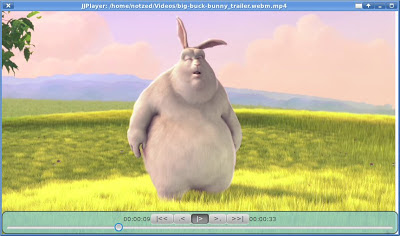

JJPlayer controls

This morning I added sound and started work on some controls for the JavaFX version of JJPlayer.

It's mostly pretty buggy but it kind of works ... some of the time.

I got JavaSound working easily enough at least - although i'm just converting to stereo 16-bit for now as I did on Android.

But there are some big problems with the way i'm handling the a/v sync when pausing or seeking, so things get messed up some of the time. Too lazy to fix it right now ...

I kept playing a bit with the UI and added a fade in/out of the controls as well as hiding the mouse pointer, and some stylesheet stuff.

Clearly the styling and the ASCII-icon buttons both leave something to be desired at this point.

I thought i'd have trouble getting the hiding to reverse if the user started moving the mouse whilst it was hiding, but it turned out to be pretty easy. Just change the rate on the fading out animation to reverse it, and it still runs to completion at the other end instead. So it fades in and out smoothly depending on user action, and without any eye-jars.

JJPlayer for JavaFX

I finally had enough of Android and started poking at a JavaFX version of JJPlayer this morning.

I'm hoping that working on both of them i'll be able to fine tune the design a bit and help with debugging - e.g. i already fixed the end-of-file bugs and "discovered" the av_frame_get_best_effort_timestamp() function (guh, what a name, bad memories of GNOME dev flooding back).

So far I just have unscaled video going (no sound). There are no controls but I should think JavaFX will make that pretty easy to add (and an opportunity to add some bling). But it can play multiple videos in sequence and the window sizes to fit without eye-jars. Once I get sound going i'll look at filling out the GUI for it - I prefer the OpenAL API but I might try with JavaSound this time.

Performance seems ok. My first measurements had it on par with mplayer with the GL backend, although that seemed to be specific to one video (actually it's a touch lower cpu usage on that video). Uses 3-4x memory, but that's cheap isn't it? Unfortunately, as one can only write RGB data to WritableImages, it has to do a YUV-RGB step separately and then perform a redundant copy, but there's not much option there.

No screenshot yet as it's pretty basic ...

The code (GPL3) will be in the jjmpeg-1.0 branch of jjmpeg by the time you read this or shortly thereafter inside jjmpeg-javafx.

JJPlayer 1.0-a1

I decided I had enough hacked into the JJPlayer code to warrant a release - this one is actually (somewhat) usable as a player now, unlike the previous releases which were totally broken. I'm getting a bit bored with it so it seemed as good a point as any.

My ainol elf 2 can manage a test 720p h.264 file pretty well - with only the occasional group of stutters on complex scenes with an eventual catch up. Unfortunately the Mele can barely handle PAL MPEG ... Update: I was working on some decoding-skipping throttle code, and for whatever reason, the Mele can now handle PAL MPEG just fine (nothing to do with the new code). No idea ... maybe i/o related - the Mele seems to go funny after suspend too.

I managed to fit in a few useful things in no particular order:

- Runs full-screen - no navigation or title bars (they return temporarily on touch);

- Busy spinner when opening;

- Open is performed asynchronously;

- Seek mostly works;

- Much better stability;

- Display of frames can be dropped to try to catch up;

- Video is synced to the audio and usually works (it doesn't drift at any rate);

- Basic Android activity lifecycle stuff works, pause/resume, etc;

- Improved the startup time;

- Keeps the screen turned on (i think);

- Some developer oriented debug stuff; and

- A preferences page.

There are still some unsolved issues wrt audio sync, pixels are assumed square, the possibility of more aggressive frame dropping (i.e. not decoding B frames and/or I frames, etc), very inefficient memory use (e.g. i have 31 AVFrame buffers and 31 YUV textures although I only need 2 of the latter), performance, jarring UI manoeuvres when the UI is shown/hidden, and many others I can't be bothered enumerating at this point. And that doesn't include the 'missing features' like a pause button or subtitles.

Most of the Android specific code is a huge mess - experiments are still on-going.

See the downloads link in the jjmpeg project.

But if you're after a more polished free software Android video player, go look at Dolphin Player. Although that seems to have some audio glitches and drift, and is implemented afaict as a native SDL app ported to Android rather than as a Java player.

Whilst writing this my ADSL network went down - probably heat related - so maybe that's a hint to go drink beer instead of hack. Might start with the coffee i brewed and forgot to drink this morning - that'll make a nice iced coffee. Mmm, blenders.

We're in the middle of a heat-wave and I should probably be at the beach or something - but the house is (relatively) cool and I can get out and water the garden to keep it alive here ... meant to be 44 degrees today, but that isn't even enough to get a record. Yesterday I got out an IR thermometer and measured 75 degrees on the lid of a plastic green sulo bin that isn't even in full-sun all day. Today has the added bonus of being windy too - feels like being inside a fan-forced oven when you walk outside. Maybe it's better not to go riding in such weather ...

NEON YUV vs GPU

This morning I did some experiments with Android and the YUV code - although patience is wearing thin for such a shitty alternative to GNU/Linux that Android is. As icing on the cake most of the android developer site just doesn't render on most of my browsers anymore - I just get junk. Well I can always go elsewhere with my spare time ...

I changed the code to perform a simple doubling up of the U and V components without a separate pass, and changed to an RGB 565 output stage and embedded it into the code in another mess of crap. Then I did some profiling - comparing mainly to the frame-copying version.

Interestingly it is faster than sending the YUV planes to the GPU and using it to do the YUV conversion - and that is only including the CPU time for the frame copy/conversion, and the texture load. i.e. even using NEON it uses less CPU time (and presumably much less GPU time) even though it's doing more work. The volume of texture memory copied is also 33% more for the RGB565 case vs YUV420p one.

Still, 1ms isn't very much out of 10 or so.
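The 33% figure for texture volume falls straight out of the bytes per pixel, and is easy to check. A small sketch, with made-up class and method names, assuming even frame dimensions:

```java
// Illustrative arithmetic only; the names are hypothetical.
final class FrameBytes {
    /** YUV420p: a full-size Y plane plus U and V planes subsampled 2x2 (even width/height assumed). */
    static long yuv420p(int width, int height) {
        return (long) width * height + 2L * (width / 2) * (height / 2);
    }

    /** RGB565: two bytes per pixel. */
    static long rgb565(int width, int height) {
        return 2L * width * height;
    }
}
```

That is 1.5 bytes per pixel against 2, so RGB565 moves 4/3 the data of YUV420p for any frame size.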

The actual YUV420p to RGB565 conversion is only around 1/2 the speed of a simple AVFrame.copy() - ok considering it's writing 33% more data and I didn't try to optimise the scheduling.

Stop press: Whilst writing this I thought i'd look at the scheduling and also using the saturating left shift to clamp the values implicitly. Got the inner loop down from 54 to 35 cycles (according to the cycle counter), although it only runs about 10% faster. Better than a kick in the nuts at any rate. Fortunately, due to the way I already used registers, I could decouple the input loading/formatting from the calculations, so i simply interleaved the next block of data load within the calculations wherever there were delay slots and only made the data loading conditional.

The (unscheduled) output stage now becomes:

@ saturating left shift (signed in, unsigned out) automatically clamps to unsigned [0,0xffff]

vqshlu.s16 q8,#2 @ red in upper 8 bits

vqshlu.s16 q9,#2

vqshlu.s16 q10,#2 @ green in upper 8 bits

vqshlu.s16 q11,#2

vqshlu.s16 q12,#2 @ blue in upper 8 bits

vqshlu.s16 q13,#2

vsri.16 q8,q10,#5 @ insert green

vsri.16 q9,q11,#5

vsri.16 q8,q12,#11 @ insert blue

vsri.16 q9,q13,#11

vst1.u16 { d16,d17,d18,d19 },[r3]!

Which saves all those clamps.
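For checking the output against something slower but obvious, the same stage can be modelled one lane at a time in scalar Java. This is a sketch rather than code from yuv-neon.s: the names are invented, and it assumes each channel arrives as a signed 16-bit value holding the 8-bit result times 64.

```java
// Scalar model of the vqshlu/vsri output stage; names are hypothetical.
final class Rgb565 {
    /** Models vqshlu.s16 #2 on one lane: signed in, shifted left by 2, saturated to unsigned 16 bits. */
    static int qshlu2(int v) {
        if (v < 0) {
            return 0;
        }
        return Math.min(v << 2, 0xffff);
    }

    /** Packs three fixed-point channels (8-bit value times 64) into one RGB565 word. */
    static int pack(int r, int g, int b) {
        int r16 = qshlu2(r);
        int g16 = qshlu2(g);
        int b16 = qshlu2(b);
        // vsri #5 keeps the top 5 bits of red and inserts green below them.
        int rg = (r16 & 0xf800) | (g16 >> 5);
        // vsri #11 keeps the top 11 bits and inserts blue in the low 5.
        return (rg & 0xffe0) | (b16 >> 11);
    }
}
```

Negative inputs come out as 0 and overshoots as full scale, which is the clamping the shift gives for free.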

As suspected, the 8 bit arithmetic leads to a fairly low quality result, although the non-dithered RGB565 can't help either. Perhaps using shorts could improve that without much impact on performance. Still, it's passable for a mobile device given the constraints (and source material), but it isn't much chop on a big tv.

Of course, all this wouldn't be necessary if one had access to the overlay framebuffer hardware present on pretty well all ARM SOCs ... but Android doesn't let you do that does it ...

Update: I've checked a couple of variations of this into yuv-neon.s, although i'm not using it in the released JJPlayer yet.

Mele vs Ainol Elf II

The Elf is much faster than the Mele at almost everything - particularly video decoding (which uses multiple threads), but pretty much everything else is faster too (Better memory? The Cortex-A9? The GPU?) and the dual cores mean it just works a lot better. Can't be good for the battery though.

(as an aside, someone who spoke english should've told the guys in China that "anal elf 2" is probably not a good name for a computer!)

But the code is written with multiple cores in mind - demux, decoding of video and audio, and presentation is all executed on separate threads. Having all of the cpu-bound tasks executed in a single thread may help on the Mele, although by how much I will only know if and when I do it ...

NEON yuv + scale

Well I still haven't checked the jjmpeg code in but I did end up playing with NEON yuv conversion yesterday, and a bit more today.

The YUV conversion alone for a 680x480 frame on the beagleboard-xm is about 4.3ms, which is ok enough. However with bi-linear scaling to 1024x600 as well it blows out somewhat to 28ms or so - which is definitely too slow.

Right now it's doing somewhat more work than it needs to - it's scaling two rows each time in X so it can feed into the Y scaling. Perhaps this could be reduced by about half (depending on the scaling going on), which might knock about 10ms off the processing time (assuming no funny cache interactions going on), which is still too slow to be useful. I'm a bit bored with it now and don't really feel like trying it out just yet.

Maybe the YUV only conversion might still be a win on Android though - if loading an RGB texture (or an RGB 565 one) is significantly faster than the 3x greyscale textures i'm using now. I need to run some benchmarks there to find out how fast each option is, although that will have to wait for another day.

yuv to rgb

The YUV conversion code is fairly straightforward in NEON, although I used 2.6 fixed-point for the scaling factors so I could multiply the 8 bit pixel values directly. I didn't check to see if it introduces too many errors to be practical mind you.

I got the constants and the maths from here.

@ pre-load constants

vmov.u8 d28,#90 @ 1.402 * 64

vmov.u8 d29,#113 @ 1.772 * 64

vmov.u8 d30,#22 @ 0.34414 * 64

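vmov.u8 d31,#46 @ 0.71414 * 64

vmov.u8 q3,#128 @ assumed, not in the original listing: q3 must hold 128 for the u/v bias subtraction below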
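The constants are just the floating-point coefficients scaled by 64 and rounded. A quick way to reproduce them, with an invented helper name:

```java
// Hypothetical helper for deriving the 2.6 fixed-point constants.
final class Yuv26Constants {
    /** Rounds a floating-point coefficient to fixed point with 6 fractional bits. */
    static int toFixed6(double c) {
        return (int) Math.round(c * 64.0);
    }
}
```

All four results stay below 128, which is what lets them be used as signed 8 bit operands in the multiplies.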

The main calculation is performed using 2.14 fixed-point signed mathematics, with the Y value being pre-scaled before accumulation. For simplification the code assumes YUV444 with a separate format conversion pass if required, and if executed per row should be cheap through L1 cache.

vld1.u8 { d0, d1 }, [r0]! @ y is 0-255

vld1.u8 { d2, d3 }, [r1]! @ u is to be -128-127

vld1.u8 { d4, d5 }, [r2]! @ v is to be -128-127

vshll.u8 q10,d0,#6 @ y * 64

vshll.u8 q11,d1,#6

vsub.s8 q1,q3 @ u -= 128

vsub.s8 q2,q3 @ v -= 128

vmull.s8 q12,d29,d2 @ u * 1.772

vmull.s8 q13,d29,d3

vmull.s8 q8,d28,d4 @ v * 1.402

vmull.s8 q9,d28,d5

vadd.s16 q12,q10 @ y + 1.772 * u

vadd.s16 q13,q11

vadd.s16 q8,q10 @ y + 1.402 * v

vadd.s16 q9,q11

vmlsl.s8 q10,d30,d2 @ y -= 0.34414 * u

vmlsl.s8 q11,d30,d3

vmlsl.s8 q10,d31,d4 @ y -= 0.71414 * v

vmlsl.s8 q11,d31,d5

And this neatly leaves the 16 RGB result values in order in q8-q13.

They still need to be clamped which is performed in the 2.14 fixed point scale (i.e. 16383 == 1.0):

vmov.u8 q0,#0

vmov.u16 q1,#16383

vmax.s16 q8,q0

vmax.s16 q9,q0

vmax.s16 q10,q0

vmax.s16 q11,q0

vmax.s16 q12,q0

vmax.s16 q13,q0

vmin.s16 q8,q1

vmin.s16 q9,q1

vmin.s16 q10,q1

vmin.s16 q11,q1

vmin.s16 q12,q1

vmin.s16 q13,q1

Then the fixed point values need to be scaled and converted back to byte:

vshrn.i16 d16,q8,#6

vshrn.i16 d17,q9,#6

vshrn.i16 d18,q10,#6

vshrn.i16 d19,q11,#6

vshrn.i16 d20,q12,#6

vshrn.i16 d21,q13,#6

And finally re-ordered into 3-byte RGB triplets and written to memory. vst3.u8 does this directly:

vst3.u8 { d16,d18,d20 },[r3]!

vst3.u8 { d17,d19,d21 },[r3]!

vst4.u8 could also be used to write out RGBx, or the planes kept separate if that is more useful.

Again, perhaps the 8x8 bit multiply is pushing it in terms of accuracy, although it's a fairly simple matter to use shorts instead. If shorts were used then perhaps the saturating doubling returning high half instructions could be used too, to avoid at least the input and output scaling.

Stop Press

As happens when one is writing this kind of thing I noticed that there is a saturating shift instruction - and as it supports signed input and unsigned output, it looks like it should allow me to remove the clamping code entirely if I read it correctly.

This leads to the following combined clamping and scaling stage:

vqshrun.s16 d16,q8,#6

vqshrun.s16 d17,q9,#6

vqshrun.s16 d18,q10,#6

vqshrun.s16 d19,q11,#6

vqshrun.s16 d20,q12,#6

vqshrun.s16 d21,q13,#6

Which appears to work on my small test case. This drops the test case execution time down to about 3.9ms.

And given that replacing the yuv2rgb step with a memcpy of the same data (all else being equal - i.e. yuv420p to yuv444 conversion) still takes over 3.7ms, that isn't too shabby at all.
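A scalar version of the same arithmetic is handy for checking the NEON output pixel by pixel. The following is a sketch of that idea in Java, not the test harness I actually used: the class and method names are made up, and it assumes the u/v bias is a plain subtraction of 128.

```java
// Scalar model of the 2.6 fixed-point conversion; names are hypothetical.
final class YuvRef {
    // Same values as the pre-loaded constants: coefficient * 64, rounded.
    static final int CRV = 90;   // 1.402
    static final int CBU = 113;  // 1.772
    static final int CGU = 22;   // 0.34414
    static final int CGV = 46;   // 0.71414

    /** Models vqshrun.s16 #6 on one lane: arithmetic shift right by 6, saturated to 0..255. */
    static int qshrun6(int v) {
        return Math.max(0, Math.min(255, v >> 6));
    }

    /** Converts one YUV444 pixel (each component 0..255) to packed 0xRRGGBB. */
    static int toRgb(int y, int u, int v) {
        int ys = y << 6;   // y * 64
        int us = u - 128;  // -128..127
        int vs = v - 128;
        int r = ys + CRV * vs;
        int g = ys - CGU * us - CGV * vs;
        int b = ys + CBU * us;
        return (qshrun6(r) << 16) | (qshrun6(g) << 8) | qshrun6(b);
    }
}
```

With these constants the intermediate sums stay within signed 16 bits for all 8 bit inputs, so only the final narrowing shift has to saturate.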

RGB 565

An alternative scaling & output stage (after the clamping) could produce RGB 565 directly (I haven't checked this code works yet):

vshl.i16 q8,#2 @ red in upper 8 bits

vshl.i16 q9,#2

vshl.i16 q10,#2 @ green in upper 8 bits

vshl.i16 q11,#2

vshl.i16 q12,#2 @ blue in upper 8 bits

vshl.i16 q13,#2

vsri.16 q8,q10,#5 @ insert green

vsri.16 q9,q11,#5

vsri.16 q8,q12,#11 @ insert blue

vsri.16 q9,q13,#11

vst1.u16 { d16,d17,d18,d19 },[r3]!

jjmpeg android video player work

Yesterday I was too lazy to get out of the house, so after getting over-bored I fired up netbeans and had a poke at the android video player JJPlayer again.

And now it's next year.

I tried a few buffering strategies, copying video frames, loading the decoded frames directly with multiple buffers (works only on some decoders), and synchronously loading each decoded frame into a texture as it is decoded. I previously had some code to load the texture on another GL context but I didn't see if it still worked (it was rather slow which is why i let it rot, but it's probably worth a re-visit).

Just copying the raw video frame seems to be the most reliable solution, even with its supposed overheads. Actually it didn't seem to make much difference to performance how I did it - they all ran with similar cpu time (according to top).

I've looked into doing the scaling/yuv/rgb565 conversion in NEON but haven't got any code up and running yet (one has to be a bit keen to get stuck into it). I doubt it will be quicker as this means I won't be using the GPU for this processing, but given how slow the texture loading is it might be a win and it will let me avoid redundant copies.

I also fixed some of the android behavioural stuff - pause/resume pauses/resumes the playback, it runs in full-screen with a hidden 'ui' (although for whatever reason, setting the slider to invisible doesn't always work, and never on the mele), and it now opens the video in another thread with a busy spinner.

Although it's playing back most SD sized videos ok on my (dual core) ainol tablet, it is struggling on the mele. Even when the mele can decode fast enough the timing is funny - jumping around (and oddly, the load average is well over 2 yet it decodes all frames fast enough). I guess the video decode was behind the audio so it was just displaying frames as fast as it decoded them rather than with per-frame timing. So I tried another timing mechanism: rather than just using an absolute clock from the first frame decoded, it is based on the audio playback position. This works quite a bit better and lets me add a tunable delay, although it isn't perfect. It falls down when you seek backwards, but otherwise it works reasonably well - and should be fixable. OTOH even with the busted timing the audio sync was consistent - unlike Dolphin Player which loses sync quite rapidly for whatever reason.
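The audio-based timing boils down to comparing each frame's timestamp against where the audio currently is. A minimal sketch of that comparison, with invented names and millisecond units assumed throughout; the drop test is an extra assumption of mine and not something the player does yet:

```java
// Hypothetical sketch of audio-clock video timing; not the JJPlayer code.
final class AudioClockSync {
    /**
     * How long to wait before showing a frame.
     * framePtsMs is the frame's presentation time, audioPosMs the current audio
     * playback position, and tuneMs the tunable delay added to the audio clock.
     * Zero or negative means the frame is due now or already late.
     */
    static long delayMs(long framePtsMs, long audioPosMs, long tuneMs) {
        return framePtsMs - (audioPosMs + tuneMs);
    }

    /** Assumed policy: skip display of a frame that is late by more than lateLimitMs. */
    static boolean shouldDrop(long delayMs, long lateLimitMs) {
        return delayMs < -lateLimitMs;
    }
}
```

Because the reference is the audio position rather than a wall clock started at the first frame, the video follows the audio wherever it goes.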

Unfortunately opening some videos seems to have become quite slow ... not sure what's going on there, whether it's just slow i/o or some ffmpeg tunable (I was poking around with some other stuff before getting back to jjplayer so i might've left something in). I don't know how to drop the decoding of video frames yet either - which would be useful. I think I need to add some debugging output to the display to see what's going on inside too, and eventually run it in the profiler again when I have most of a day free.

The code is still a bit of a pig's breakfast and I haven't checked it in yet. But i should get to it either today or soon after and will update the project news. I should probably release another package too - as the current one is too broken to be interesting.

In the not too distant future I will probably poke at a JavaFX version as well. If I don't find something better to do ... must really look at the Epiphany SDK sometime.

50K

Sometime in the next 12 hours this blog should breach the 50K pageview tally. So 'yay' for me. Anyway, I keep an eye on the stats just to see what's hot and what's not, and i'll review the recent trends.

So last time I did this the main posts hit were to do with performing an FFT in Java. The new king is 'Kobo Hacking'. I'm pretty sure this is mostly people just trying to hack the DRM or the adverts out, but there do seem to be a few individuals coding for the machine as well. And what little organisation there is seems to be centred on the mobileread forums, although it seems that, like me, it's mostly individuals working on their own for personal education and entertainment.

Another big source of pageviews is the JavaFX stuff (actually it's the biggest combined) - and this is only because they were added to the JavaFX home page (it wasn't something I asked for btw). I must admit i've done a couple of 'page hit' posts under the tag, but it's always just been stuff i've been playing with anyway.

The post about the Mele A2000 gets quite a few hits although i'm not sure what people are still looking up (probably wrt the allwinner A10 for hacking). The ARM android tv boxes keep leaping ahead in performance and specifications and it's already quite dated. I still use mine mainly for playing internet radio, and sometimes watching stuff recorded with MythTV (I normally use the original PS3, but I try to save some power when I remember to).

The Android programming posts get regular visits - although I think it's mostly people looking up stuff they should be able to find in the SDK. Last time I had to do some android code I didn't like it at all - the added api to work around earlier poor api design choices, the lack of documentation, and the frustration with the shitty lifecycle model more than made up for any 'cool' factor. I'd rather be coding JavaFX with real Java - actually the fact that Oracle have an android version internally but are trying to monetise it ... puts me off that idea a bit too. You can't really monetise languages and toolkits anymore, there's too much good free stuff (e.g. Rebol finally went free software, lest it wallow in obscurity forever).

jjmpeg also contributes a steady stream. Given how many downloads there have been i'm a bit, i dunno, bummed i guess, about how little correspondence has been entered into regarding the work. Free Software for most just seems to mean 'free to take'. Still, I made some progress with the Android player yesterday so it might actually become useful enough to me at some point to be worth working on.

The image processing and computer vision posts have picked up a bit in the last few months, although i'm sure most of the hits are people just looking for working solutions or finished homework, or how to use OpenCV. I hate OpenCV so don't ask me!

I get a good response to any NEON posts containing code - although I haven't put too many up at this point. That's always fun to work on because one only ever considers fairly small problems that can be solved in a few days, keeping it fresh.

I even get a few hits on the cooking posts although I wish the hot sauces had more interest - because I think they're pretty unique and very nice.

The future

So I mentioned earlier how Free Software just seems to mean "free to take" - i certainly have no idea who is using any of the code I put out apart from the Kobo touch software using the toolkit/backend from ReaderZ - which I only found out by accident. Although the code is out there for this very reason, it's a bit disappointing that there is almost zero feedback or any indication where the code is being used - and i'm sure for example that jjmpeg is being used somewhere.

Once you hit a certain point in developing something I find the fun goes out of it - problems get too big to handle casually, earlier design mistakes require big rewrites, and unless you're using the software in anger there's no real reason to work on it at all after the initial fun phase. A lot of the stuff I have sitting in public has hit that point for me and it's not like i'm using most of the applications I come up with (or would even if I finished them).

For me it's about the journey and not the destination. And particularly for anything I work on in my spare time it has to be fun or what's the point? I already get paid to work on software, I don't want to "work" on it for free as well.

It's just for fun

Not sure where i'm going here ... I guess I will amble along and keep doing what i'm doing, mucking about with whatever takes my fancy or is interesting at the time.

So probably not much will change then.

Copyright (C) 2019 Michael Zucchi, All Rights Reserved.
