About Me

Michael Zucchi

B.E. (Comp. Sys. Eng.)

also known as Zed

to his mates & enemies!

< notzed at gmail >

< fosstodon.org/@notzed >

threads, memory, database, service.

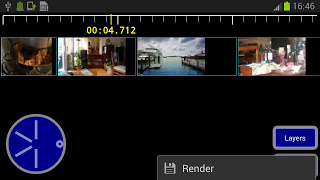

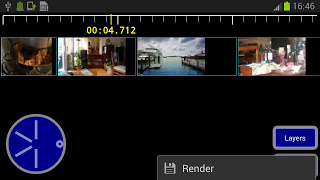

I hooked up the video generator to the jjjay interface last night and did some playing around on a phone form factor.

The video reading and composing were pretty slow, so I separated them into other threads and had them prepare frames in advance. With those changes, frames are generally ready in Bitmap form before they're needed, and 80% of the main thread's time is occupied by the output codec. This also allowed a better frame selection scheme, which will let me add some simple frame interpolation for timebase correction. The frame consumer keeps track of two frames at once, one on each side of the current timestamp. Currently it just chooses the lower-but-in-range one. The frame producer simply spits frames into a blocking queue from another thread. Before, I had some nasty pull logic and a nearby-frame cache, but that is the kind of dumb design decision one makes in design-as-you-go prototype code written at some funny hour of the night.
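The producer/consumer split described above can be sketched in plain Java. This is a minimal sketch under my own assumptions, not the jjjay code. `Frame`, `FrameProducer` and `FrameSelector` are hypothetical names, a `String` stands in for the decoded `Bitmap`, timestamps are in milliseconds, and end-of-stream is signalled with a sentinel frame. The bounded `ArrayBlockingQueue` is what stops the decoding thread from racing too far ahead.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;

// Hypothetical frame: a decoded image plus its presentation timestamp (ms).
final class Frame {
    final long pts;
    final Object image; // stands in for an android.graphics.Bitmap
    Frame(long pts, Object image) { this.pts = pts; this.image = image; }
}

final class FrameProducer {
    // Sentinel marking end-of-stream; its pts is later than any real request.
    static final Frame END = new Frame(Long.MAX_VALUE, null);

    // Decodes (here: fakes) frames on its own thread. put() blocks while the
    // queue is full, which bounds how many frames are buffered.
    static Thread start(BlockingQueue<Frame> queue, long[] timestamps) {
        Thread t = new Thread(() -> {
            try {
                for (long pts : timestamps) queue.put(new Frame(pts, "frame@" + pts));
                queue.put(END);
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        });
        t.setDaemon(true);
        t.start();
        return t;
    }
}

// Consumer side: keeps the two frames that bracket the requested time.
final class FrameSelector {
    private final BlockingQueue<Frame> queue;
    private Frame lower; // latest frame with pts <= requested time
    private Frame upper; // the frame after it (or END)

    FrameSelector(BlockingQueue<Frame> queue) { this.queue = queue; }

    // Requested times must not go backwards. Returns the lower-but-in-range
    // frame for time t, or null if t is before the first frame.
    Frame frameAt(long t) throws InterruptedException {
        while (upper == null || upper.pts <= t) {
            lower = upper;
            upper = queue.take(); // waits for the producer if it is behind
        }
        return (lower != null && lower.pts <= t) ? lower : null;
    }

    // Where t sits between the two bracketing frames (0..1), i.e. the blend
    // weight an interpolating version would use. Valid after frameAt(t).
    double fraction(long t) {
        if (lower == null || upper == FrameProducer.END) return 0.0;
        return (double) (t - lower.pts) / (upper.pts - lower.pts);
    }
}
```

Keeping both bracketing frames is what makes interpolation cheap to add later: only the choice of which frame to emit (or how to blend them) changes.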

I guess hardware encoding will be necessary to be practical at higher resolutions, but at SD resolution it isn't too bad. VGA @ 25fps encodes at around 1-2x realtime on a quad-core phone, depending mostly on the source material. I think that's liveable. 1280x720 x264 at 1Mb/s was about 1/4 realtime. I suppose I should investigate adding libx264 to the build too.

I cleaned up some of the frame copying and so on by copying the AVFrame directly to a Bitmap. It still needs some pretty slow software YUV conversion, but that's an issue for another day. Memory use exploded once I started decoding frames in other threads, which was puzzling because it should've been an improvement on the previous iteration, where all codecs were opened for all clips in the whole scene. But maybe I just didn't look at the numbers. When you do the sums, 5x HD RGBA frames add up pretty quickly, so I reduced the buffering.
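The sums in question are easy to do. Here is a tiny sketch, assuming 4 bytes per pixel (RGBA/ARGB_8888). The post doesn't say which "HD" was meant, so both 1280x720 and 1920x1080 are shown:

```java
final class FrameMemory {
    // Bytes for one uncompressed frame at 4 bytes per pixel.
    static long rgbaBytes(int width, int height) {
        return (long) width * height * 4;
    }

    // Bytes for a buffer of 'frames' such frames.
    static long bufferedBytes(int frames, int width, int height) {
        return frames * rgbaBytes(width, height);
    }
}
```

That is 18,432,000 bytes (about 17.6 MiB) for five 720p frames and 41,472,000 bytes (about 39.6 MiB) for five 1080p frames, before counting any decoder-side buffers.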

It was fun to finally get some output from the full interface, and as mentioned in the last post, it helps expose the usability issues. I had to play a bit with the interface to make it fit the phone better, but I'm still not particularly happy with the sequence editor: it's just hard to fit enough information on the screen, at a reasonable size, in a usable manner. I changed the database schema so that clips are global rather than per-project, and clip/video icons are now created and stored along with the data.

So with a bit more consolidation and a couple of essential features, it'll approach an alpha state. Things like the rendering need to be moved to a service as well. And I need to work out the build ... ugh.

At least I have worked out a (slightly hacked-up) way to use jjmpeg from another Android project without too much pain. It involves some copying of files, but softlinks would probably work too (jjmpeg only builds on a GNU system, so I don't think that's a big problem). Essentially, jjmpeg-core.jar is copied to the libs directory, and libjjmpegNNN.so is referenced as a prebuilt library in the project's own jni/Android.mk. If I created an Android library project for jjmpeg-core, this could mostly be automatic (apart from the make inside jni).

Incidentally, as part of this I've used the phone's camera to take some test shots, and I'm really pretty disappointed with the quality. Phones still have a long way to go in that department. I can forgive a $100 Chinese tablet and its front-facing camera for worn-out-VHS quality, but an $800 phone? Using adb logcat on this 'updated, western phone' is also very frustrating: it's full of debug spew from the system and bundled software, which makes it hard to filter out the useful stuff. Cyanogen on the tablet is far quieter.

Rounding to the nearest day, jjjay is about 5 days' work so far.

Attached Bitmaps

On an unrelated note, I was working on updating a bitmap from an algorithm in a background thread, and this caused lots of crashes. Given it's 3 KLOC of C and assembly, one generally suspects the C ... but it turned out just to be the greyscale-to-RGBA conversion code, which I had hacked up in Java for prototype purposes.

Android seems to do OpenGL stuff when you try to load the pixels on an attached Bitmap, and when that's done from another thread in Java, things get screwed up.

However ... it only seems to care if you do it from Java.

By changing the code to update the Bitmap from JNI instead, which involves a lock/unlock step around the pixel access (AndroidBitmap_lockPixels()/AndroidBitmap_unlockPixels() in the NDK), it all seems good.

Of course, as a side-effect, simply moving the greyscale-to-RGBA conversion to a C loop made it run about 10x faster too. Dalvik pretty much sucks for performance.
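For reference, the conversion itself is only a per-pixel expansion. The following is a hedged Java sketch of such a loop, not the original prototype code. It assumes the destination is an `ARGB_8888` Bitmap filled via `Bitmap.setPixels()`. Per the above, the version that actually worked (and ran about 10x faster) did the same thing in C under JNI.

```java
final class GreyToArgb {
    // Expands 8-bit greyscale samples into opaque ARGB_8888 colour ints,
    // the format Bitmap.setPixels() takes. Java bytes are signed, hence & 0xff.
    static void convert(byte[] grey, int[] argb, int count) {
        for (int i = 0; i < count; i++) {
            int g = grey[i] & 0xff;
            argb[i] = 0xff000000 | (g << 16) | (g << 8) | g;
        }
    }
}
```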

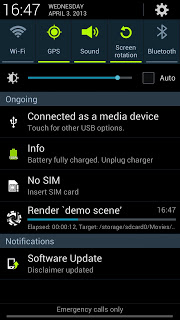

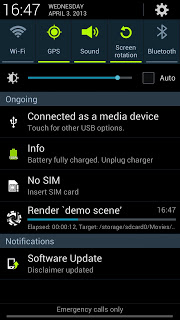

Update: Well, add a couple more hours to the development. I just moved the rendering task to a Service, which overall was easier than I remember Services being last time. I guess it helps when you have your own code to look at, and maybe after doing it enough times you learn what is unnecessary fluff. It took me too long to get the Intent-on-finished working (so you can play the resulting video): I wasn't writing the file to a public location.

I cleaned up the interface a bit and moved "render" to a menu item - it's not something you want to press accidentally.

So yeah, it does the rendering in a service, one job at a time via a thread pool, provides a notification thing with a progress bar, and once it's finished you can click on it to play the video. You know, all the mod-cons we've come to expect.
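The "one job at a time" part needs nothing more than a single-threaded executor, i.e. a pool of one. Below is a minimal sketch in plain Java. `RenderQueue` and its progress callback are hypothetical. On Android the callback would update the notification (e.g. via `Notification.Builder.setProgress()`), and the returned path would feed the "play video" Intent.

```java
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.function.IntConsumer;

final class RenderQueue {
    // One worker thread: submitted jobs queue up and run strictly in order.
    private final ExecutorService executor = Executors.newSingleThreadExecutor();

    // Hypothetical render job of totalFrames frames, reporting percent done.
    Future<String> submit(String output, int totalFrames, IntConsumer progress) {
        return executor.submit(() -> {
            int last = -1;
            for (int frame = 0; frame < totalFrames; frame++) {
                // ... decode, composite and encode one frame here ...
                int percent = (frame + 1) * 100 / totalFrames;
                if (percent != last) { // only notify when the number changes
                    progress.accept(percent);
                    last = percent;
                }
            }
            return output; // where the finished video was written
        });
    }

    void shutdown() { executor.shutdown(); }
}
```

Throttling the callback to whole-percent changes matters on Android, where posting a notification update for every frame would be wasteful.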

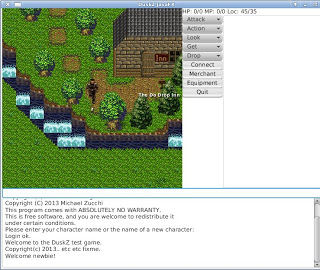

Animated tiles & bigger sprites

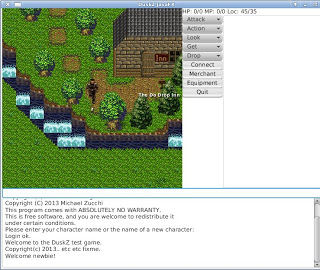

I haven't really had much energy left to play with DuskZ for a while (with work and the Android video editor prototype), but after some prompting from the graphics guy I thought I'd have a go at some low-hanging fruit. One thing I've noticed with my customer at work is that if they casually mention something more than twice, they're more than casually interested in it ...

Mostly based on this code idea I hacked up a very simple prototype of adding animated tiles to Dusk.

One issue I thought I might have was keeping a global sync, so that moving (which currently re-renders all the tiles) doesn't reset the animation. Solution? Just leave one animator running and change the set of ImageViews it works with, instead of creating a new animator every time.

i.e. piece of piss.

The graphics artist also thought that scaling the player sprites might be a bit of work, but that was just a 3-line change: delete the code that scaled the ImageView, and adjust the origin to suit the new size.

Obviously one cannot see the animation here, but it's flipping between the base water tile and the 'waterfall' tile, in a rather annoying way reminiscent of Geocities in the days of the blink tag, although it's reliably keeping regular time. And z suddenly got a lot bigger. To do a better demo I would need to render with a separate water layer and have a better water animation.

This version of the animator class needs to be instantiated for each type of animated tile, e.g. one for water, one for fire, or whatever, but then it updates all the visible tiles at once.

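```java
import java.util.List;

import javafx.animation.Interpolator;
import javafx.animation.Transition;
import javafx.geometry.Rectangle2D;
import javafx.scene.image.ImageView;
import javafx.util.Duration;

public class TileAnimator extends Transition {

    // One viewport (sub-rectangle of the tile sheet) per animation frame.
    Rectangle2D[] viewports;
    // All currently visible tiles of this type.
    List<ImageView> nodes;

    public TileAnimator(List<ImageView> nodes, Duration duration, Rectangle2D[] images) {
        setCycleDuration(duration);
        // Transition defaults to EASE_BOTH; linear keeps frames evenly spaced.
        setInterpolator(Interpolator.LINEAR);
        this.viewports = images;
        this.nodes = nodes;
    }

    // Swap the animated tiles without restarting, so the animation stays in sync.
    public void setNodes(List<ImageView> nodes) {
        this.nodes = nodes;
    }

    @Override
    protected void interpolate(double d) {
        // d runs 0..1 over one cycle; map it to a frame index (d == 1 -> last frame).
        int index = Math.min(viewports.length - 1, (int) (d * viewports.length));
        for (ImageView node : nodes)
            node.setViewport(viewports[index]);
    }
}
```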

JavaFX really does most of the work, all the animator is doing is changing the texture coordinates around. The hardest part, if you can call it that, will be defining and communicating the animation meta-data to the client code.

A bit more on jjj

I spent a few spare hours yesterday and today poking at some of the other basic functions needed for an Android video editor: first a list of icons that loads asynchronously, and then some database stuff.

The asynchronous loading with caching was more involved than I'd hoped, but I have it (mostly) working. Unfortunately Android calls getView(0) an awful lot, and often on a view which isn't actually used to show the content, so trying to match views to pending requests doesn't always work (for view 0 only).

I've done something like this before using a thread and a request-handling queue, but this time I tried just using a thread pool and futures. I tested it by loading individually offset frames from an mp4 file (i.e. it's pretty slow), and the caching works fairly well: it avoids starting to process frames you've scrolled away from, so the screen refreshes relatively efficiently. The GUI remains smooth and responsive. Apart from the item-0 problem it would be perfectly adequate. And in practice the images would just be loaded from a pre-recorded jpeg, so most of the loading latency would vanish.
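Here is a hedged sketch of the thread-pool-and-futures approach. The in-flight `Future` is tracked per recycled view. When `getView()` reuses that view for another position, the stale request is cancelled with `cancel(false)`, so it never starts if it is still queued. `AsyncLoader` and its methods are hypothetical names, and on Android the completion callback would have to be posted back to the UI thread. Keying requests by view is also exactly what the item-0 problem above trips up.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.TimeUnit;
import java.util.function.Consumer;
import java.util.function.Function;

// Loads values on a thread pool, remembering the pending Future per view so a
// recycled view cancels the request it no longer needs.
final class AsyncLoader<K, V> {
    private final ExecutorService pool;
    private final Function<K, V> loader;
    private final Map<Object, Future<?>> pending = new HashMap<>();

    AsyncLoader(int threads, Function<K, V> loader) {
        this.pool = Executors.newFixedThreadPool(threads);
        this.loader = loader;
    }

    // Call from getView(): 'view' is the (possibly recycled) row view,
    // 'key' identifies what it should show now.
    synchronized void request(Object view, K key, Consumer<V> onLoaded) {
        Future<?> stale = pending.remove(view);
        if (stale != null)
            stale.cancel(false); // a still-queued task will now never run
        pending.put(view, pool.submit(() -> onLoaded.accept(loader.apply(key))));
    }

    void shutdownAndWait() throws InterruptedException {
        pool.shutdown();
        pool.awaitTermination(10, TimeUnit.SECONDS);
    }
}
```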

After that I came up with a fairly reusable class which lets me add 'async image loading and caching' to any list or gridview, which is a necessity on Android to save on memory requirements. I used it to create a graphical clip selector in only a few lines of code.
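The caching half can be as simple as an access-ordered `LinkedHashMap`. What follows is a minimal sketch. Android also ships `android.util.LruCache`, which does the same job with size-based accounting. This version is not thread-safe, so access from loader threads would need synchronising.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Evicts the least-recently-used entry once more than 'capacity' are held.
final class LruCache<K, V> extends LinkedHashMap<K, V> {
    private final int capacity;

    LruCache(int capacity) {
        super(16, 0.75f, true); // true = iterate in access order, not insertion order
        this.capacity = capacity;
    }

    @Override
    protected boolean removeEldestEntry(Map.Entry<K, V> eldest) {
        return size() > capacity;
    }
}
```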

And today I filled out another big chunk: creating a database to store everything. I tried to keep it as simple as possible, but it's still fairly involved. I was thinking of using Lucene just as an index, but then decided I really did want the referential integrity guaranteed by Berkeley DB JE, so I used that instead. It's got a really great API for working with POJOs, and it's pretty simple to set up complex relational databases using annotations. The only real drawback of using it is that if you need complex joins and so on, you have to code them by hand: but I don't need them here, and besides, the complex joinery in SQL often means you have to write messy code to handle it anyway. It's not something I miss having to deal with.
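To illustrate what "coding joins by hand" means, here is a plain-Java sketch. In it, `HashMap`s stand in for a primary index (id to entity) and a secondary index (foreign key to ids), so it runs without JE. In JE's Direct Persistence Layer the foreign key would instead be declared with something like `@SecondaryKey(relate = Relationship.MANY_TO_ONE, relatedEntity = VideoFile.class)` and enforced by the store. The entity names and fields are hypothetical, loosely modelled on the clip and video-file tables this post describes.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical entities: a clip is a region of a video file, referenced by id.
record VideoFile(long id, String path) {}
record Clip(long id, long videoId, long startMs, long endMs) {}

final class ClipStore {
    // Stand-ins for a primary index (id -> entity) and a secondary index
    // on Clip.videoId (video id -> clip ids).
    private final Map<Long, VideoFile> videos = new HashMap<>();
    private final Map<Long, Clip> clips = new HashMap<>();
    private final Map<Long, List<Long>> clipsByVideo = new HashMap<>();

    void putVideo(VideoFile v) { videos.put(v.id(), v); }

    void putClip(Clip c) {
        // The referential-integrity check the database would do for us.
        if (!videos.containsKey(c.videoId()))
            throw new IllegalArgumentException("no such video: " + c.videoId());
        clips.put(c.id(), c);
        clipsByVideo.computeIfAbsent(c.videoId(), k -> new ArrayList<>()).add(c.id());
    }

    // A hand-coded "join": walk the secondary index, then fetch each clip
    // from the primary index.
    List<Clip> clipsOf(long videoId) {
        List<Clip> out = new ArrayList<>();
        for (long clipId : clipsByVideo.getOrDefault(videoId, List.of()))
            out.add(clips.get(clipId));
        return out;
    }
}
```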

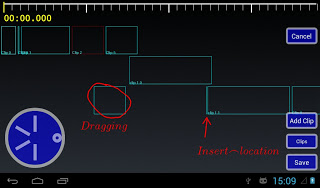

Then I further hacked up the hackish sequence editor to fit in with the DB backend. Actually I hacked up quite a bit of glue to tie together all the GUI work I have done so far:

- Projects

  The main window is a project window, from which you browse the projects and create another one. Clicking on a project jumps straight into the scene editor. I'm using an AsyncTaskLoader to open the database, which can take a second or so.

  A project will have a collection of scenes and probably an output format. Initially, though, I am only implementing a single scene, as otherwise it seems to add too much complication to the interface.

- Scene

  This is currently the sequence editor as mentioned in the last post. Tracks/layers can be edited using drag and drop. Clicking on "add clip" brings up the Clip manager, and after a clip is selected it is added to the scene. Changes are persisted to the database as they occur.

- Clip manager

  This brings up a list of clips which have been defined for the given project. Clicking on a clip just selects it and returns it to the caller (i.e. the Scene). There is also an "add" menu item which brings up a file requester (using the standard Android "get content" Intent) that lets you choose a video file. Once one is selected, it jumps to the Clip editor.

  The database tracks the video files used in a separate table, but I'm not sure adding a separate GUI for that is terribly useful; it would just be clutter really. But the table will be used if I want to transcode videos into a quick-editing format.

- Clip editor

  And this is the first interface I mentioned a couple of posts ago. It lets one mark a single region within a video file and then save it as a clip. The clip is then saved back into the Clip manager.

It's surprising how much junk you have to write to get anything going. It's not like a command-line programme where you can just add another switch in a couple of lines of code: you need a whole new activity, layout, intent management, response handling, blah blah etc etc.

So I guess another two half-days takes the total effort to about 4 days, although I should probably round it up to 5 to be a bit more conservative. A few hours more to hook in the prototype renderer and I would have a basic video editor done: not bad for a weekend hack, even if it was a particularly super-long weekend ;-). There are of course some fairly important features missing, such as transitions and animations and so forth.

But having it at this level of integration also begins to show up all the usability issues and edge-cases I've ignored until now. It is also where one can investigate alternatives fairly cheaply: e.g. does it really work having clips per-project, or should they be a globally available resource library? This is what prototypes are for, after all ...

It's also starting to approach the point where hacking for entertainment starts to turn south: maintaining and fixing vs exploring and creating.

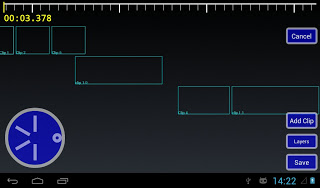

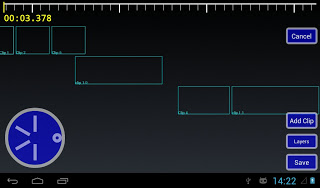

jjj sequence editor

Sometimes I hate it when I have ideas in my head and they just won't go. Last night I was getting bored with TV and kept thinking about a sequence editor (the most complex single interface component I'm after), so I fired up the computer and hacked from 11pm till 3am or so. The less sleep I get, the more "anxious to get shit done" I seem to be, until I fall apart.

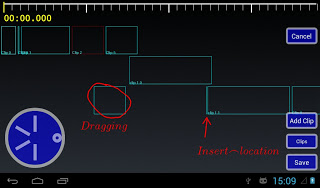

So although it's not much to look at yet - and the code behind it is even worse - last night together with a few hours today I came up with the following interface and all the guts to make it work.

I'm not sure if I need the jog-wheel there, as the functionality is provided by the scale too, but it lets one move the timeline forwards/backwards. I also doubt I need to support any number of layers, but I suppose I may as well: once you have more than one, any number isn't much harder.

The scale at the top can be dragged like a scroll-bar, and pressing two fingers on it zooms in and out.
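The zoom gesture boils down to keeping the time under the fingers fixed while changing the scale. Below is a minimal sketch with a hypothetical `TimeScale` model. On Android the focus point and factor would come from `ScaleGestureDetector` (`getFocusX()`, `getScaleFactor()`). The scroll direction is an assumption.

```java
// Hypothetical timeline scale: the time at the left edge, and the zoom level.
final class TimeScale {
    double startMs;    // time shown at x = 0
    double msPerPixel; // zoom: larger means more time on screen

    TimeScale(double startMs, double msPerPixel) {
        this.startMs = startMs;
        this.msPerPixel = msPerPixel;
    }

    double timeAt(double x) { return startMs + x * msPerPixel; }

    // One-finger drag by dx pixels (sign convention is an assumption).
    void scroll(double dx) { startMs -= dx * msPerPixel; }

    // Two-finger pinch: rescale about focusX so the time under the fingers
    // stays put. factor > 1 zooms in.
    void pinch(double focusX, double factor) {
        double anchor = timeAt(focusX);
        msPerPixel /= factor;
        startMs = anchor - focusX * msPerPixel;
    }
}
```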

The main sequence interface is modal, in that you are either working with 'layers' (or 'tracks'?) or clips. The "Layers" button is a toggle which switches modes, although I think I need a better interface design for that.

I don't have animations (I'm kind of up in the air on them, as most of the time they just piss me off with their added delay), but the clips can be dragged and dropped around the sequence in fairly obvious ways. For example, dragging a clip creates a layer-locked box which follows your finger, and a | indicator showing where it can be inserted. Letting go moves the clip to the new location, creating new layers as required.

Layers work similarly, except layers can be re-arranged, and the start position for the layer can be altered. All clips within a layer always run one after another. Doing things like moving the first clip causes the layer origin to be moved so the rest of the content isn't changed and so on, so it kind of 'just works' like you'd expect.
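The layer rules above fit in a very small model. Here is a hedged sketch, not the editor's code. `Layer` is hypothetical, and each clip is reduced to its duration, since clips in a layer always run back-to-back from the layer origin. A drop lands before the first clip whose midpoint is past the finger. Removing the first clip advances the origin so everything else keeps its absolute time.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical layer: clips play back-to-back starting at originMs.
final class Layer {
    long originMs;
    final List<Long> durations = new ArrayList<>();

    Layer(long originMs) { this.originMs = originMs; }

    // Absolute start time of the clip at 'index'.
    long startOf(int index) {
        long t = originMs;
        for (int i = 0; i < index; i++) t += durations.get(i);
        return t;
    }

    // Where a clip dropped at time t goes: before the first clip whose
    // midpoint is after t, otherwise at the end.
    int insertionIndex(long t) {
        long start = originMs;
        for (int i = 0; i < durations.size(); i++) {
            long d = durations.get(i);
            if (t < start + d / 2) return i;
            start += d;
        }
        return durations.size();
    }

    // Removing the first clip moves the origin forward, so the remaining
    // clips stay at the same absolute times.
    long remove(int index) {
        long d = durations.remove(index);
        if (index == 0) originMs += d;
        return d;
    }
}
```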

I have a basic single-item selection mechanism too, for deleting or other item-sensitive operations (e.g. where does 'add clip' add?).

So although the code is a complete pig's breakfast, it is quite functional and stable, and with a bit of styling and frame graphics it's almost feature complete. Probably the last major functionality missing would be some start/end 'snapping', but that's more of a nice-to-have.

Compositor too

Yesterday afternoon, when I was supposed to be relaxing, I worked on a prototype of a compositing and video creation engine. Initially I tried to use the ImageZ approach of compositing in floats, row-by-row, but Android's Java just isn't fast enough to make that practical. So I fell back to just using Android's Canvas interface as the compositor. I guess it makes more sense anyway, as it gives me a lot of functionality for free. I could get more performance by bypassing it, but it would be a great deal of work. Obviously, I didn't attempt audio.
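For comparison, compositing in floats row-by-row means running something like the Porter-Duff "over" operator across every pixel of every layer. This is a generic sketch on premultiplied RGBA floats, not ImageZ's code. `Canvas.drawBitmap()` with a default `Paint` does the equivalent `SRC_OVER` blend natively.

```java
final class RowCompositor {
    // Porter-Duff "over" on one row of premultiplied RGBA floats (4 per pixel):
    // dst = src + dst * (1 - srcAlpha), applied to all four channels.
    static void over(float[] src, float[] dst, int pixels) {
        for (int i = 0; i < pixels * 4; i += 4) {
            float inv = 1.0f - src[i + 3];
            dst[i]     = src[i]     + dst[i]     * inv;
            dst[i + 1] = src[i + 1] + dst[i + 1] * inv;
            dst[i + 2] = src[i + 2] + dst[i + 2] * inv;
            dst[i + 3] = src[i + 3] + dst[i + 3] * inv;
        }
    }
}
```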

Initially I just wanted to see how performance was, but I ended up adding video and text "sources", which can be mixed and matched together. Performance is ... well, it's ok; it's just a mobile platform with a purely CPU pipeline. A 10-second 800x600@25fps MP4 medium-quality clip takes about 20 seconds to decode+render+encode on the Ainol Elf 2. For the compositor, about half the time is spent decoding the source material and half compositing, which isn't exactly flash, but it's all ByteBuffers and Bitmaps and copying shit around multiple times, so it's hardly surprising.

As usual the ideas in my head exploded yesterday and I thought about all sorts of things: ways to create an interesting and usable interface, keyframe animation of clips and properties, multiple 'use modes' such as a simple video cutter, potential local client/server operation, using your PC to do the rendering, or using the tablet as an interactive control surface for a PC-based application. But whether I'll have the time and motivation to see any of it through is another matter.

If I remember to, I'm going to try to track how much time I've spent on this project; so far it's about 3 days. I initially thought I could do a quick-and-dirty "weekend hack", see how far I got and just leave it at that, and then thought better of it. It's a 5-day long weekend for me, so that's too much like hard work!

jj .. jay?

Time to drop another "prototype-bomb" on the unsuspecting world ..

Ultimate goal is some sort of simple-to-use video editor, but to begin with there are a lot of user interface and algorithmic problems to work out. I tried to find some simple editors in the android store but they pretty much sucked - based on server processing, questionable advertising, difficult and confusing to use, and usually quite slow.

However, a few hours hacking so far and I have a fully functioning "clip" editor, which is one of the basic components required.

I've actually been meaning to play with something like this for quite a long time, and finally the planets aligned such that it was an opportune time to look into it (nothing to do with any internet goings-on). I will probably also dabble in a JavaFX version if I get anywhere with it, although the interface requirements there are very different.

jjmpeg android hardware decoding

I somewhat embarrassingly just discovered that FFmpeg already has some support for using libstagefright for hardware video decoding on Android.

I always thought this was the place it should go, since mucking around with OMX or other junk is just such a pain, and it belongs there.

Bit of a headfuck getting it to compile, particularly since this adds a C++ dependency, and then after all that ... it just crashes.

```
I/ffmpeg (10484): Assertion i < avci->buffer_count failed at /home/notzed/svn/jjmpeg-1.0/jjmpeg-core/jni/ffmpeg-1.0/libavcodec/utils.c:603
F/libc (10484): Fatal signal 11 (SIGSEGV) at 0xdeadbaad (code=1), thread 10607 (VideoDecoder)
I/DEBUG ( 1894): *** *** *** *** *** *** *** *** *** *** *** *** *** *** *** ***
I/DEBUG ( 1894): Build fingerprint: 'samsung/m0zs/m0:4.1.2/JZO54K/I9300ZSEMB1:user/release-keys'
I/DEBUG ( 1894): pid: 10484, tid: 10607, name: VideoDecoder >>> au.notzed.jjmpeg <<<
I/DEBUG ( 1894): signal 11 (SIGSEGV), code 1 (SEGV_MAPERR), fault addr deadbaad
I/DEBUG ( 1894): r0 00000027 r1 deadbaad r2 40126b0c r3 00000000
I/DEBUG ( 1894): r4 00000000 r5 620f2a04 r6 60bfcc00 r7 61d0b8b0
I/DEBUG ( 1894): r8 5b127c80 r9 61bd5718 sl 000104d5 fp 61bd5718
I/DEBUG ( 1894): ip 5dc4bcbc sp 620f2a00 lr 400f8c65 pc 400f52fe cpsr 60000030
I/DEBUG ( 1894): d0 0000000200000000 d1 4b0000004b000021
I/DEBUG ( 1894): d2 000000094b000021 d3 0000000000000000
I/DEBUG ( 1894): d4 3ce5999061da5385 d5 3f34e1653f349a33
I/DEBUG ( 1894): d6 3f35287b3f356f75 d7 0106999ec0000000
I/DEBUG ( 1894): d8 0000000000000000 d9 0000000000000000
I/DEBUG ( 1894): d10 0000000000000000 d11 0000000000000000
I/DEBUG ( 1894): d12 0000000000000000 d13 0000000000000000
I/DEBUG ( 1894): d14 0000000000000000 d15 0000000000000000
I/DEBUG ( 1894): d16 8000000000000000 d17 ffffffffffffffff
I/DEBUG ( 1894): d18 416347d4c0000000 d19 3fe0000000000000
I/DEBUG ( 1894): d20 3fe0000000000870 d21 0000000000000000
I/DEBUG ( 1894): d22 0000000000000000 d23 0000000000000000
I/DEBUG ( 1894): d24 0000000000000000 d25 0000000000000000
I/DEBUG ( 1894): d26 0000000000000000 d27 0000000000000000
I/DEBUG ( 1894): d28 3f3504f3bf3504f3 d29 bf3504f33f3504f3
I/DEBUG ( 1894): d30 0000000000000000 d31 3f3504f33f3504f3
I/DEBUG ( 1894): scr 20000010
```

So yeah, I dunno. Perhaps it is a bug in jjplayer, but I tried removing all output handling and it still just crashes inside codec->decode(), and the API up to that point is so simple I don't think I can screw it up.

I've wasted half a day on this and it's losing its interest very fast ...

Update: So, just when I was about to give up, I found a threading bug in the codec implementation. This at least lets it decode more frames but it's still crashing later on inside the GLES library.

I haven't got the NV21 image format working properly yet, so maybe it's related to that.
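For the record, NV21 is a full-resolution Y plane followed by a half-resolution plane of interleaved V,U byte pairs, one pair per 2x2 block. Below is a plain-Java sketch of the conversion to ARGB. It assumes even dimensions and BT.601 "video range" input, using the common integer coefficients scaled by 1024. Whether a given hardware decoder's output actually matches this layout and range is an assumption to check.

```java
final class Nv21 {
    // Converts an NV21 frame (width*height Y bytes, then width*height/2 bytes
    // of interleaved V,U) to opaque ARGB ints. Width and height must be even.
    static void toArgb(byte[] nv21, int width, int height, int[] argb) {
        int frameSize = width * height;
        for (int j = 0; j < height; j++) {
            int uvRow = frameSize + (j >> 1) * width; // one chroma row per two luma rows
            for (int i = 0; i < width; i++) {
                int y = Math.max((nv21[j * width + i] & 0xff) - 16, 0);
                int uv = uvRow + (i & ~1);            // V comes first in NV21
                int v = (nv21[uv] & 0xff) - 128;
                int u = (nv21[uv + 1] & 0xff) - 128;
                int y1192 = 1192 * y;                 // 1.164 * 1024
                int r = clamp(y1192 + 1634 * v);
                int g = clamp(y1192 - 833 * v - 400 * u);
                int b = clamp(y1192 + 2066 * u);
                argb[j * width + i] = 0xff000000 | (r << 16) | (g << 8) | b;
            }
        }
    }

    // Drop the x1024 fixed-point scale and clamp to 0..255.
    private static int clamp(int x) {
        return Math.min(Math.max(x >> 10, 0), 255);
    }
}
```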

Update: Well, that was pretty much a full day down the drain. I submitted a patch to the ffmpeg-devel mailing list (yay, subscribe to a high-volume list of zero interest to me just to submit a 10-line patch).

It turned out that the file I was testing against refuses to play at all in the bundled players, so it's something to do with the hardware/firmware. At best they load the first frame then abort, although they don't segfault (probably the parser/demux stops it going as far as the codec).

On a video recorded from the camera it seems to work ok, although it certainly doesn't appear particularly smooth - I would need to do some timings to see what's going on. I haven't written the NV21 support either. And the build stuff needs cleaning up and parameterising before I can check it in.

If it's still going to be this useless, I'm not sure I really care, to be honest. And I've had more than enough for today, so whatever I decide will have to wait.

duckduckgo

I've decided that I'll be giving DuckDuckGo a 'go' for a while for my search.

I've been an extremely heavy user of Google's search for many years, and it is an indispensable tool for saving time. But I'm getting sick of it trying to be 'smarter than me': I pretty much have to manually tell it to do a verbatim search every fucking time I search for anything technical (i.e. almost every search), which makes every new search that bit more tedious and frustrating than it already is. And given their recent spate of prunings, who knows how long even that will last.

And just in the last couple of days they seem to have fucked around with it even more: I get 4-5 results and then a 'similar searches' section, which I do not find useful whatsoever. Sadly, I often find searches I do pointing back to my own posts or code, which indicates to me that perhaps I really am a beautiful and unique snowflake after all, and consequently knowing what is "popular" isn't terribly useful to me.

Targeted search based on seeker popularity by default really seems a pretty strange feature, unless you're writing a search engine for pop-culture or a shop (insight: oh hang on, that must be exactly what they are doing! silly me). Searches should first be for facts, not for reinforcing currently popular interests or fashionable trends. I already felt extremely uncomfortable with the fact that Google search was customising results at all, but this is so much worse. If you went to a researcher and asked for detail on a topic, would you want them to take into account both your appearance and other customers' desires when they fulfilled the request, or would you prefer an unbiased, objective collection of all available information?

I guess those of us working in niche fields or with niche interests are going to have to get used to the fact that we are simply not enough of a product-base for Google to care about (remembering we are the product). They don't really care if they lose us, because their customers don't really care if Google loses us. Based on "The story so far", I think we can expect to see more of a mainstream/pop/fashion-based focus in their products, which is a very sad turn of events. With the closing down of various networked services, we're also going to start to lose trust in their long-term commitment to some of them. Surely Blogger will not live outside of Google+ for much longer (tbh, I'm not sure I care, so long as Google+ is linkable and crawlable by any other search engine), and what about Google Code?

I also finally worked out one major reason why Firefox was constantly using a good chunk of CPU time: apart from adverts and other annoyingly pointless crap, the main culprit was simply the Google search results page. Which I find quite baffling, because there are no visually moving parts whatsoever. I really wish Firefox would simply disable JavaScript on tabs you're not currently looking at, because all it does is let crappy web page coders make Firefox look like a bloated piece of shit, even if it isn't really.

DuckDuckGo is certainly more concise, perhaps overly so to the point of missing relevant information, so it may not fulfil my requirements. A search for jjmpeg only finds 1 post from this blog, for instance, and I often prefer to see multiple in-site references (perhaps there is an option for that: at this point I've used it only a few times). But I'll see how it goes; my search needs vary depending on what I'm doing. I just wish it paged the results using numbers, mind you. I'm using the HTML version, since the infinitely scrolling "Web 2.1.x(tm)" page completely sucks, as they all do.

Bummed out, or am I?

This week I've been experimenting with the performance of some NEON code. It is from an algorithm which was developed in OpenCL for desktop GPUs and then downscaled to fit on a beagleboard (only for development purposes). The overall algorithm is identical but the way some of the steps are implemented is different (and for some significant components much less computationally and bandwidth intensive).

The OpenCL code took many months to develop, although that included dead-ends, multiple steps of refinement, and other distractions including completely unrelated work. Even allowing for that, I'd put the effort at around 4-10x that of the NEON code.

The NEON code took a few weeks. It obviously helped immensely that the algorithm was primarily known in advance although the downscaling alterations were not. On the other hand, my total experience with NEON is far less than OpenCL and certainly C or Java in terms of hours.

One reason the NEON code was much easier to write is that, because it is so cheap to invoke, one can just concentrate on the bottlenecks and leave the housekeeping to C. e.g. I can write a routine that processes as little as 16x16 pixels in assembly, and leave the addressing crap to C. There is also no marshalling or other API binding to worry about: the C is plain C and the assembly is plain assembly, whereas even though JOCL is far, far better than using the OpenCL C API directly, it's still quite a bit of work. As much fun as OpenCL is, it's even more fun hacking NEON because you can concentrate on the fun bits even more.
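The division of labour described here, a dumb fixed-size kernel plus housekeeping code that feeds it, looks roughly like the following. In this hedged sketch Java stands in for both halves. `blockKernel` plays the role of the hand-written 16x16 assembly routine (here it just sums pixels), and `sum` plays the C side, walking the image and handling the ragged edges the kernel never has to think about. All names are hypothetical.

```java
final class Tiled {
    static final int B = 16;

    // "Assembly" side: only ever sees a full BxB block at offset/stride,
    // so it needs no bounds or edge logic at all.
    static long blockKernel(byte[] img, int offset, int stride) {
        long sum = 0;
        for (int y = 0; y < B; y++)
            for (int x = 0; x < B; x++)
                sum += img[offset + y * stride + x] & 0xff;
        return sum;
    }

    // "C" side: addressing and housekeeping. Full blocks go to the kernel,
    // leftover columns and rows are handled with a plain scalar loop.
    static long sum(byte[] img, int width, int height) {
        int fullW = width - width % B, fullH = height - height % B;
        long total = 0;
        for (int by = 0; by < fullH; by += B)
            for (int bx = 0; bx < fullW; bx += B)
                total += blockKernel(img, by * width + bx, width);
        for (int y = 0; y < height; y++)      // right-hand strip, all rows
            for (int x = fullW; x < width; x++)
                total += img[y * width + x] & 0xff;
        for (int y = fullH; y < height; y++)  // bottom strip, excluding the corner
            for (int x = 0; x < fullW; x++)
                total += img[y * width + x] & 0xff;
        return total;
    }
}
```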

Although the OpenCL model is also based on simple kernels which should equally be simple and isolated, it isn't really quite like that in practice. All but the simplest of kernels end up turning into 64-way parallel subroutines utilising LDS, barriers, and so on. Without that you end up leaving scads of performance on the floor, so it really is necessary. Not to mention all the marshalling and boilerplate in the host code to communicate with it. And because of the marshalling and invocation latencies, pretty much everything is forced onto the GPU.

So what's the point i'm getting at?

Well after all that, the projected performance on the previously-latest-version of a popular handset is only about 5x slower than a HD7970 on a pretty beefy desktop!

Yes, that 5x speedup from the GPU is important enough to be worth it and opens it up to more applications, but on a personal level I'm just totally bummed it isn't much more. It's a highly parallel and bandwidth-intensive workload which should be well-suited to a GPU. Obviously OpenCL has the advantage that it isn't tied to a single bit of hardware. It's a pity SSE sucks so much, otherwise it would be interesting to see how a desktop CPU fared on its own.

I plan to "back port" the algorithms so it can be improved on the GPU, but I have a fairly educated feeling that another 2000% performance isn't very likely. I will also need to use some AMD proprietary extensions, so the portability will suffer too.

I'm sure I can improve it, but I just take it as a big personal slap in the face for all the effort that's gone into it so far!

Of course, the alternative view is that ARM+NEON is the bee's knees: with less effort I'm getting relatively great performance. But we all knew that, so it isn't such a revelation ...

The main bottleneck on ARM CPUs at the moment is the memory, and if you can utilise the cache effectively it really flies. I would really like to see how a BeagleBoard-like machine with a big.LITTLE A15/A7 quad core and much faster memory would fare; all these cheap Android dongles are far too constrained by their form-factor.

Update: Well, I might need to eat my words here. Today I started to look at GPU optimisations based on what I'd learnt from the ARM experience, trying to reduce the bottlenecks of the GPU code.

The key word of the day: batching.

One reason I wasn't previously batching the processing is because it didn't really fit the data-flow of an earlier application. But I have now achieved something like a 50x boost in one key algorithm by a combination of batching the work more aggressively and some other significant algorithmic changes.
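A rough cost model shows why batching matters so much on a GPU. Assume (my assumption, not something measured here) a fixed overhead t_call per kernel invocation, covering launch latency and marshalling, and a per-item cost t_work. Processing n items with one call each, versus one batched call, gives:

```latex
T_{\mathrm{unbatched}} = n\,(t_{\mathrm{call}} + t_{\mathrm{work}}), \qquad
T_{\mathrm{batched}} = t_{\mathrm{call}} + n\,t_{\mathrm{work}}

\frac{T_{\mathrm{unbatched}}}{T_{\mathrm{batched}}}
  = \frac{n\,(t_{\mathrm{call}} + t_{\mathrm{work}})}{t_{\mathrm{call}} + n\,t_{\mathrm{work}}}
  \;\longrightarrow\; 1 + \frac{t_{\mathrm{call}}}{t_{\mathrm{work}}}
  \quad (n \to \infty)
```

So batching alone pays off in proportion to how badly the per-call overhead dwarfs the per-item work. The model says nothing about the algorithmic changes that also went into the 50x.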

This is more like it. No longer bummed out ...

Copyright (C) 2019 Michael Zucchi, All Rights Reserved.

Powered by gcc & me!