About Me

Michael Zucchi

B.E. (Comp. Sys. Eng.)

also known as Zed

to his mates & enemies!

< notzed at gmail >

< fosstodon.org/@notzed >

parallel batch sorting

Had what was supposed to be a 'quick look' at converting the GA code from the last post to OpenCL. Got stuck at the first hurdle - sorting the classifier scores.

I wasn't going to be suckered into working on a 'good' solution, just one that worked. But the code I had just didn't seem fast enough compared to a single-threaded Java Arrays.sort() (only 16x faster), so I ended up digging deeper.

For starters I was using Batcher's sort, which, although it is parallel, turns out not to utilise the stream units very well in all passes, and particularly badly in the naive way I had extended it to sort more items than there are work-items in the work-group. I think I just used Batcher's because I got it to work at some point, and IIRC took it from Knuth.

So I looked into bitonic sort - unfortunately the "pseudocode" on the Wikipedia page is pretty much useless because it's some recursive Python - which is about as useful for demonstrating massive concurrency as a used bit of toilet paper. The pictures helped though.

So I started from first principles, wrote down the comparison pairs in binary, and worked out how to reasonably cheaply create compare-and-swap index pairs from sequential work-item IDs.

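For an 8-element sort, the compare-and-swap pairs are (flip = 1 means the comparison sense is reversed):

Pass 0, step 1:

| pair | binary | flip |
|---|---|---|
| 0-1 | 000 001 | 0 |
| 2-3 | 010 011 | 1 |
| 4-5 | 100 101 | 0 |
| 6-7 | 110 111 | 1 |

Pass 1, step 2:

| pair | binary | flip |
|---|---|---|
| 0-2 | 000 010 | 0 |
| 1-3 | 001 011 | 0 |
| 4-6 | 100 110 | 1 |
| 5-7 | 101 111 | 1 |

(Pass 1, step 1 uses the same pairs as pass 0, step 1, but with the flips of pass 1: 0, 0, 1, 1.)

Pass 2, step 4:

| pair | binary | flip |
|---|---|---|
| 0-4 | 000 100 | 0 |
| 1-5 | 001 101 | 0 |
| 2-6 | 010 110 | 0 |
| 3-7 | 011 111 | 0 |

(Pass 2, step 2 uses the same pairs as pass 1, step 2, and pass 2, step 1 the same pairs as pass 0, step 1; every flip in pass 2 is 0.)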

One notices that in each step listed the compared bit (bit 'pass' here) is all 0 for one element of the comparison, and all 1 for the other. The other bits simply increment naturally and are just the work-item index with a bit inserted. The flip is taken from bit 'pass' of the work-item index, which for the first step of each pass is the first bit after the inserted bit.

In short:

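// one work-group per array of N ints (N a power of two); N/2 work-items, each comparing one pair per step

kernel void bitonic_sort(global int *data, uint N) {

uint lid = get_local_id(0); // local work-item id

global int *array = data + get_group_id(0) * N; // base address of this group's array

for (uint bit = 0; (1u << bit) < N; bit++) {

// extract 'flip' indicator - 1 means use the opposite sense for this block

uint flip = (lid >> bit) & 1;

for (int b = bit; b >= 0; b--) {

// insertion masks for bit position b

uint upper = ~0u << (b + 1);

uint lower = (1u << b) - 1;

// insert a 0 bit at position b into the index

uint p0 = ((lid << 1) & upper) | (lid & lower);

// insert a 1 bit at the same place

uint p1 = p0 | (1u << b);

int a0 = array[p0], a1 = array[p1];

// swap if out of order, with the sense reversed when flip is set

if ((a0 > a1) ^ flip) {

array[p0] = a1;

array[p1] = a0;

}

// every pair must finish this step before the next step starts

barrier(CLK_GLOBAL_MEM_FENCE);

}

}

}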
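To sanity-check the index arithmetic off the GPU, here is a minimal serial C sketch (my own emulation, not the kernel used for the timings below) that runs the same passes and steps. The innermost loop over lid stands in for the N/2 work-items that would run each step in parallel between barriers. The names bitonic_sort_emulated and cas are hypothetical.

```c
#include <assert.h>

/* compare-and-swap; flip = 1 reverses the sort sense for this pair */
static void cas(int *a, unsigned p0, unsigned p1, unsigned flip) {
    if ((unsigned)(a[p0] > a[p1]) ^ flip) {
        int t = a[p0];
        a[p0] = a[p1];
        a[p1] = t;
    }
}

/* Ascending bitonic sort of n ints, n a power of two. Each pass of the loop
   over lid emulates n/2 work-items doing one step in parallel (on the GPU
   there would be a barrier after every step). */
static void bitonic_sort_emulated(int *a, unsigned n) {
    assert(n >= 2 && (n & (n - 1)) == 0);
    for (unsigned bit = 0; (1u << bit) < n; bit++) {
        for (int b = (int)bit; b >= 0; b--) {
            unsigned upper = ~0u << (b + 1);   /* index bits above position b */
            unsigned lower = (1u << b) - 1;    /* index bits below position b */
            for (unsigned lid = 0; lid < n / 2; lid++) {
                unsigned flip = (lid >> bit) & 1;                    /* descending block? */
                unsigned p0 = ((lid << 1) & upper) | (lid & lower);  /* insert a 0 at b */
                unsigned p1 = p0 | (1u << b);                        /* insert a 1 at b */
                cas(a, p0, p1, flip);
            }
        }
    }
}
```

Sorting a reversed 8-element array with it should give 0..7 in order; the flip only ever depends on bit 'bit' of lid, never on the step.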

The nice thing about this is that all accesses are sequential within a given block, and the block size halves with each step of a pass. Which leads to the next little optimisation ...

Basically, once the block size (1<<b) is the same as the local work-group size, instead of going wide the work topology changes to running across the array in blocks of work-group size. This allows one to finish all the lower stages entirely in LDS memory. Although this approach is also much more 'cache friendly' than full passes at each offset, LDS just has much higher bandwidth than cache. Each block is accessed through the LDS via a workgroup-wide coalesced read/write, and an added bonus is that for the larger block sizes all memory accesses are fully sequential across the whole work-group, i.e. they are also fully coalesced. It might even be a reasonable merge sort algorithm for a serial processor, although I think the address arithmetic will be too expensive in that case unless the given CPU has an insert-bit instruction that makes room at the same time.
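A serial C sketch of that change in topology, again an emulation rather than OpenCL and not the actual kernel: while the compare distance is larger than the work-group, each step goes over the whole array; once it drops to the work-group size, each aligned block of 2*WG elements is copied into a stand-in for local memory, all remaining steps of the pass are finished there, and the block is written back. WG, insert0 and bitonic_sort_lds are hypothetical names, and WG is kept tiny so the sketch can be checked on small arrays. Note the flip still has to come from the global pair index.

```c
#include <assert.h>
#include <string.h>

#define WG 4  /* hypothetical work-group size: WG work-items, 2*WG elements per LDS block */

static void cas(int *a, unsigned p0, unsigned p1, unsigned flip) {
    if ((unsigned)(a[p0] > a[p1]) ^ flip) {
        int t = a[p0];
        a[p0] = a[p1];
        a[p1] = t;
    }
}

/* insert a 0 bit at position b of x */
static unsigned insert0(unsigned x, int b) {
    return ((x << 1) & (~0u << (b + 1))) | (x & ((1u << b) - 1));
}

static void bitonic_sort_lds(int *a, unsigned n) {
    assert(n >= 2 * WG && (n & (n - 1)) == 0);
    for (unsigned bit = 0; (1u << bit) < n; bit++) {
        int b = (int)bit;
        /* wide steps through "global" memory: n/2 pairs per step */
        for (; (1u << b) > WG; b--)
            for (unsigned i = 0; i < n / 2; i++) {
                unsigned p0 = insert0(i, b);
                cas(a, p0, p0 | (1u << b), (i >> bit) & 1);
            }
        /* every remaining step stays inside an aligned block of 2*WG elements */
        for (unsigned base = 0; base < n; base += 2 * WG) {
            int lds[2 * WG];  /* stand-in for local memory */
            memcpy(lds, a + base, sizeof lds);
            for (int s = b; s >= 0; s--)
                for (unsigned lid = 0; lid < WG; lid++) {
                    unsigned p0 = insert0(lid, s);
                    unsigned g = base / 2 + lid;  /* global pair index for the flip */
                    cas(lds, p0, p0 | (1u << s), (g >> bit) & 1);
                }
            memcpy(a + base, lds, sizeof lds);
        }
    }
}
```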

On closer inspection there may be another minor optimisation for the first LDS based stage - it could perform the previous stage in two parts whilst still retaining full-width reads using a register.

I'm testing by sorting 200 arrays, each with 8192 integers (which is what I need for the task at hand), using a single work-group per array on an original Radeon HD 7970.

| algorithm | time |
|---|---|
| Batcher's | 8.1 ms |
| bitonic | 7.6 ms |
| bitonic + LDS | 2.7 ms |
| Java sort (1 thread, initialised with random values) | 80.0 ms |

Nice!

I think? Sorting isn't something I've looked into very deeply on a GPU, so the numbers might be completely mediocre. In any event it's interesting that the bitonic sort didn't really make much difference until I utilised the LDS: although it requires fewer comparisons, it still needs the same number of passes over the data. Apart from that, I couldn't see a way to utilise LDS effectively with the Batcher's sort algorithm I was using, whereas with bitonic it just falls out automagically.

Got a roast lamb in the oven and cracked a bottle of red, so I'm off outside to enjoy the perfect xmas weather - it's sunny, 30-something, with a very light breeze. I'd had enough of family this year so I'm going it alone - I'll catch up with friends another day and family in a couple of weeks.

Merry X-mas!

Update: See also a follow-up where I explore extending this to element counts that aren't powers of two.

genetic algorithm experiments

For the last couple of days I've been playing with some genetic algorithm code to implement an object detector. This is the first time I've ever played with genetic algorithms, so this is simply exploration to become acquainted with the technique.

First I started with a local binary pattern histogram feature test based on the paper Genetic Based LBP Feature Extraction and Selection for Facial Recognition, but these were just too slow to evaluate for me to work out whether I was making any progress. It wasn't quite the problem I wanted to solve, but I did have the paper and am familiar with the algorithm itself.

So I changed to using a variation of my own 'single-bit-histogram' algorithm, which is much faster to evaluate because of both the mechanics of its implementation and the mathematics of the fitness determination. Because it generates a classifier and not a distance measure, evaluating fitness is O(N) rather than O(N^2). Initially my results seemed too good - and they were: a simple sort-order problem meant that a completely zero detector came out as perfect. After fixing that I still had some promising results, but my feature tests were just too small, so the discriminative ability wasn't very high.

When I left that for the day I didn't think it was really working that well because the feature tests weren't descriptive enough on their own - only a few single probes. But I was looking at the output a bit wrong - it doesn't need to be 'good', it just needs to have a low false-negative rate. The next morning I tried creating a more complex descriptor based on a 4x4 feature test - and this worked much better.

The GA

After reading a few light tutorials on the subject I basically came up with my own sexual and asexual (i.e. copy with mutate) breeding rules and mucked about with the heuristics a bit. No doubt that throws out years of research, but without building up some understanding of how it works myself I won't understand it properly. So the rules are probably not ideal, but they seem to work here.

The basic asexual breeding rule is:

- Randomly grow or shrink the number of genes by 1 (within a specific range);

- Copy genes, potentially mutating each bit separately;

- If the chromosome grew, add a new random gene.

And sexual breeding:

- Concatenate all genes of both parents;

- Randomly permute the whole set;

- Choose a new size which is half the combined gene length +/- 1 (within a specific range);

- Truncate to the new size;

- Randomly mutate every bit.
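The two rules above might be sketched in C like this. It's only an illustration under assumptions of mine: genes are opaque 32-bit words, the gene count is limited to a hypothetical MIN_GENES..MAX_GENES range, mutation flips each bit independently with probability p, and rand() stands in for a proper random source.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>

#define MIN_GENES 1   /* hypothetical allowed range for the gene count */
#define MAX_GENES 16

/* hypothetical chromosome: a variable-length list of opaque 32-bit genes */
typedef struct {
    int n;
    uint32_t g[MAX_GENES];
} chromosome;

static uint32_t rand32(void) {
    uint32_t r = 0;
    for (int i = 0; i < 4; i++)
        r = (r << 8) | (uint32_t)(rand() & 0xff);
    return r;
}

/* flip each bit independently with probability p */
static uint32_t mutate(uint32_t g, double p) {
    for (int b = 0; b < 32; b++)
        if (rand() < p * RAND_MAX)
            g ^= 1u << b;
    return g;
}

static int clamp_genes(int n) {
    return n < MIN_GENES ? MIN_GENES : n > MAX_GENES ? MAX_GENES : n;
}

/* asexual: grow or shrink by one, copy with mutation, add a random gene if grown */
static void breed_asexual(const chromosome *a, chromosome *c, double p) {
    int n = clamp_genes(a->n + ((rand() & 1) ? 1 : -1));
    int copied = n < a->n ? n : a->n;
    for (int i = 0; i < copied; i++)
        c->g[i] = mutate(a->g[i], p);
    if (n > a->n)
        c->g[n - 1] = rand32();
    c->n = n;
}

/* sexual: pool both parents' genes, shuffle, keep about half (+/- 1), mutate */
static void breed_sexual(const chromosome *a, const chromosome *b, chromosome *c, double p) {
    uint32_t pool[2 * MAX_GENES];
    int m = 0;
    for (int i = 0; i < a->n; i++) pool[m++] = a->g[i];
    for (int i = 0; i < b->n; i++) pool[m++] = b->g[i];
    for (int i = m - 1; i > 0; i--) {  /* Fisher-Yates shuffle */
        int j = rand() % (i + 1);
        uint32_t t = pool[i];
        pool[i] = pool[j];
        pool[j] = t;
    }
    int n = clamp_genes(m / 2 + rand() % 3 - 1);
    assert(n <= m);
    for (int i = 0; i < n; i++)
        c->g[i] = mutate(pool[i], p);
    c->n = n;
}
```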

Genes are treated 'whole', and it obviously supports different numbers of genes for each individual chromosome. I found that a small number of tests seems to work better than a larger number, at least for the single-pixel classifier. As such I'm focusing at present on fixing the gene count in advance and running multiple tests to see what difference the gene count makes.

I tried a few different fitness tests:

- Order by area under ROC curve (or for simplicity, some approximation thereof);

- Order by rank of first incorrect result;

- Order by rank of last correct result.
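A sketch of how the three measures can be computed from one sorted list of scores. The sample type and fitness() are hypothetical, and the AUC here is computed exactly, as the fraction of positive/negative pairs ranked in the right order, rather than approximated. Tied scores count as half a correct ordering, and ties are treated pessimistically for the two ranks; that's one way (my assumption, not necessarily the fix used here) to stop a constant detector from scoring as perfect.

```c
#include <assert.h>
#include <stdlib.h>

/* hypothetical test result: classifier score plus ground truth */
typedef struct {
    float score;
    int positive;
} sample;

static int by_score_desc(const void *x, const void *y) {
    float a = ((const sample *)x)->score, b = ((const sample *)y)->score;
    return (a < b) - (a > b);
}

/* Sorts s best-first, then computes the AUC, the rank of the first negative
   and the rank of the last positive. */
static void fitness(sample *s, int n, double *auc, int *first_wrong, int *last_right) {
    assert(n > 0);
    qsort(s, (size_t)n, sizeof *s, by_score_desc);
    double good = 0;
    long pos = 0, neg = 0;
    *first_wrong = n;
    *last_right = -1;
    for (int i = 0; i < n;) {
        long p = 0, q = 0;
        int j = i;
        while (j < n && s[j].score == s[i].score) {  /* one group of tied scores */
            if (s[j].positive) p++; else q++;
            j++;
        }
        good += (double)q * pos + 0.5 * (double)p * q;  /* positives ranked above each negative */
        if (q && *first_wrong == n) *first_wrong = i;   /* pessimistic: start of tied group */
        if (p) *last_right = j - 1;                     /* pessimistic: end of tied group */
        pos += p;
        neg += q;
        i = j;
    }
    *auc = (pos && neg) ? good / ((double)pos * neg) : 0.0;
}
```

For an all-zero detector this gives an AUC of 0.5 and a first-wrong rank of 0, rather than a perfect score.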

These all result in slightly different classifiers - although the first tends to be the best. Combining a couple by simple concatenation also appears to create a better classifier.

Each generation I discard the worst N results, and then randomly reproduce (sexually or asexually) random pairs or individuals to replace them. For simplicity I'm just using uniform randomness rather than basing reproduction rates on fitness. I presume at worst this just reduces the rate of advancement.

I need to read up a bit more on the nomenclature and jargon used to describe the behaviour properly, but with a very small number of genes I tend to end up with poor genetic diversity, at least in the top few results (I'm dumping the top 5 every 100 generations). I think my random mutation isn't random enough during breeding - it should always mutate at least some bits rather than applying a low randomisation rate across all of them.

Still, despite these shortcomings, and although progress slows after a rapid initial improvement, it doesn't stall completely. I ran some very long experiments and even after 10 hours I was still getting an occasional improvement.

The Classifier

I need to write up the classifier a bit better than a blog post allows, so I won't go into the detail here. I think it's a novel use of local binary patterns.

But the current 4x4 'gene' consists of:

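struct gene4x4 {

unsigned int x:5; // (x,y) location of the test

unsigned int y:5;

unsigned int pad:22; // unused, pads the header out to 32 bits

unsigned int tests[8]; // 16 x 16-bit test parameters, two per word

};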

(x,y) is the location of the test, and the sixteen 16-bit values stored in the tests array are the parameters. For the descriptive power it seems to have, this is quite a compact descriptor. With a chromosome of as few as 2 of these genes I'm starting to get some interesting results, and at 4 genes I think I'm starting to get usable results. That's only 1024 bits of test parameters; this is something that can easily fit on an epiphany core even with a good few stages.
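How the 16 parameters are packed into tests[] isn't spelled out here, so this accessor is a hypothetical sketch assuming two 16-bit values per 32-bit word, with the even-numbered one in the low half. With 4 genes, the test parameters alone come to 4 x 8 x 32 = 1024 bits.

```c
#include <assert.h>
#include <stdint.h>

struct gene4x4 {
    unsigned int x:5;       /* (x,y) location of the test */
    unsigned int y:5;
    unsigned int pad:22;
    unsigned int tests[8];  /* 16 x 16-bit test parameters */
};

/* hypothetical accessor for parameter i (0..15), assuming the packing above */
static uint16_t gene_test(const struct gene4x4 *g, unsigned i) {
    assert(i < 16);
    return (uint16_t)(g->tests[i >> 1] >> ((i & 1) * 16));
}
```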

Data

As with any machine learning algorithm, dataset size and quality is another issue, but for now I'm just using what I have, which is data I've extracted from Color FERET. The positive training set is the left eye (from the front, i.e. right) at 32x16, and the negative training set is random samples from the same faces, excluding those very close to the eye. I'm just using the same set to test against at the moment.

I think though that because of the similarity of the eyes and the fact that you can easily tell them apart using simple geometry I will look at combining left and right eye detectors into a simple 'eye detector'. This saves the learning algorithm the hassles of trying to distinguish between left and right eyes as well as non-eye data. If I really needed to distinguish between the two I could train another detector for sub-classification - to be honest, I can't really see why you would need to.

Next

One thing I want to do is translate the algorithm to OpenCL so I can accelerate the generation rate. I already have the classifier written and it can score images insanely fast - so fast that I think I need to write the whole algorithm in OpenCL now, which wasn't my original intention. Being able to run a few hours' worth of testing in minutes really accelerates experimentation with the heuristics and other gene variations, so it's worth the day or so of effort it needs to get working.

Then I want to investigate whether I can turn these classifiers into a reliable cascade of classifiers - which is a critical step for both runtime and quality performance. My first thoughts are to use the ROC curve to choose a threshold such that some of the false positives are culled (but no false negatives), remove those from the data-set and then train a new classifier; and repeat until one reaches a satisfactory result. A variation may be to also cull at the true-positive end of the classifier if there is a particularly high first-false-positive rank. My gut feeling so far is that because each classifier is not too weak it shouldn't need too many stages to be very strong; but whether this pans out in practice or not is another matter.
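The first idea can be sketched as a single stage cut: take the lowest positive score as the threshold (so no positive is lost) and drop every negative below it before training the next stage. cull_stage is a hypothetical name, scores are assumed to be 'higher means more eye-like', and the variant that also culls at the true-positive end isn't covered.

```c
#include <assert.h>
#include <stddef.h>

/* One cascade-stage cut (hypothetical helper). Finds the highest threshold that
   keeps every positive (score >= t), then compacts the set in place, dropping
   negatives below it. Returns the number of samples left for the next stage. */
static size_t cull_stage(float *score, int *positive, size_t n, float *threshold) {
    int found = 0;
    float t = 0;
    for (size_t i = 0; i < n; i++)
        if (positive[i] && (!found || score[i] < t)) {
            t = score[i];
            found = 1;
        }
    assert(found);  /* need at least one positive */
    size_t m = 0;
    for (size_t i = 0; i < n; i++)
        if (positive[i] || score[i] >= t) {  /* survivors train the next stage */
            score[m] = score[i];
            positive[m] = positive[i];
            m++;
        }
    *threshold = t;
    return m;
}
```

Repeating this (train, cut, retrain on the survivors) until too few negatives remain gives the stage-by-stage loop described above.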

Then there are many things to try, from data improvements / different objects, to adding extra dimensions to the input data (e.g. multiple planes of different LBP codes which test for different characteristics; somewhat analogous to multiple Gabor wavelets). It should also be possible to directly create a multi-stage algorithm solely using the GA - which may be worth investigating.

The ultimate aim is a strong classifier which is also computationally efficient (ahem, yeah, kinda holy grail territory here, I'm not ambitious at all). I know already that it can be implemented very efficiently in ARM NEON assembly language - under 1 clock cycle per pixel tested(!). It's also simple enough to put in hardware. I'm just not sure yet whether I can make it strong enough to compare to other algorithms, which is of course the big question.

On a side note it's kind of cool having the computer just work away by itself coming up with a solution given very simple goals. For once. It normally feels like it's the one driving me.

Of course, being xmas, and being on leave, ... I may just drink wine instead! And it's about time I put down another homebrew. And cleaned the house a bit. Time for another #3 all over too.

e-port revisited

I can't remember if I posted this here or just discussed it on the forums, but here's an update on the 'eport' code. eport is a lightweight 1:1 synchronisation point designed to assign ownership of slots in a cyclic buffer with minimum overhead. This directly allows the utilisation of some of the specific features in the epiphany architecture.

Since I can't find the post (probably just a bad subject) here's an overview of the api:

- unsigned int eport_reserve(struct eport *)

  - Reserves a single slot in the port for the writer. The returned index is the slot number. Once this returns the writer has exclusive access to the given slot.

- void eport_post(struct eport *)

  - Indicates that a slot previously reserved is now owned by the receiver, with the implication that it contains valid data. Slots are automatically cycled in the same order they are reserved.

- unsigned int eport_wait(struct eport *)

  - Waits for data to be ready at the receiving end. The return value indicates the slot number.

- void eport_done(struct eport *)

  - Marks the oldest slot returned by eport_wait() as free, so the sender can re-use it.

OK, so my initial implementation used power-of-two sizes so the cyclic buffer index calculation (modulo) was cheap, and it only required 2 'pointer' values. However, because the pointers cycle at the buffer size, one of the slots always sits unused, and furthermore forcing a power-of-two size can easily lead to allocating more space than an algorithm needs. There just isn't enough space to waste like this. An additional problem I hit when working on a real application was that the implementation required every reserve to be paired directly with a post, and every wait to be paired directly with a done - which precluded using multiple slots in the buffer at once, which was kind of the whole point of it!

So I had a fiddle and came up with a new implementation which manages to allow all slots to be in use and also allows for arbitrary sizes without an expensive modulo operation. It only needs two extra local 'pointers' which are used to track multiple outstanding reserves/waits properly. If memory is tight the receiver can limit the working-set to exactly the amount it requires, or allow one extra free slot to allow for interleaving of work.

I've only just banged it up so it might not be correct or handle all the edge cases, but here it is anyway.

data structure

// Code Copyright (C) 2013 Michael Zucchi

// Licensed via GNU GPL version 3 or later.

struct eport {

volatile unsigned int head;

volatile unsigned int tail;

unsigned int index;

unsigned int next;

unsigned int size;

struct eport *other;

};

Each of these is allocated in pairs - one local to the sender and one local to the receiver. other points to the other one of the pair, and everything else apart from size is initialised to 0. The only cross-communication is that the sender updates head in the receiver, and the receiver updates tail in the sender - obviously an atomic operation with no round-trip required.

index is local to each end and tracks the next slot, modulo the size of the cyclic buffer. Likewise next indicates the next 'actual' slot being requested at each end.

reserve

unsigned int eport_reserve(struct eport *port) {

unsigned int next = port->next;

// Check for a free slot

while (next >= port->tail + port->size) {

EPORT_SLEEP();

}

port->next = next + 1;

// Increment index modulo size

unsigned int r = port->index;

port->index = (r+1) == port->size ? 0 : r+1;

return r;

}

Because the "pointers" run without modulo I can simplify the capacity test. I then manually keep track of the index modulo the size for use by the caller. By using next to track the allocation it detaches the allocation from the publishing so it properly handles multiple outstanding reserves.

EPORT_SLEEP() does nothing on epiphany but might call usleep(x) on GNU/Linux.

post

void eport_post(struct eport *port) {

unsigned int h = (port->head + 1);

port->other->head = h;

port->head = h;

}

Post is basically the same as it was before, except now it doesn't need to modulo the pointer. Since the slot is known to be owned by the sender all it has to do is update the pointers at both ends. The sender is the only thread that can write to head so it needs no arbitration.

wait

unsigned int eport_wait(struct eport *port) {

unsigned int next = port->next;

while (port->head == next)

EPORT_SLEEP();

port->next = next + 1;

// Track tail % size

unsigned int r = port->index;

port->index = (r+1) == port->size ? 0 : (r+1);

return r;

}

There is some obvious (and nice) symmetry with the reserve function here in the receiver. Again next is used to detach acceptance from recycling.

done

void eport_done(struct eport *port) {

unsigned int t = (port->tail + 1);

port->other->tail = t;

port->tail = t;

}

Done is similarly symmetric to the post function. And here the receiver is the only one who can ever write to tail, so it also needs no arbitration.

summary

So in short ... eport

- Allows for lock-free, ordered synchronisation of a fixed number of buffers between two cooperating cores;

- Allows for fully synchronous or n-buffered operation;

- Lightweight - only a single memory write is required at each post or done call.

When coupled with epiphany's blocking-free writes and limited memory it allows one to write streaming processors which write directly to the target core without needing any additional synchronisation and potentially asynchronously.

One drawback of the implementation above is that a given port is limited to 2^32-1 operations at a time before the maths breaks down, although that could be addressed by using 64-bit integers or some more complex pointer arithmetic.
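To see the slot bookkeeping in action, here is the code above gathered into one file and driven from a single thread, so nothing ever actually has to wait. Assumptions of mine: eport_init_pair is a hypothetical helper, EPORT_SLEEP is a no-op as on epiphany, and the capacity test in eport_reserve is changed from next >= tail + size to the unsigned difference next - tail >= size. Because unsigned arithmetic wraps modulo 2^32, that difference (like the equality test in eport_wait) should stay correct when the counters wrap, so this variation may be enough to get around the 2^32 limit without moving to 64-bit counters.

```c
#include <assert.h>

/* no-op busy-wait, as on epiphany */
#define EPORT_SLEEP() do { } while (0)

struct eport {
    volatile unsigned int head;
    volatile unsigned int tail;
    unsigned int index;
    unsigned int next;
    unsigned int size;
    struct eport *other;
};

/* hypothetical helper: link a sender/receiver pair, zeroing everything but size */
static void eport_init_pair(struct eport *tx, struct eport *rx, unsigned int size) {
    struct eport *p[2] = { tx, rx };
    assert(size > 0);
    for (int i = 0; i < 2; i++) {
        p[i]->head = 0;
        p[i]->tail = 0;
        p[i]->index = 0;
        p[i]->next = 0;
        p[i]->size = size;
        p[i]->other = p[1 - i];
    }
}

/* sender: claim the next free slot (wrap-safe capacity test) */
static unsigned int eport_reserve(struct eport *port) {
    unsigned int next = port->next;
    while (next - port->tail >= port->size)
        EPORT_SLEEP();
    port->next = next + 1;
    unsigned int r = port->index;
    port->index = (r + 1) == port->size ? 0 : r + 1;
    return r;
}

/* sender: hand the oldest reserved slot to the receiver */
static void eport_post(struct eport *port) {
    unsigned int h = port->head + 1;
    port->other->head = h;
    port->head = h;
}

/* receiver: wait for the next filled slot */
static unsigned int eport_wait(struct eport *port) {
    unsigned int next = port->next;
    while (port->head == next)
        EPORT_SLEEP();
    port->next = next + 1;
    unsigned int r = port->index;
    port->index = (r + 1) == port->size ? 0 : r + 1;
    return r;
}

/* receiver: give the oldest waited-for slot back to the sender */
static void eport_done(struct eport *port) {
    unsigned int t = port->tail + 1;
    port->other->tail = t;
    port->tail = t;
}
```

With a buffer size of 3 (not a power of two), all three slots can be reserved before any is posted, and the first one comes back as soon as the receiver calls eport_done().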

I'm pretty much done for today, but this is just another small step toward getting the 1-pass image scaler going. I think it should also end up a reasonable basis for writing a 1-pass 2-d separable convolution, wavelets, and so on. For some reason, although I'm just a little bit of work away from finishing it, I keep putting it off - today it's that the wind kept me awake all night and I'm too knackered to think straight. E.g. although I haven't compiled or debugged it, I have the whole 2-d bicubic scaler written; I just need to slot in this updated eport code. Another day.

On another note I'm finally on xmas leave - hopefully for a few months like last year, but I have a feeling I'm going to get roped in to doing a bit of other work which might cut it short. At this point I have a head full of ideas to code on, but I think for sanity's sake I will need to take a break at some point too.

Wondered why the cartoons were on telly so late ...

Oops, it's early not late. Somehow it got to 5am, and now it's nearly 6.

I was looking up Welsh accent videos after watching 'Utopia' on SBS, along with a bottle of red and a couple of rums on ice, and somehow 6 hours vanished before I realised. Probably didn't help that I only had cheese, olives, and other pickled condiments for dinner. It all seemed like a good idea at the time.

The last few weeks I've been working extra hours to try to burn out my contract before the xmas break, so I was already a bit wiped out, and I think I got a bit over-eager from wanting it to be over. Insomnia, poor sleep in general and even some annoying 'dreams' about bit reversal permutes and vertical FFT algorithms just compounded this. Got a meeting in a few hours - but it's only really a hand-off of pre-prepared work and I'm sure I can burn an iso or two.

I guess I'm just really looking forward to a couple of months off and started a bit early. Just 16 hours left, all my main tasks for the contract are done, and I'm pretty much over it at this point. But a big component is a few of those 'never ending' research-oriented tasks that have no defined completion milestones, so I still have plenty to poke at.

Hmm, forecast is 43 today - garden will be incinerated. It was forecast 41 yesterday, and from the look of the plants I can't tell if I'm waterlogging them or letting them dry out too much - I dumped a few hours' worth of rainwater tank on the garden trying to help it survive. Need some spot shades to stop the extremities burning off, since it's a lot hotter than that in full sun on the bare earth or on the black polyethylene picking buckets I'm using as pots for the herbs. Last year I measured over 70 on a wheelie bin and that wasn't even in full sun all day. Even the birds are barely singing well after sun-up - they must know what's coming in a few hours.

Better try to get a couple of zed's - can always sleep the arvo off. Damn eyes are killing me too, as they have been for a few weeks; bloody hayfever, and I can't be far away from needing glasses.

Android icon lists

I was hitting some performance / usability issues with a list of icons (sigh, again) ... and looking at the code I came up with last time, I just don't know what I was thinking. In part it stemmed from initially using an adapter without customising the view, so I was forced to use a standard ImageView.

Anyway, I think ... I finally worked out a relatively clean solution to a ListView which contains images that are loaded asynchronously.

In short:

- Create a sub-class of ImageView and use that wherever an icon in a list is used.

- Implement a separate loading/cache which loads images at the direction of this new ImageView via a thread. It needs to be able to throw away requests if they're not needed and generally serialise loading of images.

- Just set the image uri/url/file on the ImageView and let it handle aborting load of an image if it didn't use it.

- And this is the important bit, which gets around an annoying problem with Android: treat item 0 separately and just load its icon directly without caching.

Without the last item you can end up loading the image into the wrong ImageView and so end up with a missing icon. I recall having a lot of problems trying to work around this last time, but this is a much more reliable and simple solution.

Previously I was trying to use notifyDataSetChanged() each time an image loaded, as well as other nasty redirections, to try and update properly whilst still coping with Android (ab)using item 0 for its own nefarious purposes (I presume it's for layout).

Originally I needed to load remote URLs, so I couldn't just use setImageURI() (otherwise I probably would've just used that at the time - it was a very short-on-time prototype), and although I currently don't need that functionality, this manual code is smoother for the user and I can do other stuff like animations. The Android code will delay drawing the screen until the image is actually loaded, which adds a lot of judder, particularly if your images are large.

Still not as nice as the JavaFX stuff I did, but it'll suffice. I will have to do something similar in the internode radio app - the solution there is different again but not very good either.

FFT bit-reverse, NEON

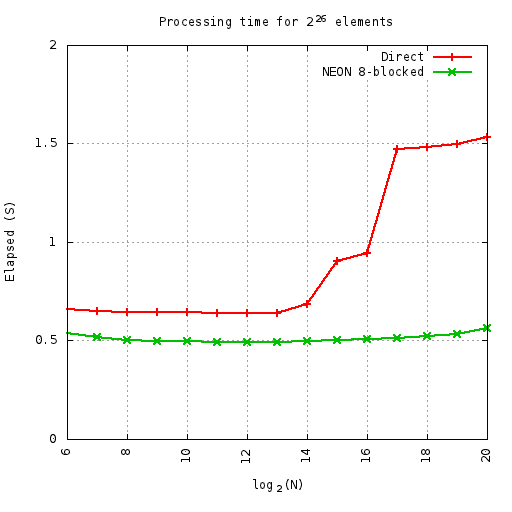

Hacked up a NEON version of the FFT permute I mentioned in the last post. Because it worked out much simpler to code up I went with the blocked-8 8-streaming version. Fortunately ARM has a bit-reverse instruction too.

Actually it ended up rather elegant; although I need to use 9 separate addresses within the inner loop, I only need to explicitly calculate one of them. The other 8 are calculated implicitly by a post-increment load, thanks to being able to fit all 8 into separate registers. The destination address calculation still needs a bit reversal, but 3 instructions are enough to calculate the target address and index to the next one.

This leads to a nice compact loop:

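1: rbit r12,r11 @ r12 = r11 with its bits reversed: the destination offset

add r11,r3 @ advance the counter by r3

add r12,r1 @ add the destination base address

vld1.64 { d16 },[r0]! @ load one complex float from each of the 8 source streams,

vld1.64 { d17 },[r4]! @ post-incrementing each stream pointer

vld1.64 { d18 },[r5]!

vld1.64 { d19 },[r6]!

vld1.64 { d20 },[r7]!

vld1.64 { d21 },[r8]!

vld1.64 { d22 },[r9]!

vld1.64 { d23 },[r10]!

subs r2,#1 @ decrement the loop count

vstmia r12, {d16-d23} @ store the 8 complex floats as one 64-byte block

bne 1b @ loop until the count reaches zero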

Despite this elegance and simplicity, compared to a straightforward C version the performance is only a bit better until the data no longer fits in the cache.
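For comparison, a plain C sketch of what the loop computes: it reads 8 sequential streams and writes blocks of 8 consecutive outputs, with the streams taken in the bit-reversed order 0 4 2 6 1 5 3 7. cfloat, rev_bits and permute_blocked8 are hypothetical names, a portable bit-reverse loop stands in for rbit, and this is not the C version used in the comparison.

```c
#include <assert.h>
#include <stddef.h>

typedef struct { float re, im; } cfloat;  /* one complex float, 8 bytes */

/* reverse the low `bits` bits of x (a portable stand-in for ARM's rbit) */
static unsigned rev_bits(unsigned x, unsigned bits) {
    unsigned r = 0;
    for (unsigned i = 0; i < bits; i++, x >>= 1)
        r = (r << 1) | (x & 1);
    return r;
}

/* dst[rev(i)] = src[i] for n = 2^logn elements, logn >= 3. Reads 8 sequential
   streams of n/8 elements and writes 8 consecutive outputs per iteration. */
static void permute_blocked8(cfloat *dst, const cfloat *src, unsigned logn) {
    assert(logn >= 3);
    size_t stride = (size_t)1 << (logn - 3);
    const cfloat *s[8];
    /* element t of every output block comes from stream rev3(t), so the
       stream pointers are set up in the order 0 4 2 6 1 5 3 7 */
    for (unsigned t = 0; t < 8; t++)
        s[t] = src + rev_bits(t, 3) * stride;
    for (size_t i = 0; i < stride; i++) {
        cfloat *d = dst + ((size_t)rev_bits((unsigned)i, logn - 3) << 3);
        for (unsigned t = 0; t < 8; t++)
            d[t] = s[t][i];
    }
}
```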

At least on this machine (parallella-16 - the ARM side) the benefits of the cache-friendly code are measurable and start at 32K elements.

Here I'm running so many cycles of the given permute, where the number of cycles x N = 2^26 - i.e. always doing the same number of total elements. This equates to 1M iterations at 64 elements. All elements are complex float. This means the smaller sizes do more loops than the larger ones - so the small up-tick on the ends is first from extra outer loop overheads, and then from extra inner loop overheads.

Also hit a weird thing with the parallella compiler: by default gcc compiles in Thumb mode rather than ARM mode.

Update: So I found out why my profiling attempts were so broken on parallella - the CPU governor.

# echo performance > /sys/devices/system/cpu/cpu0/cpufreq/scaling_governor

Fixes that. It also makes emacs a lot smoother (I was wondering why text-mode emacs across a gig-e connection was lagging so much). I was running some timings on a C implementation of Cooley-Tukey and noticed some strange results - it turned out the governor was only raising the clock once the problem got big enough, so time-per-element turned into a saw-tooth.

Actually I re-ran the above plot, and although the numbers did change a little bit, the changes were only minor. Oh, I also added alignment specifiers to the vld1 instructions - which made a small but significant difference. I then tried doubling-up on the above function - forming 16 consecutive output cfloat results. But this was consistently slower - I guess hitting 2x the width on output is causing more cache thrashing (although I'm not sure why it's slower when running in-cache).

Some noise woke me up around 2am and I ended up getting up to have something to eat and then hacked until sunrise due to insomnia (pity Sunday is the mowing day around here - so now I'm grumpy and tired and need to do my own noise-making in the back yard soon). I did the timing stuff above and then looked into adding some extra processing to the permute function - i.e. the first couple of stages of the Cooley-Tukey algorithm. NEON has plenty of registers to manage 3 stages for 8 elements, but it becomes a pain to shuffle the registers around to be able to take advantage of the wide instructions. So although I've nearly got a first cut done I'm losing interest (although simply being 'tired' makes it easy to become 'tired of' anything) - parallella will remove the need to fuck around with SIMD entirely, and there isn't much point working on a fast NEON FFT when ffts already exists (apart from the for-the-fun-of-it exploration). Well, I do have a possible idea for a NEON implementation of a "vertical" FFT which I will eventually explore - the ability to perform the vertical portion of a 2D FFT without a transpose could be a win. Because of the data arrangement this should be easier to implement whilst still gaining the FLOP multiplier of SIMD, but there are some other complications involved too.

FFT jiggery pokery

I've been meaning to poke at FFT calculation for a while and I finally got around to having a proper look.

First I just needed to calculate a single value so I did it by hand - I got some strange profiling results from Java vs jtransforms, but it turned out that due to its complexity it takes many, many cycles before the JIT fully optimises it.

Then I started from a trivial Cooley-Tukey implementation that allocated all its output arrays ... and I was surprised that, given its simplicity, it was only about 1/2 the speed of jtransforms. I tried a few variations and delved a bit into understanding the memory access patterns - although after an initial bit-reversed permute the memory patterns are trivial. It was still stuck at about 1/2 the speed of jtransforms.

I had a bit more of a think about the bit-reversing memory permute stage and figured it wouldn't work terribly well with CPU caches. First I just blocked the output by 8 elements (= 1 cache line), then re-arranged the reads so they come from 8 sequential streams. Strangely enough this second case is a bit slower on small workloads that fit in the cache, although it is a bit faster on larger ones. It's not really much of a difference either way.

(Diagram: bit-reversal permute for N = 32. Destinations d = 0, 1, 2, ..., 31 are written in order, reading from sources s = 0, 16, 8, 24, 4, 20, 12, 28, 2, 18, 10, 26, 6, 22, 14, 30, 1, 17, 9, 25, 5, 21, 13, 29, 3, 19, 11, 27, 7, 23, 15, 31.)

This shows the source-destination read/write pattern for a bit-reversal permute. Obviously with all those sparse reads the cache locality isn't going to be great. FWIW moving from s to d (sequential writes) proved to have better performance than from d to s (sequential reads).

(Diagram: the same permute with the output blocked into runs of 8 consecutive destinations, dddddddd; the 8 sources of each block are read in the order 0 4 2 6 1 5 3 7.)

This is the same, but blocked to 8 elements in the output. Unfortunately there is still a large spread of reads.

(Diagram: blocked output as above, but with the sources for each block taken from 8 sequential streams, in the order 0 4 2 6 1 5 3 7.)

This is an attempt at improving the previous one - although the reads are still in a broad spread, they are always confined to 8 sequential streams. It does improve the performance once you get a large enough problem, but on an x86 machine N needs to be over about 8M before it shows a minor improvement. I've yet to try it on ARM.

Then I realised that Integer.reverse() wasn't mapping to a single instruction on x86, and consequently over half of the processing time was spent just calculating the source index ... so I added some bit-fiddling to the 8-blocked implementations to precalculate the 8 fixed element offsets relative to the base offset. I also tried a lookup table for the non-blocked implementation, which made a big difference. Strangely, using a lookup table to get the base offset actually slowed it down - suggesting that the JVM is pre-calculating reverse(i) in the outer loop if it can.
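One common table-driven form, sketched in C rather than Java: a 256-entry byte-reversal table, four lookups per 32-bit value, and a shift to keep only the low bits. The post doesn't say what the table looked like (it may well have been a full per-index table), so rev8, rev8_init and rev_bits_lut are hypothetical.

```c
#include <assert.h>
#include <stdint.h>

static uint8_t rev8[256];  /* rev8[b] = the 8 bits of b in reverse order */

static void rev8_init(void) {
    for (int b = 0; b < 256; b++) {
        uint8_t r = 0;
        for (int i = 0; i < 8; i++)
            if (b & (1 << i))
                r |= (uint8_t)(0x80 >> i);
        rev8[b] = r;
    }
}

/* reverse the low `bits` bits of x (1 <= bits <= 32): reverse all 32 bits
   with four byte lookups, then shift the result down */
static uint32_t rev_bits_lut(uint32_t x, unsigned bits) {
    assert(bits >= 1 && bits <= 32);
    uint32_t r = (uint32_t)rev8[x & 0xff] << 24
               | (uint32_t)rev8[(x >> 8) & 0xff] << 16
               | (uint32_t)rev8[(x >> 16) & 0xff] << 8
               | (uint32_t)rev8[x >> 24];
    return r >> (32 - bits);
}
```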

Once this permute is done the memory access pattern is just in adjacent and sequential blocks - either depth or breadth first. The 8-blocked permute is about 130%-150% of the speed of a straight System.arraycopy() for 4-1K elements.

Actually another reason I worked toward an 8-blocked implementation was in order to perform the first 8-way fft stage at the same time as the fiddling - with that in place I got to within shouting distance (under 200% execution time) of jtransforms for some small fft sizes. I was curious what I had to do to get better than that so I tried hard-coding the last stage of a size-16 fft and managed to beat it - not that this has any real practical purpose mind you.

This gives me a starting point to tackle the problem on the parallella, which has its own challenges.

PS yes I found a book or two and many papers about both fft implementation and the permute step but I had time to experiment on my own.

Java, C, SSE, poor man's lambdas

So after being able to avoid them for decades I got sucked into having to do some matrix maths this week. I'm mostly just using a library, but there were some memory and performance problems so I had to investigate my own matrix multiply routine.
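For reference, the kind of single-threaded routine being compared might look like this sketch, assuming dense row-major double[] storage; it is not the library's or the author's code, and the names are hypothetical. The i-k-j loop order keeps the inner loop streaming sequentially through a row of B and a row of C instead of striding down columns of B.

```java
/** Hypothetical sketch: dense row-major matrix multiply. */
final class MatMul {

    private MatMul() {}

    /** Returns C = A * B for row-major A (m x k) and B (k x n) as a new m x n array. */
    static double[] multiply(double[] a, double[] b, int m, int k, int n) {
        if (a.length != m * k || b.length != k * n) {
            throw new IllegalArgumentException("dimension mismatch");
        }
        double[] c = new double[m * n];
        for (int i = 0; i < m; i++) {
            int cRow = i * n;
            for (int p = 0; p < k; p++) {
                double aip = a[i * k + p];
                int bRow = p * n;
                // Inner loop walks row p of B and row i of C sequentially.
                for (int j = 0; j < n; j++) {
                    c[cRow + j] += aip * b[bRow + j];
                }
            }
        }
        return c;
    }
}
```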

Java vs gcc

Mostly out of curiosity I tried comparing C to Java ... and the performance difference was negligible; sometimes the Java CPU time is even negligibly less. And I'm timing the whole programme run from the command line, so that includes JVM startup and just-in-time compilation. Given the JVM overheads I expected more of a difference in the obvious direction, so that was a nice surprise. I suppose I shouldn't really be surprised by the performance anymore ...

And then further curiosity led me to attempt a vector-based implementation, just using the gcc vector types. This was only about 2.4x faster; it would be worth it, but I just used threads and that works fast enough anyway.
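The threaded version can be as simple as giving each thread a contiguous band of output rows. This is a sketch with hypothetical names, using plain Thread objects with anonymous classes and final locals so it also compiles on Java 7. Each thread writes only its own rows of C, so no locking is needed, and join() makes the results visible to the caller.

```java
/** Hypothetical sketch: C = A * B with output rows split statically across threads. */
final class ThreadedMatMul {

    private ThreadedMatMul() {}

    /** Row-major A (m x k) times B (k x n) on nthreads threads; returns a new m x n array. */
    static double[] multiply(final double[] a, final double[] b, final int m, final int k, final int n, int nthreads) {
        if (a.length != m * k || b.length != k * n || nthreads < 1) {
            throw new IllegalArgumentException("bad dimensions or thread count");
        }
        final double[] c = new double[m * n];
        Thread[] threads = new Thread[nthreads];
        for (int t = 0; t < nthreads; t++) {
            // Thread t owns output rows [lo, hi).
            final int lo = (int) ((long) m * t / nthreads);
            final int hi = (int) ((long) m * (t + 1) / nthreads);
            threads[t] = new Thread(new Runnable() {
                @Override
                public void run() {
                    for (int i = lo; i < hi; i++) {
                        for (int p = 0; p < k; p++) {
                            double aip = a[i * k + p];
                            for (int j = 0; j < n; j++) {
                                c[i * n + j] += aip * b[p * n + j];
                            }
                        }
                    }
                }
            });
            threads[t].start();
        }
        try {
            // join() also guarantees the workers' writes to c are visible here.
            for (Thread th : threads) {
                th.join();
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for workers", e);
        }
        return c;
    }
}
```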

The vector implementation in gcc is simple, one just defines a vector type and then it's much the same as OpenCL, although one must ensure the data is aligned properly otherwise performance is pants.

TBH I'm a little disappointed hotspot isn't doing any SSE optimisations here automatically (or maybe it is, but the execution time is too close to the non-vector C version for that to seem likely).

WorkPool

To implement the multi-threaded code I started using a poor man's implementation of lambdas, or at least of the parallel foreach part, which is IMHO the main point of interest. I'm waiting for the JDK 8 GA release before pushing any Java 8 requirement onto my customer. It doesn't work as well as the lambda code in JDK 8, but it isn't too far off and the syntax is fine as far as I'm concerned.

For simplicity I just have a static class which manages one thread per cpu with a simple static foreach call:

public class WorkPool {

    // ... threads stuff ...

    public interface WorkItem {
        public void accept(int i);
    }

    public static synchronized void foreach(int start, int end, WorkItem job) {
        // ... statically partition work across threads ...
        // ... pass job to threads ...
        // ... await completion ...
    }

}

With obvious usage:

WorkPool.foreach(0, N, new WorkItem() {
    public void accept(int i) {
        array[i] = blah ...;
    }
});
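The elided parts of WorkPool could be filled in along these lines. This is a hypothetical sketch, not the actual class: it assumes a fixed pool of daemon threads from Executors.newFixedThreadPool(), one contiguous index range per CPU, and Future.get() for the wait, and it sticks to Java 7 syntax.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;
import java.util.concurrent.ThreadFactory;

/** Hypothetical sketch of a static-partitioning parallel foreach. */
final class WorkPool {

    public interface WorkItem {
        void accept(int i);
    }

    private static final int NTHREADS = Runtime.getRuntime().availableProcessors();

    // Daemon threads so an idle pool doesn't keep the JVM alive.
    private static final ExecutorService POOL = Executors.newFixedThreadPool(NTHREADS, new ThreadFactory() {
        @Override
        public Thread newThread(Runnable r) {
            Thread t = new Thread(r, "WorkPool");
            t.setDaemon(true);
            return t;
        }
    });

    private WorkPool() {}

    /** Calls job.accept(i) for every i in [start, end), one contiguous range per thread. */
    public static synchronized void foreach(int start, int end, final WorkItem job) {
        int n = end - start;
        if (n <= 0) {
            return;
        }
        int parts = Math.min(NTHREADS, n);
        List<Future<?>> pending = new ArrayList<Future<?>>(parts);
        for (int t = 0; t < parts; t++) {
            final int lo = start + (int) ((long) n * t / parts);
            final int hi = start + (int) ((long) n * (t + 1) / parts);
            pending.add(POOL.submit(new Runnable() {
                @Override
                public void run() {
                    for (int i = lo; i < hi; i++) {
                        job.accept(i);
                    }
                }
            }));
        }
        // Await completion; Future.get() also makes the workers' writes visible here.
        try {
            for (Future<?> f : pending) {
                f.get();
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting", e);
        } catch (ExecutionException e) {
            throw new RuntimeException(e.getCause());
        }
    }
}
```

The anonymous-class usage pattern works against this sketch as long as the interface is qualified as WorkPool.WorkItem (or imported).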

It's a bit clumsy without the 'effectively final' of Java8, and arguably clumsy due to the syntax but if each WorkItem does a sizable amount of work the overheads are acceptable. More importantly it just gets me thinking about how to solve problems in ways that will map immediately to Java8 when I start using it.

There's some other interesting stuff about the parallel execution model that I want to talk about, but I'll leave that for another post. It's about mapping non-rectilinear workloads to a linear index, and job execution order.

On another note, I keep running into problems with thread job management in Java and keep ending up writing custom solutions. Executors seem like a great idea ... and they probably are for enterprise workloads, but they basically suck for interactive desktop programmes, where you may be getting many more update requests than you have time to process but you only need to keep (and must keep) the last one. Managing this with Future handles gets clumsy very fast. JavaFX comes with a new set of 'worker thread' primitives which I haven't really looked into much; they seemed to be light wrappers over existing functionality anyway and only seemed to muddy the waters last time I had a look.
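For the keep-only-the-latest case, a single worker thread plus a one-slot mailbox is often enough. This is a sketch with hypothetical names, Java 7 compatible: submit() overwrites any request the worker hasn't picked up yet and never waits for processing, and the worker handles requests outside the lock, so at most one request is in flight and at most one is waiting.

```java
/** Hypothetical sketch: a worker that only ever processes the newest pending request. */
final class LatestOnlyWorker<T> {

    /** Callback run on the worker thread. */
    public interface Handler<R> {
        void handle(R request);
    }

    private final Handler<T> handler;
    private final Thread thread;
    private T pending;       // newest request not yet taken; guarded by this
    private boolean stopped; // guarded by this

    LatestOnlyWorker(Handler<T> handler) {
        this.handler = handler;
        this.thread = new Thread(new Runnable() {
            @Override
            public void run() {
                loop();
            }
        }, "latest-only-worker");
        thread.setDaemon(true);
    }

    void start() {
        thread.start();
    }

    /** Replaces any request the worker has not taken yet. */
    synchronized void submit(T request) {
        if (request == null) {
            throw new NullPointerException("request");
        }
        pending = request;
        notifyAll();
    }

    /** Asks the worker to exit; a request still pending is dropped. */
    synchronized void stop() {
        stopped = true;
        notifyAll();
    }

    private void loop() {
        while (true) {
            T next;
            synchronized (this) {
                while (pending == null && !stopped) {
                    try {
                        wait();
                    } catch (InterruptedException e) {
                        return;
                    }
                }
                if (stopped) {
                    return;
                }
                next = pending;
                pending = null;
            }
            // Handle outside the lock so submit() stays cheap; anything submitted
            // meanwhile overwrites the slot and only the newest survives.
            handler.handle(next);
        }
    }
}
```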

My current implementation of WorkPool uses a ThreadPoolExecutor, but I will look at custom thread code too (curiosity again). It also only does static scheduling for now, but it will be worth investigating something more dynamic.
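One dynamic alternative to static partitioning is to let threads claim fixed-size chunks from a shared AtomicInteger until the range is exhausted, so threads that finish early simply take more chunks. This is a sketch with hypothetical names and the chunk size left to the caller. It assumes end + chunk * nthreads fits in an int, and an exception thrown by the job only kills that worker thread, which a real version would want to propagate.

```java
import java.util.concurrent.atomic.AtomicInteger;

/** Hypothetical sketch: parallel foreach with dynamic, chunked work claiming. */
final class DynamicForeach {

    public interface WorkItem {
        void accept(int i);
    }

    private DynamicForeach() {}

    /** Calls job.accept(i) for every i in [start, end); threads claim chunk indices at a time. */
    static void foreach(int start, final int end, final int chunk, int nthreads, final WorkItem job) {
        if (chunk < 1 || nthreads < 1) {
            throw new IllegalArgumentException("chunk and nthreads must be positive");
        }
        // Next unclaimed index; assumes end + chunk * nthreads does not overflow an int.
        final AtomicInteger next = new AtomicInteger(start);
        Thread[] threads = new Thread[nthreads];
        for (int t = 0; t < nthreads; t++) {
            threads[t] = new Thread(new Runnable() {
                @Override
                public void run() {
                    int lo;
                    // Each claim hands out the next chunk; threads that finish early claim more.
                    while ((lo = next.getAndAdd(chunk)) < end) {
                        int hi = Math.min(lo + chunk, end);
                        for (int i = lo; i < hi; i++) {
                            job.accept(i);
                        }
                    }
                }
            });
            threads[t].start();
        }
        try {
            for (Thread th : threads) {
                th.join();
            }
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException("interrupted while waiting for workers", e);
        }
    }
}
```

Larger chunks mean less contention on the shared counter; smaller chunks balance uneven per-item work better.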

Copyright (C) 2019 Michael Zucchi, All Rights Reserved.

Powered by gcc & me!